An open source camera stack for Raspberry Pi using libcamera

Since we released the first Raspberry Pi camera module back in 2013, users have been clamouring for better access to the internals of the camera system, and even to be able to attach camera sensors of their own to the Raspberry Pi board. Today we’re releasing our first version of a new open source camera stack which makes these wishes a reality.

(Note: in what follows, you may wish to refer to the glossary at the end of this post.)

We’ve had the building blocks for connecting other sensors and providing lower-level access to the image processing for a while, but Linux has been missing a convenient way for applications to take advantage of this. In late 2018 a group of Linux developers started a project called libcamera to address that. We’ve been working with them since then, and we’re pleased now to announce a camera stack that operates within this new framework.

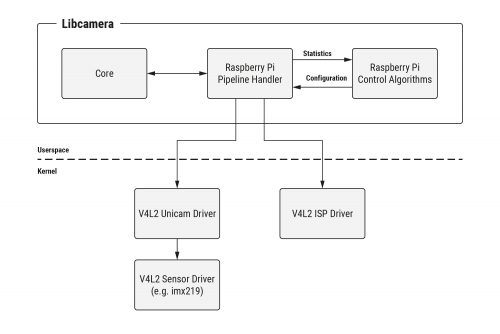

Here’s how our work fits into the libcamera project.

We’ve supplied a Pipeline Handler that glues together our drivers and control algorithms, and presents them to libcamera with the API it expects.

Here’s a little more on what this has entailed.

V4L2 drivers

V4L2 (Video for Linux 2) is the Linux kernel driver framework for devices that manipulate images and video. It provides a standardised mechanism for passing video buffers to, and/or receiving them from, different hardware devices. Whilst it has proved somewhat awkward as a means of driving entire complex camera systems, it can nonetheless provide the basis of the hardware drivers that libcamera needs to use.

Consequently, we’ve upgraded both the version 1 (Omnivision OV5647) and version 2 (Sony IMX219) camera drivers so that they feature a variety of modes and resolutions, operating in the standard V4L2 manner. Support for the new Raspberry Pi High Quality Camera (using the Sony IMX477) will be following shortly. The Broadcom Unicam driver – also V4L2‑based – has been enhanced too, signalling the start of each camera frame to the camera stack.

Finally, dumping raw camera frames (in Bayer format) into memory is of limited value, so the V4L2 Broadcom ISP driver provides all the controls needed to turn raw images into beautiful pictures!

Configuration and control algorithms

Of course, being able to configure Broadcom’s ISP doesn’t help you to know what parameters to supply. For this reason, Raspberry Pi has developed from scratch its own suite of ISP control algorithms (sometimes referred to generically as 3A Algorithms), and these are made available to our users as well. Some of the most well known control algorithms include:

- AEC/AGC (Auto Exposure Control/Auto Gain Control): this monitors image statistics in order to drive the camera exposure to an appropriate level.

- AWB (Auto White Balance): this corrects for the ambient light that is illuminating a scene, and makes objects that appear grey to our eyes come out actually grey in the final image.
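
As a rough illustration of the two bullets above, here is a minimal Python sketch. It is not the Raspberry Pi implementation: a damped proportional update stands in for AEC, the classic grey-world rule stands in for AWB, and the function names, target luminance and exposure limits are all assumptions made for the example.

```python
# Illustrative stand-ins only; names, targets and limits are hypothetical.

def aec_step(mean_luma, exposure_us, target_luma=0.18, damping=0.5,
             min_us=100.0, max_us=33000.0):
    """Move the exposure part of the way toward the value that would
    bring the measured mean luminance (0..1) to the target."""
    if mean_luma <= 0:
        # A completely black frame: open up as far as allowed.
        return max_us
    ideal = exposure_us * target_luma / mean_luma
    new_exposure = exposure_us + damping * (ideal - exposure_us)
    # Clamp to the range the (hypothetical) sensor mode supports.
    return min(max(new_exposure, min_us), max_us)


def grey_world_gains(mean_r, mean_g, mean_b):
    """Grey-world AWB: pick red and blue gains so that the average
    colour of the scene comes out neutral (R = G = B)."""
    return mean_g / mean_r, mean_g / mean_b
```

For example, a frame measured at half the target brightness asks for a longer exposure, and a scene with a blue cast gets a red gain above 1 and a blue gain below 1. Real algorithms are considerably more careful about flicker, highlights and mixed lighting.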

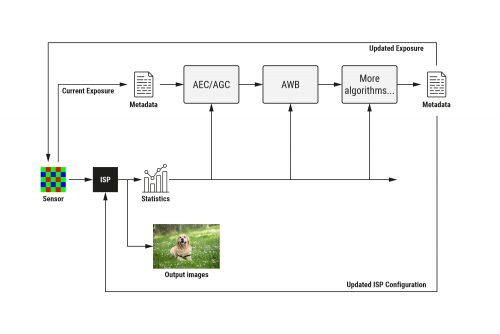

But there are many others too, such as ALSC (Auto Lens Shading Correction, which corrects vignetting and colour variation across an image), and control for noise, sharpness, contrast, and all other aspects of image processing. Here’s how they work together.

The control algorithms all receive statistics information from the ISP, and cooperate in filling in metadata for each image passing through the pipeline. At the end, the metadata is used to update control parameters in both the image sensor and the ISP.
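
The data flow just described can be sketched in a few lines of Python. This mirrors only the shape of the flow (statistics in, shared metadata filled in cooperatively, controls out); it does not use the libcamera API, and every name, key and constant below is hypothetical.

```python
# A toy per-frame control loop; all names and numbers are assumptions.

def agc(stats, metadata):
    """Choose an analogue gain that moves mean luminance toward a target."""
    target = 0.2
    gain = metadata.get("analogue_gain", 1.0) * target / stats["mean_luma"]
    metadata["analogue_gain"] = min(max(gain, 1.0), 8.0)


def denoise(stats, metadata):
    """Cooperate with AGC: more gain means more noise, so denoise harder."""
    metadata["denoise_strength"] = 0.1 * metadata["analogue_gain"]


def process_frame(stats, algorithms, previous=None):
    """Run each algorithm over the same statistics and shared metadata,
    then split the result into sensor controls and ISP controls."""
    metadata = dict(previous or {})
    for algorithm in algorithms:
        algorithm(stats, metadata)
    sensor_controls = {k: metadata[k] for k in ("analogue_gain",) if k in metadata}
    isp_controls = {k: metadata[k] for k in ("denoise_strength",) if k in metadata}
    return metadata, sensor_controls, isp_controls
```

Calling `process_frame({"mean_luma": 0.1}, [agc, denoise])` runs AGC first, so the denoise step can read the gain that AGC has just written into the metadata. The order of the list is what lets later algorithms build on earlier ones.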

Previously these functions were proprietary and closed source, and ran on the Broadcom GPU. Now, the GPU just shovels pixels through the ISP hardware block and notifies us when it’s done; practically all the configuration is computed and supplied from open source Raspberry Pi code on the ARM processor. A shim layer still exists on the GPU, and turns Raspberry Pi’s own image processing configuration into the proprietary functions of the Broadcom SoC.

To help you configure Raspberry Pi’s control algorithms correctly for a new camera, we include a Camera Tuning Tool. Or if you’d rather do your own thing, it’s easy to modify the supplied algorithms, or indeed to replace them entirely with your own.

Why libcamera?

Whilst ISP vendors are in some cases contributing open source V4L2 drivers, the reality is that all ISPs are very different. Advertising these differences through kernel APIs is fine – but it creates an almighty headache for anyone trying to write a portable camera application. Fortunately, this is exactly the problem that libcamera solves.

We provide all the pieces for Raspberry Pi-based libcamera systems to work simply “out of the box”. libcamera remains a work in progress, but we look forward to continuing to help this effort, and to contributing an open and accessible development platform that is available to everyone.

Summing it all up

So far as we know, there are no similar camera systems where large parts, including at least the control (3A) algorithms and possibly driver code, are not closed and proprietary. Indeed, for anyone wishing to customise a camera system – perhaps with their own choice of sensor – or to develop their own algorithms, there would seem to be very few options – unless perhaps you happen to be an extremely large corporation.

In this respect, the new Raspberry Pi Open Source Camera System is providing something distinctly novel. For some users and applications, we expect its accessible and non-secretive nature may even prove quite game-changing.

What about existing camera applications?

The new open source camera system does not replace any existing camera functionality, and for the foreseeable future the two will continue to co-exist. In due course we expect to provide additional libcamera-based versions of raspistill, raspivid and PiCamera – so stay tuned!

Where next?

If you want to learn more about the libcamera project, please visit https://libcamera.org.

To try libcamera for yourself with a Raspberry Pi, please follow the instructions in our online documentation, where you’ll also find the full Raspberry Pi Camera Algorithm and Tuning Guide.

If you’d like to know more, and can’t find an answer in our documentation, please go to the Camera Board forum. We’ll be sure to keep our eyes open there to pick up any of your questions.

Acknowledgements

Thanks to Naushir Patuck and Dave Stevenson for doing all the really tricky bits (lots of V4L2-wrangling).

Thanks also to the libcamera team (Laurent Pinchart, Kieran Bingham, Jacopo Mondi and Niklas Söderlund) for all their help in making this project possible.

Glossary

3A, 3A Algorithms: refers to AEC/AGC (Auto Exposure Control/Auto Gain Control), AWB (Auto White Balance) and AF (Auto Focus) algorithms, but may implicitly cover other ISP control algorithms. Note that Raspberry Pi does not implement AF (Auto Focus), as none of our supported camera modules requires it

AEC: Auto Exposure Control

AF: Auto Focus

AGC: Auto Gain Control

ALSC: Auto Lens Shading Correction, which corrects vignetting and colour variations across an image. These are normally caused by the type of lens being used and can vary in different lighting conditions

AWB: Auto White Balance

Bayer: an image format where each pixel has only one colour component (one of R, G or B), creating a sort of “colour mosaic”. All the missing colour values must subsequently be interpolated. This is a raw image format meaning that no noise, sharpness, gamma, or any other processing has yet been applied to the image

CSI-2: Camera Serial Interface (version) 2. This is the interface format between a camera sensor and Raspberry Pi

GPU: Graphics Processing Unit. But in this case it refers specifically to the multimedia coprocessor on the Broadcom SoC. This multimedia processor is proprietary and closed source, and cannot directly be programmed by Raspberry Pi users

ISP: Image Signal Processor. A hardware block that turns raw (Bayer) camera images into full colour images (either RGB or YUV)

Raw: see Bayer

SoC: System on Chip. The Broadcom processor at the heart of all Raspberry Pis

Unicam: the CSI-2 receiver on the Broadcom SoC on the Raspberry Pi. Unicam receives pixels being streamed out by the image sensor

V4L2: Video for Linux 2. The Linux kernel driver framework for devices that process video images. This includes image sensors, CSI-2 receivers, and ISPs
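
To make the Bayer entry above more concrete, here is a small Python sketch of a colour mosaic and a deliberately crude interpolation. It assumes an RGGB ordering and even image dimensions; real sensors use various orderings, and the hardware ISP interpolates far more carefully than this.

```python
# Illustrative only: RGGB ordering and the block-wise interpolation are
# assumptions for this example, not a description of the Broadcom ISP.

def mosaic_rggb(rgb):
    """Keep one colour component per pixel, in an RGGB pattern.
    rgb is a list of rows, each row a list of (r, g, b) tuples."""
    bayer = []
    for y, row in enumerate(rgb):
        out = []
        for x, (r, g, b) in enumerate(row):
            if y % 2 == 0:
                out.append(r if x % 2 == 0 else g)
            else:
                out.append(g if x % 2 == 0 else b)
        bayer.append(out)
    return bayer


def demosaic_blocks(bayer):
    """Fill in the missing colours one 2x2 cell at a time: every pixel in
    the cell gets the cell's R, the average of its two Gs, and its B."""
    height, width = len(bayer), len(bayer[0])
    rgb = [[None] * width for _ in range(height)]
    for y in range(0, height - 1, 2):
        for x in range(0, width - 1, 2):
            r = bayer[y][x]
            g = (bayer[y][x + 1] + bayer[y + 1][x]) / 2
            b = bayer[y + 1][x + 1]
            for dy in (0, 1):
                for dx in (0, 1):
                    rgb[y + dy][x + dx] = (r, g, b)
    return rgb
```

Note that two thirds of the colour information is discarded by the mosaic step, which is why the interpolated result can only approximate the original image.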

39 comments

Esbeeb

Wow!

Bayesian Bouffant, FCD

So the software will be open source, but the hardware is not and runs exclusively on the Rpi. I am not sure that this is better than using USB webcams.

James Hughes

Really? We’ve produced what is probably the world’s only fully open camera software stack and you are complaining that our HW isn’t open source? BTW, schematics for the new HQ camera are here: https://www.raspberrypi.org/documentation/hardware/camera/schematics/rpi_SCH_HQcamera_1p0.pdf

Robert Alderton

Only goes to show you can’t please all of the people all of the time.

From the rest of us, great job as always.. Keep up the good work. You’ve built a great platform and organization for makers and education alike.

Thanks

jdb

We really should have a bot that automatically makes this comment for us whenever we post an article on the blog that has “release” and “open-source” in the body text.

It would save so much valuable, productive time for everyone else on tenterhooks who is standing ready to type exactly the same words into the comment box.

PhilE

Some people are a bit hazy on the concept of positive reinforcement.

Anton

What kind of comment is this? You typed the comment on closed-source hardware and didn’t complain. Apparently this is as open as it currently gets; comparing the effort to a USB cam is ridiculous.

Ander

So much to enjoy learning here!

Laurent

Awesome !

Staying (and working) at home isn’t fun, but the Pi folks have managed to keep us entertained with new stuff, many thanks for that ^^

I had never heard of this library. Are there known applications that use it (according to your documentation, I guess the Qt framework…)?

If the purpose of this library is to simplify camera handling for user applications, it’ll be a big step forward. Can’t wait to see modified raspistill, raspivid and PiCamera :)

James Hughes

libcamera is very recent, in fact still under development. Probably not much stuff uses it yet.

Laurent Pinchart

The Qt library doesn’t use libcamera, but the libcamera project includes a Qt-based test application named qcam.

libcamera is relatively new, and is thus not widely used by applications at this point. We haven’t reached the first public API freeze milestone yet, so applications would need to be updated as libcamera gets further developed. This being said, it’s already a good time to try it out for application development and provide feedback :-)

Tomasz Figa

Also note that there is a V4L2-webcam compatibility layer in development, which would enable existing/legacy applications to capture using libcamera before they get a chance to be migrated to the native libcamera API. Last I heard, the basic functionality was already there, although some applications (e.g. qv4l2) still aren’t happy with it.

(The code is at https://git.linuxtv.org/libcamera.git/tree/src/v4l2)

Edwin Shepherd

Interesting, I will have to give this a go! The closed source nature of the GPU and ISP on the RPi has been frustrating. My only concern: if the GPU isn’t being used to process frames as they come in, will rapidly taking photos and then processing them on the CPU be slower if the CPU also has to do AWB etc.? I shall benchmark.

David Plowman — post author

We’re still using the hardware ISP, which is principally what determines the performance. Things like AWB actually don’t take a great deal of computation; we run such things asynchronously, and only every few frames. Please check out the documentation and source code links to find out more.

Anton

Most awesome, thank you!

Btw., not sure I understood fully, but did you write your own de-bayer algorithm as well? Can you please provide more details on this?

David Plowman — post author

We still use the hardware ISP on the SoC; that’s the only way to get reasonable performance, and that’s where the de-Bayering is done. We are taking over control of the ISP from the closed-source firmware, but we can’t change the capabilities that it has. Please check out the documentation and source code in the links above to understand more about what we’ve done.

James Hughes

AIUI, the HW on the GPU does the debayer, under control of the ARM. There are a number of HW blocks on the GPU that form the ISP; this open source software moves the control of those blocks from the firmware to the ARM. In addition, some software algorithms in the firmware, like AGC, have now been re-implemented on the ARM.

Ebrahim

I’m not familiar with the low-level details but, as usual, great work. You are really getting the max out of this hardware. I hope there is a roadmap for this ISP.

Michael Ratzel

I recently started working on a project using mmal to control the Pi Cam.

I’m not very far into this right now, so please correct me if I’m wrong. I understand libcamera as an open source, more standardized replacement for mmal, which sounds great tbh.

David Plowman — post author

Yes, the broad aim for us is to support an open source camera initiative (libcamera), as an alternative to proprietary firmware that our users can’t see or modify, and to give them as much control over the camera pipeline as we can, right down to the ISP control algorithms.

Michael Kirk

Does this mean we can attach any camera imager to the Pi CSI camera port? Or are we still restricted to Pi supported cameras (OV5647, IMX219 and the new IMX477)?

Is the encryption chip (found on the v2 Sony IMX219) still present? Does the new 12MP camera also have this encryption chip?

Michael Kirk

Found part of my answer. Schematic for 12MP IMX477 shows Atmel ATSHA204A encryption chip. Is encryption authentication still required with libcamera for non-compute module based Pis?

David Plowman — post author

Hi, answer here: https://www.raspberrypi.org/forums/viewtopic.php?f=43&t=273018#p1655382

Michael Kirk

Hi David, That link is to my forum post with same type of question. :-) Cheers, Mike

Gavin McIntosh

Does it have built in IR filter?

David Plowman — post author

The question of the IR filter is obviously down to the camera module. That said, with this announcement we’re enabling people to tune and use alternative camera modules, so hopefully users will have more choices in the future.

David Goadby

WOW! Great work you guys. I foresee some amazing applications using this. I wonder what the effects will be on the Python camera (picamera) options.

Dave Stevenson

Libcamera has its own Python bindings.

We may look at whether it is feasible to make a wrapper such that the API matches that of picamera and so some existing examples still work, but not at the moment.

libcamera itself doesn’t include codecs or multiple resizes, therefore some of the picamera functionality would be external to libcamera itself.

Laurent Pinchart

To be exact we *will* have python bindings :-) This is something we are seriously considering, but we will possibly delay doing so until the API freeze.

Gaurav

I had implemented the Raspberry Pi MIPI CSI-2 camera (IMX219) on an FPGA using Verilog HDL; my design only had debayer and no other image optimization.

I was looking for exactly how Raspberry Pi controls WB and gain. I wish this information had been available when I did my project in January.

Complete project details about interfacing the camera to an FPGA can be found at my blog:

https://www.circuitvalley.com/2020/02/imx219-camera-mipi-csi-receiver-fpga-lattice-raspberry-pi-camera.html

ed

great work, thanks :)!

I was wondering if it is possible just to capture a raw video stream? at what fps would I get to sustain the write speed of the pi?

Laurent Pinchart

Raw capture is currently inefficient for video streams (it has been developed for still image capture as the first target). We’re working on solving this, and the fix should land in libcamera by end of June at the latest according to our current tentative schedule.

Takanori Miki

Is the HW capable of capturing IMX219 8MP@30fps 10-bit Bayer RAW video? It requires quite a lot of BW.

Dafna

Hi, can you point to the repo/branch of the kernel implementation? I was digging in github.com/raspberrypi/linux and was not able to find it. Thanks

David Plowman — post author

Hi, I believe it’s here: https://github.com/raspberrypi/linux/tree/rpi-5.4.y

(I expect someone will correct me if I’m wrong!)

Ben

Thanks for the great work. I have one question.

“A shim layer still exists on the GPU, and turns Raspberry Pi’s own image processing configuration into the proprietary functions of the Broadcom SoC.”

Q1. Is this the permanent or temporary solution for the Open Source Camera Stack?

Q2. Could you explain a bit more about what functionalities are on the “shim layer” on GPU?

David Plowman — post author

Sorry for not seeing your question sooner (the Camera Board forum tends to get monitored more assiduously).

Anyway, I think your two questions are closely related. To answer both, we use the ISP (Image Signal Processor) on the Broadcom SoC under NDA, so I don’t expect we’ll ever be able to tell you much more about it. As such, the “shim layer” translates the “Raspberry Pi view of an ISP” into the register reads and writes of Broadcom’s ISP.

Now the “Raspberry Pi view”, as I called it, is documented fairly comprehensively here: https://github.com/raspberrypi/documentation/blob/master/linux/software/libcamera/rpi_SOFT_libcamera_1p0.pdf (chapter 5, I expect you’ve found that already). What it gets turned into is of course proprietary – but it really is just the generation of Broadcom’s ISP configuration, culminating in reads and writes to hardware registers.

So long as this arrangement with a proprietary ISP attached to the GPU exists, I think we will always need some kind of thin translation “shim layer” between the two. Of itself I wouldn’t describe it as “adding any functionality”, it’s “just” plumbing.

gallex

Does the HQ camera support the old MMAL API?

David Plowman — post author

Yes. You can use the HQ cam with all the same interfaces as our other cameras. MMAL, raspistill/raspivid, picamera and the open source libcamera-based stack.
