Twitter-triggered photobooth

A guest post today: I’m just off a plane and can barely string a sentence together. Thanks so much to all the progressive maths teachers we met at the Wolfram conference in New York this week; we’re looking forward to finding out what your pupils do with Mathematica from now on!

Over to Adam Kemény, from photobot.co in Hove, where he spends the day making robotic photobooths.

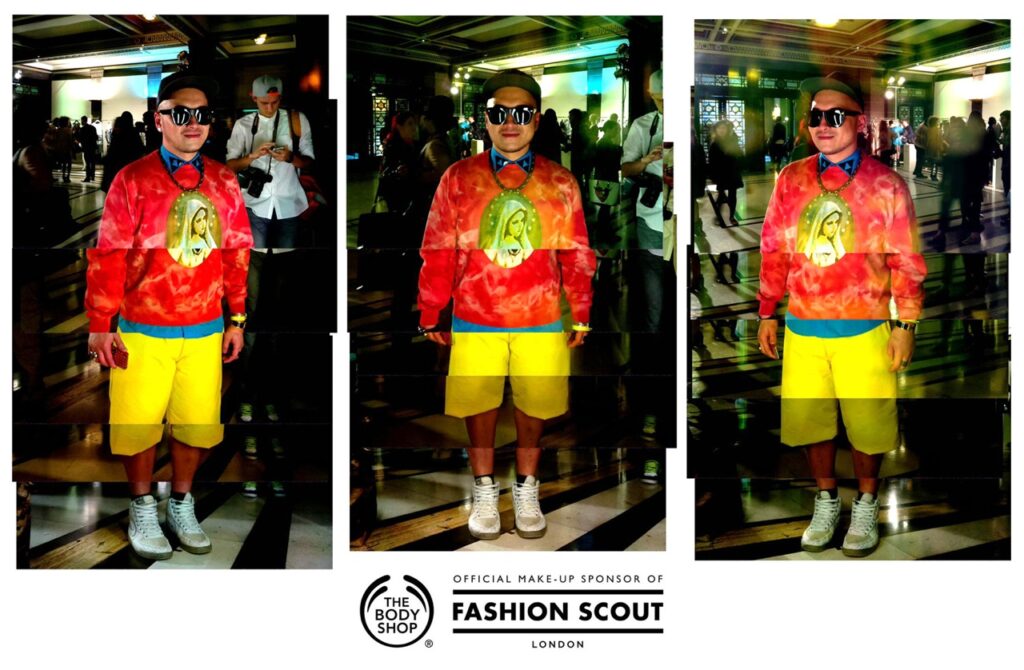

This summer Photobot.Co Ltd built what we believe to be the world’s first Twitter-triggered photobooth. Its first outing, at London Fashion Week for The Body Shop, allowed the fashion world to create unique portraits of themselves which were then delivered straight to their own mobile devices.

In February we took our talking robotic photobooth, Photobot, to London Fashion Week for The Body Shop to entertain the media and VIPs. We saw that almost every photostrip Photobot printed was quickly snapped with a smartphone and shared to Twitter and Facebook. That resonated with an idea we’d had for a photobooth that used Twitter as a trigger, rather than buttons or coins.

When The Body Shop approached us to create something new and fun for September’s Fashion Week we pitched a ‘Magic Mirror’ photobooth concept that would allow a Fashion Week attendee to quickly share their personal style.

When an attendee sent a tweet to the booth’s Twitter account, the Magic Mirror would respond by greeting them via a hidden display before taking their photo. The resulting image would then be tweeted back to them as well as being shared to a curated gallery.

Exploring the concept a little further, we realised that space constraints meant we’d need a multi-camera setup to capture a full-length portrait. We decided to take inspiration from the fragmented portrait concept that Kevin Meredith (aka Lomokev) developed for his work in Brighton Source magazine, and began experimenting with an increasing number of cameras. Raspberry Pis, with their camera module, soon emerged as a good candidate for the cameras due to their image quality, ease of networked control and price.

We decided to give the booth a more impactful presence by growing it into a winged dressing mirror with three panels. This angled arrangement would allow the subject to see themselves from several perspectives at once and, as each mirrored panel would contain a five-camera array, they’d be photographed by a total of 15 RPi cameras simultaneously. The booth would then composite the 15 photographs into one stylised portrait before tweeting it back to the subject.
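
As a rough illustration of the compositing step, here is a minimal Python sketch that works out where each of the 15 photos might be pasted on the final canvas. It is not the booth’s actual code: the function names, the tile size, the overlap value and the assumption that each panel’s five cameras are stacked vertically are all hypothetical.

```python
# Hypothetical layout helper: 3 panels side by side, 5 cameras stacked per panel.
# Tile size and overlap are illustrative values, not the booth's real settings.

def tile_offsets(panels=3, cams_per_panel=5, tile_w=320, tile_h=240, overlap=40):
    """Return (x, y) top-left paste positions, panel by panel, top to bottom."""
    offsets = []
    for panel in range(panels):
        for cam in range(cams_per_panel):
            x = panel * tile_w                # each panel gets its own column
            y = cam * (tile_h - overlap)      # tiles overlap vertically
            offsets.append((x, y))
    return offsets


def canvas_size(panels=3, cams_per_panel=5, tile_w=320, tile_h=240, overlap=40):
    """Return the (width, height) needed to hold every tile."""
    width = panels * tile_w
    height = tile_h + (cams_per_panel - 1) * (tile_h - overlap)
    return width, height


if __name__ == "__main__":
    for index, position in enumerate(tile_offsets()):
        print(index, position)
    print("canvas:", canvas_size())
```

With positions like these, an imaging library could paste each camera’s photo onto a single canvas. Keeping the layout in one small function also makes it easy to nudge individual tiles, which is the kind of adjustment a configuration tool would expose.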

As The Body Shop was using the Magic Mirror to promote a new range of makeup called Colour Crush, graphics on the booth asked the question “What is the main #colour that you are wearing today?”. When a Fashion Week attendee replied to this question via Twitter, the Magic Mirror would be triggered. Our software scanned tweets to the booth for a mention of any of a hundred colours and sent a personalised reply tweet based on the colour the attendee was wearing. For example, attendees tweeting that they wore the colour ‘black’ would have their photos taken before receiving a tweet from the booth that said “Pucker up for a Moonlight Kiss mwah! Love, @TheBodyShopUK #ColourCrush” along with their composited portrait.
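
The colour matching described above could be sketched in Python along these lines. This is only an illustration under stated assumptions, not the software we ran: the function names and the sender handle are hypothetical, and only the ‘black’ reply comes from the booth itself (the real table held around a hundred colours).

```python
import re

# Colour-to-reply table. Only the 'black' entry is taken from the post;
# a real table would hold one entry for each supported colour.
REPLIES = {
    "black": "Pucker up for a Moonlight Kiss mwah! Love, @TheBodyShopUK #ColourCrush",
}


def find_colour(tweet_text, colours=REPLIES):
    """Return the first known colour mentioned as a whole word, or None."""
    words = re.findall(r"[a-z]+", tweet_text.lower())
    for word in words:
        if word in colours:
            return word
    return None


def build_reply(sender, tweet_text):
    """Build the reply text for a tweet, or None if no known colour is mentioned."""
    colour = find_colour(tweet_text)
    if colour is None:
        return None
    return f"@{sender} {REPLIES[colour]}"


if __name__ == "__main__":
    # 'guest' is a made-up handle used purely for illustration.
    print(build_reply("guest", "I'm wearing BLACK today #colour"))
```

Matching on whole words means a tweet mentioning “blackberry” would not be mistaken for the colour black, and returning `None` for unmatched tweets leaves the caller free to decide whether the booth should still fire.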

The Magic Mirror was a challenge to build, mainly due to the complications of getting so many tiny cameras to align in a useful way. Our wonderful developer created a GUI that made it far easier to configure how the 15 photos were placed on the final composited image, saving the day and my sanity. The booth was a hit, and we’re always on the lookout for other creative uses for it, so we would welcome any contact from potential collaborators or clients.

13 comments

bertwert

Cool!

john

i love this

Oliver

Nice idea!

Any chance that you release your software?

And how – how fast – was the image taken after the tweet happened? The image shown above looks like the guy is posing for the picture so I guess he had some kind of instruction – some photobooth-3-2-1-SMILE?!

Adam Kemény

Thanks for the nice comments! Once the booth receives the tweet it does indeed have a visible countdown that lets the subject know that the photo is about to be taken.

A Philistine

Finally! Something interesting happening in Fashion Week

AndrewS

Neat! A bit like a smaller, social-media enabled version of http://www.raspberrypi.org/archives/5232 :)

Is the MacMini what stitches all the individual photos together? Is that part too resource-intensive to run on another Pi?

Adam Kemény

Yes, that Pi 3D scanner is a particularly cool creation, and along similar lines!

With the time and budget available for this work, the Mac mini became the default device for the compositing, but the code now theoretically allows a Pi to do all the work. I haven’t tried it with that many images yet, but will soon :)

mike

I like the idea, and very good work on the concept. Definitely a good start. My personal suggestion for improving it further is to arrange the pictures so the result looks more artistic (not so straight) rather than like a bad stitching job. Make the picture placement more random, as in Lomokev’s work. Maybe arrange the Pi cameras so they are not in an exact array? Or maybe it’s the guy’s shirt that is bothering me….

Good work on the project, very impressive.

Adam Kemény

Thanks Mike!

As it happens, manual rotation is built into the configuration tool for the app, so I can individually rotate each image as required. I didn’t get to properly test this functionality until the very last minute at Fashion Week, when I discovered that rotation created jaggy edges on each image. I wanted to avoid those, so I opted to keep everything level. I agree that rotation would improve the final composited image, so it’s a bug that definitely needs squashing!

Jim Manley

A variation of this and other recent multi-Pi/Camera rigs will be able to deliver 1080p quality “The Matrix” slow-motion, multi-angle bullet-dodging effects, assuming there’s software that can take advantage of the Pi’s GPU’s hardware capabilities for this sort of thing.

Jonno

this is creepy…

Terry Rector

Can you use the photos’ metadata to “stitch” the photos together? I have done this lots, and there is much written about doing it on the web; it just depends on that metadata being provided. Also, what power supplies are you using? I have been looking for a way to power several Pis without several power supplies and without cutting and splicing USB cables.

Great project, I see lots of utility in various commercial opportunities, including 3D imaging.

Adam Kemény

Hi Terry,

We deliberately opted for this overlaid look rather than attempting to smooth or stitch the images together, although given that the brain at the booth’s core is a Mac mini I’m sure stitching could have been an option.

Power supply-wise we just used powered USB hubs, one per mirror, each powering five Pis. Nice and easy.
