Wear your words with this subtitle hoodie

For lip readers, mask mandates had a major impact on communication. Zack Freedman’s Raspberry Pi-powered real-time subtitles aimed to bridge that gap.

Lip reading in lockdown

For some, myself included, lockdowns and mask mandates helped us realise just how dependent we are on lip reading. That struggle is what led Zack Freedman to dust off an old project idea and team up with Deepgram, a company based in the US and the Philippines that specialises in automatic speech recognition.

Building wearable subtitles

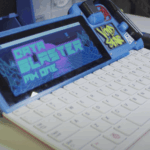

The stretched-bar display, a leftover from Zack’s Raspberry Pi 400 Cyberdeck project, is buddied up with a Raspberry Pi 3 B+, a lav mic, and a Codec Zero add-on board for Raspberry Pi to build one of the most “off the shelf” projects we’ve ever seen from Voidstar Labs.

With bits and pieces all successfully connected and tucked inside a couple of custom 3D-printed cases, it was time for Deepgram’s Python SDK to get to work. And work it did. Somewhat. For the average Joe using a speech-to-text API, Deepgram works remarkably well. But when you’re a fast-talking content creator who wants to see subtitles in real life, well…

ALL THE CODE!!!

600-odd lines of additional code later, Zack had exactly what he'd set out to create: a wearable subtitle display that takes his every word and relays it, in real time, across his chest. It really is a remarkable bit of kit that tackles a genuine real-world problem.

Show Zack some love

Help Zack continue to produce awesome content like his wearable subtitles by subscribing to him on YouTube. You can also hang out with Zack on Discord, and support his future builds by becoming a patron on Patreon.
