Five years of Raspberry Pi clusters

In this guest blog post, OpenFaaS founder and Raspberry Pi super-builder Alex Ellis walks us down a five-year-long memory lane explaining how things have changed for cluster users.

I’ve been writing about running Docker on Raspberry Pi for five years now and things have got a lot easier than when I started back in the day. There’s now no need to patch the kernel, use a bespoke OS, or even build Go and Docker from scratch.

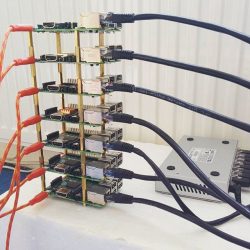

My stack of seven Raspberry Pi 2s running Docker Swarm (2016)

Since my first blog post and printed article, I noticed that Raspberry Pi clusters were a hot topic. They’ve only got even hotter as the technology got easier to use and the devices became more powerful.

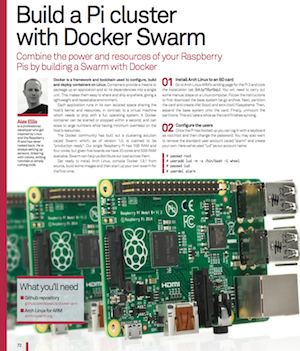

- Docker article in Linux magazine — March 2016

Back then we used ‘old Swarm’, which was arguably more like Kubernetes, with swappable orchestration and a remote API that could run containers. Load-balancing wasn’t built in, so we used Nginx to do that job.

I built out a special demo using kit from Pimoroni.com. Each LED lit up when an HTTP request came in.

- Docker load-balanced LED cluster Raspberry Pi — 17 May 2016

After that, I adapted the code and added in some IoT sensor boards to create a smart datacenter and was invited to present the demo at Dockercon 2016:

- IoT Dockercon Demo — 28 June 2016

Docker then released a newer version of Swarm also called ‘Swarm’ and I wrote up these posts:

- Live Deep Dive — Docker Swarm Mode on the Pi — Aug 2016

This is still my most popular video on my YouTube channel.

Now that more and more people were trying out Docker on Raspberry Pi (arm), we had to educate them about not running potentially poisoned images from third-parties and how to port software to arm. I created a Git repository (alexellis/docker-arm) to provide a stack of common software.

- 5 things about Docker on Raspberry Pi — Sep 2016

I wanted to share with users how to use GPIO for accessing hardware and how to create an IoT doorbell. This was one of my first videos on the topic, a live run-through in one take.

Did you know? I used to run blog.alexellis.io on my Raspberry Pi 3

- Hands-on Docker for Raspberry Pi — Nov 2016

Then we all started trying to run upstream Kubernetes on our 1GB RAM Raspberry Pis with kubeadm. Lucas Käldström did much of the groundwork to port various Kubernetes components and even went as far as to fix some issues in the Go language.

I wrote a recap on everything you needed to know including exec format error and various other things. I also put together a solid set of instructions and workarounds for kubeadm on Raspberry Pi 2/3.
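The exec format error mentioned above typically shows up when a container image holds binaries built for a different CPU architecture than the host, for example an x86-64 image started on an ARM Raspberry Pi. As a rough illustration (a sketch that is not taken from the original posts, with the helper name `elf_machine` invented for this example), a few lines of Python can read the architecture field of an ELF binary and compare it with what the machine reports:

```python
import platform
import struct
import sys

# e_machine values from the ELF specification for common targets.
ELF_MACHINES = {
    0x03: "x86 (32-bit)",
    0x28: "ARM (32-bit)",
    0x3E: "x86-64",
    0xB7: "AArch64 (64-bit ARM)",
}


def elf_machine(header: bytes) -> str:
    """Return the target architecture named in an ELF header."""
    if len(header) < 20 or header[:4] != b"\x7fELF":
        raise ValueError("not an ELF binary")
    # Offset 5 (EI_DATA) gives the byte order: 1 = little-endian, 2 = big-endian.
    byte_order = "<" if header[5] == 1 else ">"
    # e_machine is a 16-bit field at offset 18.
    (machine,) = struct.unpack_from(byte_order + "H", header, 18)
    return ELF_MACHINES.get(machine, f"unknown ({machine:#x})")


if __name__ == "__main__":
    # Inspect the Python interpreter itself unless a path is given.
    path = sys.argv[1] if len(sys.argv) > 1 else sys.executable
    with open(path, "rb") as f:
        print(f"{path}: built for {elf_machine(f.read(20))}")
    print(f"this machine reports: {platform.machine()}")
```

If the two lines disagree, for instance a binary built for x86-64 on a machine reporting `armv7l`, that binary is a likely candidate for an exec format error when it is run.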

Users often ask what a practical use-case is for a cluster. They excel at running distributed web applications, and OpenFaaS is loved by developers for making it easy to build, deploy, monitor, and scale APIs.

In this post you’ll learn how to deploy a fun Pod to generate ASCII text. From there you can build your own with Python or any other language:

This blog post was one of the ones that got pinned onto the front page of Hacker News for some time, a great feeling when it happens, but something that only comes every now and then.

The instructions for kubeadm and Raspbian were breaking with every other minor release of Kubernetes, so I moved my original gist into a Git repo to accept PRs and to make the content more accessible.

- Build your own bare-metal ARM cluster — Dec 2018

I have to say that this is the one piece of Intellectual Property (IP) I own which has been plagiarised and passed off the most.

You’ll find dozens of blog posts which are almost identical, even copying my typos. To begin with I found this passing-off of my work frustrating, but now I take it as a vote of confidence.

Shortly after this, Scott Hanselman found my post and we started to collaborate on getting .NET Core to work with OpenFaaS.

This led to us co-presenting at NDC London in early 2018. We were practising the demo the night before, and the idea was to use Pimoroni Blinkt! LEDs to show which Raspberry Pi a Pod (workload) was running on. We wanted the Pod to stop showing an animation and to get rescheduled when we pulled a network cable.

It wasn’t working how we expected, and Scott just said “I’ll phone Kelsey”, and Mr Hightower explained to us how to tune the kubelet tolerance flags.

As you can see from the demo, Kelsey’s advice worked out great!

Fast forward and we’re no longer running Docker, or forcing upstream Kubernetes into 1GB of RAM, but running Rancher’s light-weight k3s in as much as 4GB of RAM.

k3s is a game-changer for small devices, but also runs well on regular PCs and cloud. A server takes just 500MB of RAM and each agent only requires 50MB of RAM due to the optimizations that Darren Shepherd was able to make.

- Will it cluster? k3s on your Raspberry Pi — March 2019

I wrote a new Go CLI called k3sup (‘ketchup’) which made building clusters even easier than it was already and brought back some of the UX of the Docker Swarm CLI.

To help combat the issues around the Kubernetes ecosystem and tooling like Helm, which wasn’t available for ARM, I started a new project named arkade. arkade makes it easy to install apps, whether they use Helm charts or kubectl for installation.

k3s, k3sup, and arkade are all combined in my latest post which includes installing OpenFaaS and the Kubernetes dashboard.

In late March I put together a webinar with Traefik to show off all the OpenFaaS tooling, including k3sup and arkade, in a practical demo. The demo showed how to get a public IP for the Raspberry Pi cluster and how to integrate with GitHub webhooks and PostgreSQL.

The latest and most up-to-date tutorial, with everything set up step by step:

In the webinar you’ll find out how to get a public IP for your IngressController using the inlets-operator.

Take-aways

- People will always hate

Some people try to reason about whether you should or should not build a cluster of Raspberry Pis. If you’re asking this question, then don’t do it and don’t ask me to convince you otherwise.

- It doesn’t have to be expensive

You don’t need special equipment, you don’t even need more than one Raspberry Pi, but I would recommend two or three for the best experience.

- Know what to expect

Kubernetes clusters are built to run web servers and APIs, not games like you do with your PC. They don’t magically combine the memory of each node into a single supercomputer, but allow for horizontal scaling, i.e. more replicas of the same thing.
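To make the point about memory concrete, here is a minimal sketch of a first-fit scheduler in Python. It is a toy model and not how the Kubernetes scheduler is implemented: the node names, the sizes, and the `schedule` function are all made up for illustration, and it ignores the memory taken by the operating system and by k3s itself. What it does share with a real cluster is the rule that a replica is never split across nodes.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    memory_mb: int                      # total RAM on this Raspberry Pi
    used_mb: int = 0                    # RAM already promised to replicas
    replicas: list = field(default_factory=list)

    def free_mb(self) -> int:
        return self.memory_mb - self.used_mb


def schedule(nodes, workload, replicas, request_mb):
    """Place each replica on the first node with enough free memory.

    Returns the number of replicas that could be placed. A replica is
    never split across nodes, which is the point of the example.
    """
    placed = 0
    for i in range(replicas):
        for node in nodes:
            if node.free_mb() >= request_mb:
                node.used_mb += request_mb
                node.replicas.append(f"{workload}-{i}")
                placed += 1
                break
    return placed


def make_cluster():
    # Hypothetical cluster: four nodes with 2 GB of RAM each (8 GB in total).
    return [Node(f"pi-{n}", 2048) for n in range(1, 5)]


if __name__ == "__main__":
    # One replica asking for 4 GB fits nowhere, despite 8 GB in total.
    print(schedule(make_cluster(), "big-app", replicas=1, request_mb=4096))
    # Four replicas asking for 1 GB each are all placed.
    print(schedule(make_cluster(), "api", replicas=4, request_mb=1024))
```

In this model the single 4 GB workload cannot be placed on any node, while the same total demand split into four 1 GB replicas fits comfortably. That is what horizontal scaling means in practice.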

- Not everything will run on it

Some popular software like Istio, Minio, Linkerd, Flux and SealedSecrets do not run on ARM devices because the maintainers are not incentivised to make them do so. It’s not trivial to port software to ARM and then to support that on an ongoing basis. Companies tend to have little interest since paying customers do not tend to use Raspberry Pis. You have to get ready to hear “no”, and sometimes you’ll be lucky enough to hear “not yet” instead.

- Things are always moving and getting better

Compared with my opening statement, where we had to rebuild kernels from scratch and even build binaries for Go in order to build Docker, we live in a completely different world now. We’ve seen classic swarm, new swarm (swarmkit), Kubernetes, and now k3s become the platform of choice for clustering on the Raspberry Pi. Where will we be in another five years from now? I don’t know, but I suspect things will be better.

- Have fun and learn

In my opinion, the primary reason to build a cluster is to learn and to explore what can be done. As a secondary gain, the skills that you build can be used for work in DevOps/Cloud Native, but if that’s all you want out of it, then fire up a few EC2 VMs on AWS.

Recap on projects

Featured: my 24-node uber cluster, chassis by Bitscope.

- k3sup — build Raspberry Pi clusters with Rancher’s lightweight cut of Kubernetes called k3s

- arkade — install apps to Kubernetes clusters using an easy CLI with flags and built-in Raspberry Pi support

- OpenFaaS — easiest way to deploy web services, APIs, and functions to your cluster; multi-arch (arm + Intel) support is built-in

- inlets — a Cloud Native Tunnel you can use to access your Raspberry Pi or cluster from anywhere; the inlets-operator adds integration into Kubernetes

Want more?

Well, all of that should take you some time to watch, read, and try out, though probably less than five years. I would recommend working in reverse order, starting from either the Traefik webinar or the homelab tutorial, which includes a bill of materials.

Become an Insider via GitHub Sponsors to support my work and to receive regular email updates from me each week on Cloud Native, Kubernetes, OSS, and more: github.com/sponsors/alexellis

And you’ll find hundreds of blog posts on Docker, Kubernetes, Go, and more on my blog over at blog.alexellis.io.

22 comments

Dougie Lawson

As well as showing what a RPi cluster can do, perhaps you should also be explaining what it can’t do.

Anders

That would be a very long list, but this paragraph from the article should cover it:

“Kubernetes clusters are built to run web servers and APIs, not games like you do with your PC. They don’t magically combine the memory of each node into a single supercomputer, but allow for horizontal scaling, i.e. more replicas of the same thing.”

Dirk Broer

Would it be possible to run a multi-threaded application, using a RPi cluster, so that each pi does one thread of it?

crumble

Not without rewriting the whole program.

Threads share the memory of their process. So you have to broadcast memory changes to all nodes. This will be really slow and a pain in the a* to rewrite. You have to find all existing memory writes and decide if other nodes have to be informed. The changes will have a great impact on the timing, so you may run into race conditions which never showed up before.

César

But you can successfully run parallel and distributed programs, can’t you? Not the same thing, I know…

Tony

As crumble said, it’s not recommended to do this and it is not provided as standard, but in general, and with a lot of programming work, it should be possible.

James Carroll

I’m always amazed at how many people follow Raspberry Pi videos on YouTube just to make negative comments. Not critical ones, that have a point, just nasty stuff. It’s depressing.

Liz Upton

It’s depressing, but I don’t think it’s unique to Raspberry Pi videos: it’s universal. YouTube comments are a horrid place. (We know this very intimately. We have to moderate them.)

Kevin Davies

Sad state of affairs, isn’t it?

Michael Hunt

Thanks for the overview. My build from several years ago:

https://www.thingiverse.com/thing:1897376

I had some success at partitioning astronomy work but yeah, it’s hard work.

Michael Hunt

@Lodewijk a 63watt/18.5v HP laptop power supply through the buck converters down to 5v for the Pi and 12v for the switch.

Lodewijk

What do you use as a power supply?

Thomas

I use elixir (on the erlang beam) to run programs across pi 2/3/4 nodes in parallel. With elixir (and erlang) the sharing of memory, where processes run etc is all part of the language and not difficult to do at all.

Interesting articles, going to spend some time reading and watching them!

Thanks for the post

Wabo

Perhaps try GlusterFS (https://www.gluster.org/), as it works great on the Raspberry Pi.

Alex

Thanks for the talk/video it was super interesting and helpful! I am trying to get a distributed/sharded database or filesystem that is compatible with Spark running on an RPi4 cluster, any recommendations?

Eric Olson

Very nice to learn about k3sup. Do you know if it is possible to run a parallel MPI code on a Kubernetes cluster, and how? What about a parallel program with coarrays in modern Fortran?

Marc

I have been running a production cluster on Raspberry 2 (heat is a problem for >2) for 5 years too: https://www.instagram.com/p/BmspVeTggaz/

The FOSS server can be found here (an app server with an integrated distributed database over HTTP): https://github.com/tinspin/rupy

Emmanuel

Minio seems to work on arm64 now:

https://github.com/minio/minio/issues/5345#issuecomment-605487556

Binaries are also available for arm:

https://dl.min.io/server/minio/release/

Didn’t test it but hope that helps.

Toys Samurai

Sorry for asking such a simple question. I am still at the research stage of this matter. I wonder how memory works in general in the cluster. Let’s say I have something that demands 4GB of RAM upfront, and my cluster is made up of 4 Raspberry Pis, each equipped with 2GB. Will my project still run? The cluster will have a total of 8GB, but each node only has 2!

Federico Ramos

Nice summary and post. This reminds me of when I built a three-node cluster using standard Debian Linux, running things with OpenMP and MPI for little HPC demos.

Kevin Davies

Superb blog post. Thank you. You have given me much to think about.

Duncan

Thank you for the excellent write-up and for all your blog posts that I have avidly followed over the last few years. I know that Hypriot did some stress-tests to see how many Docker containers they could run on a single RPi several years ago. Do you know of any more up-to-date stats, e.g. for the RPi 4, that show how many ‘ordinary’ (non-optimized) containers can be run?
