Raspberry Pi clusters come of age

In today’s guest post, Bruce Tulloch, CEO and Managing Director of BitScope Designs, discusses the uses of cluster computing with the Raspberry Pi, and the recent pilot of the Los Alamos National Laboratory 3000-Pi cluster built with the BitScope Blade.

High-performance computing and Raspberry Pi are not normally uttered in the same breath, but Los Alamos National Laboratory is building a Raspberry Pi cluster with 3000 cores as a pilot before scaling up to 40 000 cores or more next year.

That’s amazing, but why?

I was asked this question more than any other at The International Conference for High-Performance Computing, Networking, Storage and Analysis in Denver last week, where one of the Los Alamos Raspberry Pi Cluster Modules was on display at the University of New Mexico’s Center for Advanced Research Computing booth.

The short answer to this question is: the Raspberry Pi cluster enables Los Alamos National Laboratory (LANL) to conduct exascale computing R&D.

The Pi cluster breadboard

Exascale refers to computing systems at least 50 times faster than the most powerful supercomputers in use today. The problem faced by LANL and similar labs building these things is one of scale. To get the required performance, you need a lot of nodes, and to make it work, you need a lot of R&D.

However, there’s a catch-22: how do you write the operating systems, network stacks, launch and boot systems for such large computers without having one on which to test it all? Use an existing supercomputer? No. The existing large clusters are fully booked 24/7 doing science, they cost millions of dollars per year to run, and they may not have the architecture you need for your next-generation machine anyway. Older machines retired from science may be available, but at this scale they cost far too much to use and are usually very hard to maintain.

The Los Alamos solution? Build a “model supercomputer” with Raspberry Pi!

Think of it as a “cluster development breadboard”.

The idea is to design, develop, debug, and test new network architectures and systems software on the “breadboard”, but at a scale equivalent to the production machines you’re currently building. Raspberry Pi may be a small computer, but it can run most of the system software stacks that production machines use, and the ratios of its CPU speed, local memory, and network bandwidth scale proportionately to the big machines, much like an architect’s model does when building a new house. To learn more about the project, see the news conference and this interview with insideHPC at SC17.

Traditional Raspberry Pi clusters

Like most people, we love a good cluster! People have been building them with Raspberry Pi since the beginning, because it’s inexpensive, educational, and fun. They’ve been built with the original Pi, Pi 2, Pi 3, and even the Pi Zero, but none of these clusters have proven to be particularly practical.

That’s not stopped them being useful though! I saw quite a few Raspberry Pi clusters at the conference last week.

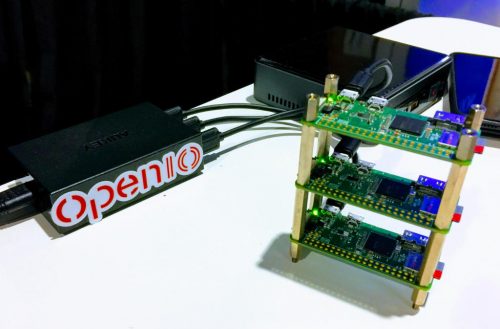

One tiny one that caught my eye was from the people at openio.io, who used a small Raspberry Pi Zero W cluster to demonstrate their scalable software-defined object storage platform, which on big machines is used to manage petabytes of data, but which is so lightweight that it runs just fine on this:

There was another appealing example at the ARM booth, where Berkeley Lab’s Singularity container platform was demonstrated running very effectively on a small cluster built with Raspberry Pi 3s.

My show favourite was from the Edinburgh Parallel Computing Centre (EPCC): Nick Brown used a cluster of Pi 3s to explain supercomputers to kids with an engaging interactive application. The idea was that visitors to the stand design an aircraft wing, simulate it across the cluster, and work out whether an aircraft that uses the new wing could fly from Edinburgh to New York on a full tank of fuel. Mine made it, fortunately!

Next-generation Raspberry Pi clusters

We’ve been building small-scale industrial-strength Raspberry Pi clusters for a while now with BitScope Blade.

When Los Alamos National Laboratory approached us via HPC provider SICORP with a request to build a cluster comprising many thousands of nodes, we considered all the options very carefully. It needed to be dense, reliable, low-power, and easy to configure and to build. It did not need to “do science”, but it did need to work in almost every other way as a full-scale HPC cluster would.

Some people argue Compute Module 3 is the ideal cluster building block. It’s very small and just as powerful as Raspberry Pi 3, so one could, in theory, pack a lot of them into a very small space. However, there are very good reasons no one has ever successfully done this. For a start, you need to build your own network fabric and I/O, and cooling the CM3s, especially when densely packed in a cluster, is tricky given their tiny size. There’s very little room for heatsinks, and the tiny PCBs dissipate very little excess heat.

Instead, we saw the potential for Raspberry Pi 3 itself to be used to build “industrial-strength clusters” with BitScope Blade. It works best when the Pis are properly mounted, powered reliably, and cooled effectively. It’s important to avoid using micro SD cards and to connect the nodes using wired networks. It has the added benefit of coming with lots of “free” USB I/O, and the Pi 3 PCB, when mounted with the correct air-flow, is a remarkably good heatsink.

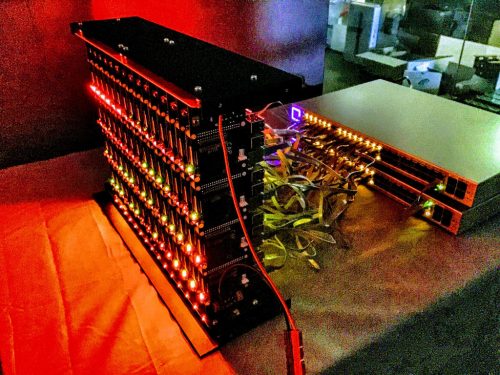

When Gordon announced netboot support, we became convinced the Raspberry Pi 3 was the ideal candidate when used with standard switches. We’d been making smaller clusters for a while, but netboot made larger ones practical. Assembling them all into compact units that fit into existing racks with multiple 10 Gb uplinks is the solution that meets LANL’s needs. This is a 60-node cluster pack with a pair of managed switches by Ubiquiti in testing in the BitScope Lab:

Two of these packs, built with Blade Quattro, and one smaller one comprising 30 nodes, built with Blade Duo, are the components of the Cluster Module we exhibited at the show. Five of these modules are going into Los Alamos National Laboratory for their pilot as I write this.
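The node and core counts follow directly from these figures. Here is a quick sketch of the arithmetic, assuming four cores per Raspberry Pi 3 (a property of the Pi 3 rather than something stated above); the variable names are chosen purely for illustration:

```python
# Node and core counts for the pilot, from the figures given above.
QUATTRO_PACK_NODES = 60   # one 60-node pack built with Blade Quattro
DUO_PACK_NODES = 30       # the smaller pack built with Blade Duo
MODULES_IN_PILOT = 5
CORES_PER_PI3 = 4         # assumption: quad-core CPU on each Raspberry Pi 3

# Each Cluster Module holds two Quattro packs and one Duo pack.
nodes_per_module = 2 * QUATTRO_PACK_NODES + DUO_PACK_NODES
pilot_nodes = MODULES_IN_PILOT * nodes_per_module
pilot_cores = pilot_nodes * CORES_PER_PI3

print(nodes_per_module, pilot_nodes, pilot_cores)  # 150 750 3000
```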

It’s not only research clusters like this for which Raspberry Pi is well suited. You can build very reliable local cloud computing and data centre solutions for research, education, and even some industrial applications. You’re not going to get much heavy-duty science, big data analytics, AI, or serious number crunching done on one of these, but it is quite amazing to see just how useful Raspberry Pi clusters can be for other purposes, whether it’s software-defined networks, lightweight MaaS, SaaS, PaaS, or FaaS solutions, distributed storage, edge computing, industrial IoT, and of course, education in all things cluster and parallel computing. For one live example, check out Mythic Beasts’ educational compute cloud, built with Raspberry Pi 3.

For more information about Raspberry Pi clusters, drop by BitScope Clusters.

I’ll read and respond to your thoughts in the comments below this post too.

Editor’s note:

Here is a photo of Bruce wearing a jetpack. Cool, right?!

16 comments

Robert Cromer

You guys need to start making a computer “erector set”. As a kid, I received an erector set when I was 7-8 years old for Christmas. I made everything one could think of. I later became a Mechanical Engineer. I designed parts for GE Gas Turbines, and when you switch on your lights, I have a direct connection to powering RPis all over the world.

You have most of the fundamental parts right now. You need a bus, something like the CM DDR3 bus. If the RPi 3B, or the RPi 4 whenever it comes out, had an adaptor or pinouts that connected to that bus, clustering would be easy. I could envision four quad processor CMs, a graphics processor/Bitcoin miner on a CM, a CM with SSD, etc. A computer erector set…

Phil

What’s wrong with using the switch and ethernet fabric as the “bus” on the existing hardware?

Eric Olson

Is there a short video presentation available that discusses the Los Alamos Pi cluster, how it was constructed, what it will be used for and why this solution was chosen over others?

Also, given the interest in OctoPi and other Pi clusters, could there be a section devoted to parallel processing in the Raspberry Pi Forum?

Bruce Tulloch

That’s a good idea. I think the time is right.

crumble

Is the aircraft wing demo freely available?

Bruce Tulloch

The EPCC Raspberry Pi Cluster is called Wee Archie (https://www.epcc.ed.ac.uk/discover-and-learn/resources-and-activities/what-is-a-supercomputer/wee-archie) and it (like the Los Alamos one we built) is a “model”, albeit for a somewhat different purpose. In their case it’s representative of Archer (http://www.archer.ac.uk/), a world-class supercomputer located in the UK and run as the National Supercomputing Service. Nick Brown (https://www.epcc.ed.ac.uk/about/staff/dr-nick-brown) is the guy behind the demo I saw at SC17. Drop him a line!

Anonymous

I’m glad I left their high performance computing department now. This is madness. The Fortran code base so prevalent at the labs is not going to run the same on the ARM architecture, when the supercomputers the code is meant to run on will be Intel architecture machines. This project is going to give the interns a playing field to learn what they should have learned in college.

Eric Olson

One of the pending issues with exascale computing is that it is inefficient to checkpoint a computation running on so many cores across so many boxes. At the same time, the probability that all nodes function faultlessly for the duration of the computation decreases exponentially as more nodes are added.
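To put rough numbers on that: if each node independently survives the run with probability 1 − p, then all N nodes survive with probability (1 − p)^N. This is a minimal sketch that assumes independent failures and a purely illustrative per-node failure probability; neither comes from the discussion here.

```python
def all_nodes_survive(nodes: int, p_node_fails: float) -> float:
    """Probability that every node survives, assuming independent failures."""
    return (1.0 - p_node_fails) ** nodes

# Hypothetical figure: a 0.1% chance that any given node fails during the run.
P_FAIL = 0.001

for n in (1, 750, 10_000, 100_000):
    print(f"{n:>7} nodes: {all_nodes_survive(n, P_FAIL):.3g}")
```

Even with a failure probability this small per node, the chance of a completely clean run shrinks geometrically with node count, which is why checkpointing matters so much at scale.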

Effectively utilizing distributed memory parallel systems has been compared to herding chickens. When contemplating flocks so large that it takes megawatts to feed them, it may be better to practice by herding cockroaches. This isn’t about performance tuning Fortran codes, but how to manage hardware faults in a massively distributed parallel computation. As mentioned in the press release, we don’t even know how to boot an exascale machine: By the time the last node boots, multiple other nodes have already crashed. In my opinion modelling these exascale difficulties with a massive cluster of Raspberry Pi computers is feasible. For example, dumping 1GB of RAM over the Pi’s 100Mbit networking is a similar data to bandwidth ratio as dumping 1TB of RAM over a 100Gbit interconnect.
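The closing comparison can be checked with a few lines of arithmetic. This sketch assumes decimal units (1 GB = 10^9 bytes, 100 Mbit = 10^8 bits, and so on) and ignores protocol overhead, so the figures are idealised rather than measured:

```python
def dump_seconds(ram_bytes: float, link_bits_per_second: float) -> float:
    """Ideal time to push ram_bytes over a link, ignoring all overhead."""
    return ram_bytes * 8 / link_bits_per_second

pi_dump = dump_seconds(1e9, 100e6)     # 1 GB of RAM over 100 Mbit/s
big_dump = dump_seconds(1e12, 100e9)   # 1 TB of RAM over 100 Gbit/s

print(pi_dump, big_dump)  # 80.0 80.0
```

Both cases come out at the same ideal dump time, which is the sense in which the ratio of data to bandwidth is similar.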

Bruce Tulloch

Spot on, Eric. The issue is one of scale: booting, running the machines, getting the data in and out, and check-pointing to avoid losing massive amounts of computational work.

Some interesting things I learned from this project…

One normally thinks of error rates of the order of 10^-18 as being pretty good, but at this scale one can run into them within the duration of a single shot on a big machine. At exascale this will be worse. The word the HPC community uses for this is “resilience”; the machines need to be able to do the science in a reliable and verifiable way despite these “real world” problems intervening in the operation of the underlying cluster.

They do a lot of “synchronous science” at massive scale, so the need for check-points is unavoidable, and Los Alamos is located at quite a high altitude (about 7,300 feet), so the machines are subject to higher levels of cosmic radiation. This means they encounter higher rates of “analogue errors”, which can cause computation errors and random node crashes.

All these sorts of problems can be modelled, tested and understood using the Raspberry Pi Cluster at much lower cost and lower power than on big machines. Having root access to a 40,000-core cluster for extended periods of time is like a dream come true for the guys whose job is to solve these problems.

Richatf

I make 120 Raspberry Pi clusters for 3D scanning. Use pure UDP multicasting to control them all using a single network packet transmission. Works really well :-)
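For readers who have not used it, the idea is that every node joins one multicast group and the controller sends a single datagram to that group. The following is a minimal Python sketch, not the commenter’s actual code: the group address, port, and JSON command format are all assumptions made for illustration.

```python
import json
import socket

GROUP = "239.1.2.3"   # hypothetical administratively scoped multicast group
PORT = 5007           # hypothetical port

def encode_command(name: str, **params) -> bytes:
    """Pack a command into one small JSON datagram (illustrative format)."""
    return json.dumps({"cmd": name, "params": params}).encode("utf-8")

def decode_command(datagram: bytes) -> dict:
    """Inverse of encode_command."""
    return json.loads(datagram.decode("utf-8"))

def send_to_all(payload: bytes, group: str = GROUP, port: int = PORT) -> None:
    """Controller side: one sendto() is delivered to every node in the group."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP) as sock:
        # A TTL of 1 keeps the packet on the local network segment.
        sock.setsockopt(socket.IPPROTO_IP, socket.IP_MULTICAST_TTL, 1)
        sock.sendto(payload, (group, port))

def listen(group: str = GROUP, port: int = PORT) -> dict:
    """Node side: join the group and block until one command arrives."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
        sock.bind(("", port))
        # ip_mreq structure: group address followed by the local interface (any).
        membership = socket.inet_aton(group) + socket.inet_aton("0.0.0.0")
        sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP, membership)
        datagram, _sender = sock.recvfrom(65535)
        return decode_command(datagram)
```

Each node would call `listen()` in a loop, and the controller would call something like `send_to_all(encode_command("capture", exposure_ms=10))`. UDP gives no delivery guarantee, so a real system would likely want acknowledgements or retries on top of this.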

Giovanni Scheepers

That’s very similar to what we want to do with a new grassroots project. But instead of a cluster whose nodes are physically close together, we are thinking of a ‘collective’ (kind of Borg, but nice…) for doing Three.js GPU 3D rendering. I’ve got a prototype running at http://sustasphere.org/ and, if you search Bing or Google for sustasphere, you will find the corresponding GitHub repository (not completely up to date, however). The current prototype renders (obviously) in your browser. With the collective, your browser calls will be routed to (hopefully) thousands of Raspberry Pis, each crunching a part of the 3D rendering in real time. In ‘my head’, I’m thinking about openSUSE stacked with Express.js.

For the energy supply of each node, we thank the wind and an Archimedean screw, with a hydraulic head, with a simple bicycle dynamo…

Nice, but why? We would like to honor an echo from the past (The Port Huron Statement) and introduce a virtual sphere of dignity, giving people the opportunity to express their emotions and again define the meaning of dignity. Imagine Mozart performing his Nozze di Figaro (for me a perfect example of bringing art to the people and sharing thoughts about morality), and being able to actually be there, move around, ‘count the nostrils’ and maybe even ‘get physical’.

Yep, you’ll need some GPU-collective for that.

Based on your experience, could you advise us on our road ahead? Help us make sound decisions?

Thank you.

Pablo

> recent pilot of the Los Alamos National Laboratory 3000-Pi cluster

It should read 750-Pi cluster: 5 modules of 150 Pis each, with 3000 cores in total (4 cores per Pi).

Joe

OK, I’m a newbie with Raspberry Pi 3s. But I was wondering if they used LFS with the BitScope Blade cluster? …and if so, how did it perform?

Thanks,

Joe

Bruce Tulloch

Not LFS but not Raspbian either (except for initial testing). They will eventually publish more to explain what they’re doing, but suffice to say it’s a very lean software stack which aims to make it easy for them to simulate the operation of big clusters on this “little” one.

Robin Watts

Why is it “important to avoid using the Micro SD cards” ?

I have an application in mind for a pi cluster, for which I will need local storage. If I can’t use the MicroSD card, then what?

Bruce Tulloch

When running a cluster of 750 nodes (as Los Alamos are doing), managing and updating images on all 750 SD cards is, well, a nightmare.

If your cluster is smaller it may not be a problem (indeed we often do just this for small Blade Racks of 20 or 40 nodes).

However, the other issue is robustness.

SD cards tend to wear out (how fast depends on how you use them). PXE (net) boot nodes do not wear out. Local storage may also not be necessary if you use an NFS or NBD served file system via the LAN, but the bandwidth available for accessing the remote storage may be a problem if all the nodes jump on the LAN at once, depending on your network fabric and/or NFS/NBD server bandwidth.

The other option is USB sticks (plugged into the USB ports of the Raspberry Pi). They are (usually) faster and (can be) more reliable than SD cards, and you can boot from them too!

All that said, there is no problem with SD cards used within their limitations in Raspberry Pi Clusters.
