Creating AI art with Raspberry Pi | #MagPiMonday

Creating AI art using a Raspberry Pi Zero 2 W shouldn’t work, but a determined software optimiser found a way. This #MagPiMonday, Vito Plantamura tells Rosie Hattersley how he did it.

Artificial intelligence has garnered thousands of headlines over the past few years, intriguing and appalling people in roughly equal measure.

AI art generation, whether musical, visual or written, has alarmed many a creator concerned about losing work, or having theirs ripped off.

What we’ve seen little of, to date, is canny AI generators that can run on a computer with modest processing capabilities. Training AI models requires powerful servers and multiple dedicated GPU (graphics processing unit) boards to create the model that can generate images.

After this training process comes “inference”, where a trained model is used to infer a result on a regular computer. Even this takes time on a powerful computer with multiple GBs of RAM.

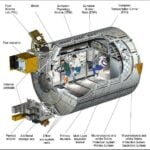

OnnxStream, however, makes inference possible on the tiny Raspberry Pi Zero 2 W, with just 512MB of RAM. It trades generation time for a much smaller memory footprint, and still turns out impressive imagery via Stable Diffusion.

“Generally major machine learning frameworks and libraries are focused on minimizing inference latency and/or maximizing throughput, all of which are at the cost of RAM usage. So I decided to write a super-small and hackable inference library specifically focused on minimizing memory consumption”, says maker Vito Plantamura of how OnnxStream came about.
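The trade-off Vito describes, giving up speed to save RAM, can be illustrated with a toy example. The sketch below is not OnnxStream's code. It is a minimal Python illustration, assuming a model made of simple dense layers whose weights are kept on disk in a hypothetical file format. Instead of loading every layer up front, it reads each layer's weights only when that layer runs and then discards them, so peak weight memory is roughly one layer rather than the whole model, at the cost of extra disk reads. The names `save_layers` and `run_streaming` are hypothetical.

```python
import array
import os
import struct
import tempfile

# Hypothetical on-disk format (native byte order, so only suitable for a file
# written and read on the same machine): for each layer, two uint32 values
# (rows, cols) followed by rows*cols float32 weights in row-major order.
FLOAT_SIZE = array.array("f").itemsize


def save_layers(path, layers):
    """Write a list of weight matrices (each a list of rows) to one file."""
    with open(path, "wb") as f:
        for matrix in layers:
            rows, cols = len(matrix), len(matrix[0])
            f.write(struct.pack("=II", rows, cols))
            f.write(array.array("f", (w for row in matrix for w in row)).tobytes())


def run_streaming(path, x):
    """Apply each stored layer to vector x, loading one layer at a time.

    Returns the output vector and the largest number of weights held in
    memory at once (a stand-in for peak memory use).
    """
    peak_floats = 0
    with open(path, "rb") as f:
        while True:
            header = f.read(8)
            if not header:  # end of file: no more layers
                break
            rows, cols = struct.unpack("=II", header)
            weights = array.array("f")
            weights.frombytes(f.read(rows * cols * FLOAT_SIZE))
            peak_floats = max(peak_floats, len(weights))
            # Dense layer y = W x, followed by a ReLU.
            x = [max(0.0, sum(weights[r * cols + c] * x[c] for c in range(cols)))
                 for r in range(rows)]
            del weights  # release this layer before the next one is read
    return x, peak_floats


if __name__ == "__main__":
    layers = [
        [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],  # 3x2 layer: 6 weights
        [[1.0, -1.0, 0.5]],                    # 1x3 layer: 3 weights
    ]
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "weights.bin")
        save_layers(path, layers)
        out, peak = run_streaming(path, [2.0, 3.0])
        print(out, peak)  # [1.5] 6
```

Here the whole model holds nine weights, but no more than six are ever in memory at once. On a real network with hundreds of megabytes per model the same idea is what makes a 512MB board viable, though a practical engine would also have to deal with activation memory and disk throughput.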

Enterprising guy

Although this is his first big Raspberry Pi project, Vito controls his home TV setup with a Raspberry Pi 4 and sometimes uses one for enterprise-grade tasks “running software jobs that took weeks to execute and that weren’t convenient to run on a desktop computer.” He bought a Raspberry Pi Zero 2 W as soon as it was released “because it was too cute!” and says the idea of running Stable Diffusion on such a small device was so intriguing he couldn’t resist.

With a day job writing enterprise software, Vito found the process of simplifying things to run on Raspberry Pi particularly appealing, because it “allows me to interact on a lower level with computers than what my job allows me to do at the moment.”

Power play

While his OnnxStream project is intended to run on Raspberry Pi Zero 2 W, most of the development took place on Vito’s Raspberry Pi 4. This meant he had plenty of processing power available to get the code running smoothly, and could then focus on optimising it and reducing its requirements knowing that it was fully operational. Optimising the OnnxStream code took a number of iterations, and only when memory consumption dropped below 300MB did he begin the final tests on Raspberry Pi Zero 2 W.

The first version of Stable Diffusion for OnnxStream consumed 1.1GB of RAM, far too much for a Raspberry Pi Zero 2 W, but he eventually “managed to get the three models of Stable Diffusion into the memory of the little Zero.”

He spent five months developing OnnxStream, testing on both Raspberry Pi 4 and Raspberry Pi Zero 2 W. He notes that “both run a full Linux operating system [so] compared to a full desktop or server system, there’s no limit to what you can do. The only limitation is, of course, speed and memory. That’s why running Stable Diffusion on the smaller of the two is such an intriguing idea: being able to overcome one of these two barriers brings Raspberry Pi Zero 2 W closer to a modern desktop system costing two orders of magnitude more.”

Vito’s detailed GitHub explains that the MAX_SPEED build option increases performance by about 10% on Windows, but by more than 50% on Raspberry Pi. Using this option, he was able to cut the generation time on his Raspberry Pi Zero 2 W from three hours to 1.5 hours. However, he warns that it consumes much more memory at build time, and there is a chance that the resulting executable will not work. Should that happen, he recommends rebuilding with MAX_SPEED set to OFF.
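The MAX_SPEED figures are easier to compare once generation time is converted into throughput. The short Python sketch below uses only the numbers quoted above (three hours before, 1.5 hours after); the `speedup` helper is hypothetical. Halving the time means images are produced twice as fast, a 100% throughput gain, which sits comfortably within the “more than 50%” Vito reports for Raspberry Pi. A gain of exactly 50% would only have brought three hours down to two.

```python
def speedup(before_hours: float, after_hours: float) -> tuple[float, float]:
    """Return (throughput gain in %, reduction in generation time in %)."""
    throughput_gain = (before_hours / after_hours - 1.0) * 100.0
    time_saved = (1.0 - after_hours / before_hours) * 100.0
    return throughput_gain, time_saved


gain, saved = speedup(3.0, 1.5)
print(f"{gain:.0f}% faster, {saved:.0f}% less time")  # 100% faster, 50% less time
print(3.0 / 1.5)  # 2.0 hours: the time a gain of exactly 50% would give
```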

OnnxStream makes use of XNNPACK to optimise how Stable Diffusion generates AI imagery. Testing was done using Raspberry Pi OS Lite 64-bit, with OnnxStream eventually able to run all but one Variational Autoencoder (VAE) on Raspberry Pi Zero 2 W. Vito’s GitHub has more details on setting up OnnxStream on a Raspberry Pi with “every KB of RAM needed to run Stable Diffusion.”

1 comment

Lee

You acknowledge that creators are concerned about the perpetual plagiarism engines that are generative models, but continue to just… uncritically showcase how someone figured out how to use it with something? Without any further admonishments, and a caption *encouraging* usage with the Popstar image?

You do realise that not only is your job at risk, but we have actual verifiable cases of articles being published using AI to both write and source information for articles, meaning the risk isn’t just “non-zero chance so it’s technically-possible-but-not-really”?
