Adafruit guest post: Machine learning add-ons for Raspberry Pi

Hi folks, Ladyada here from Adafruit. The Raspberry Pi folks said we could do a guest post on our Adafruit BrainCraft HAT & Voice Bonnet, so here we go!

I’ve been engineering up a few Machine Learning devices that work with Raspberry Pi: BrainCraft HAT and the Voice Bonnet!

The idea behind the BrainCraft HAT is to enable you to “craft brains” for machine learning at the edge, with microcontrollers and microcomputers. On ASK AN ENGINEER, we chatted with Pete Warden, the technical lead of the mobile and embedded TensorFlow group on Google’s Brain team, about what would be ideal for a board like this.

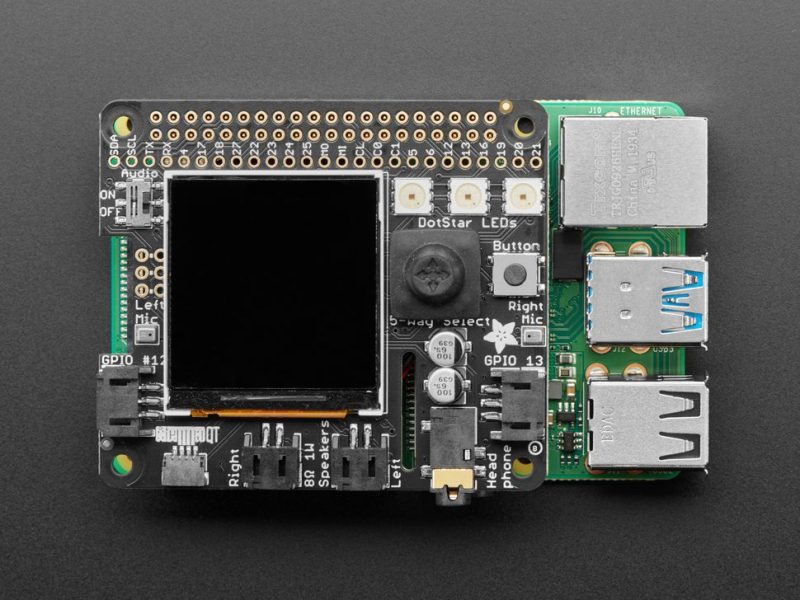

BrainCraft HAT

And here’s what we designed! The BrainCraft HAT has a 240×240 TFT IPS display for inference output, slots for the camera connector cable for imaging projects, a 5-way joystick, a button for UI input, left and right microphones, stereo headphone out, stereo 1 W speaker out, three RGB DotStar LEDs, two 3-pin STEMMA connectors on PWM pins so they can drive NeoPixels or servos, and a Grove/STEMMA/Qwiic I2C port.

This will let people build a wide range of audio/video AI projects while also allowing easy plug-in of sensors and robotics!

A controllable mini fan attaches to the bottom and can be used to keep your Raspberry Pi cool while it’s doing intense AI inference calculations. Most importantly, there’s an on/off switch that will completely disable the audio codec, so that when it’s off, there’s no way it’s listening to you.

Check it out here, and get all the learning guides.

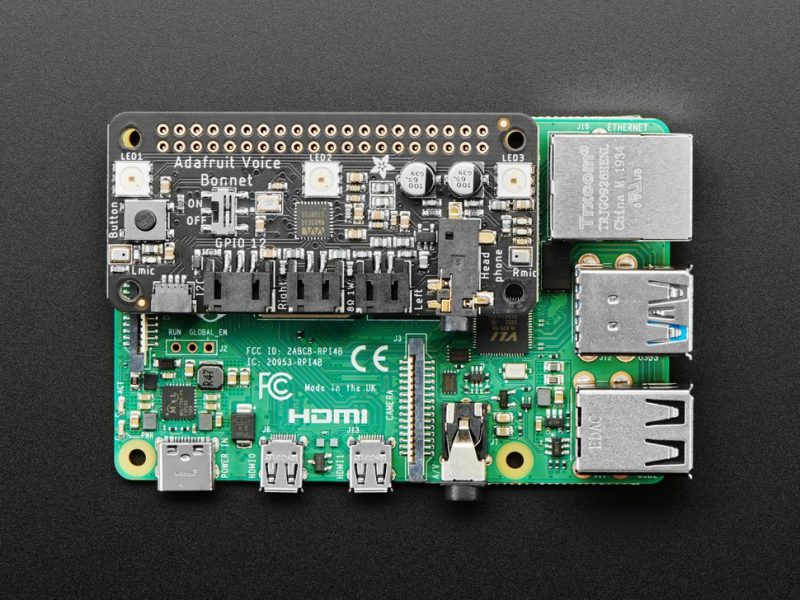

Voice Bonnet

Next up, the Adafruit Voice Bonnet for Raspberry Pi: two speakers plus two mics. Your Raspberry Pi computer is like an electronic brain, and with the Adafruit Voice Bonnet you can give it a mouth and ears as well! Featuring two microphones and two 1 W speaker outputs using a high-quality I2S codec, this Raspberry Pi add-on will work with any Raspberry Pi with a 2×20 GPIO header, from Raspberry Pi Zero up to Raspberry Pi 4 and beyond (basically all models but the very first ones made).

The on-board WM8960 codec uses I2S digital audio for great quality recording and playback, so it sounds a lot better than the headphone jack on Raspberry Pi (or the no-headphone jack on Raspberry Pi Zero). We put ferrite beads and filter capacitors on every input and output to get the best possible performance, and all at a great price.

We specifically designed this bonnet for use when making machine learning projects such as DIY voice assistants. For example, see this guide on creating a DIY Google Assistant.

But you could also build other voice-activated or voice-recognition projects. With two microphones, basic voice position can be detected as well. Check it out here, and see the guides as well!
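To give a feel for how two microphones can hint at where a voice is coming from, here is a minimal sketch of one common approach: cross-correlate the left and right channels to find the time difference of arrival, then convert that delay to a rough angle. This is an illustration, not code from the Adafruit guides. The function names, the 0.06 m microphone spacing, and the 48 kHz sample rate are all assumptions made up for the example, so measure your own board and check your capture settings before relying on the numbers.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second, approximate value at room temperature


def estimate_delay(left, right, max_lag):
    """Return the lag in samples at which `right` best lines up with `left`.

    A positive result means the right channel lags the left one,
    i.e. the sound reached the left microphone first.
    """
    best_lag, best_score = 0, float("-inf")
    n = len(left)
    for lag in range(-max_lag, max_lag + 1):
        # Brute-force cross-correlation at this lag.
        score = 0.0
        for i in range(n):
            j = i + lag
            if 0 <= j < n:
                score += left[i] * right[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def delay_to_angle(lag, sample_rate, mic_spacing_m):
    """Convert a sample lag to an approximate bearing in degrees.

    0 degrees is straight ahead; positive angles lean toward the left mic.
    `mic_spacing_m` is a hypothetical value, not a published board dimension.
    """
    delay_s = lag / sample_rate
    # Clamp so measurement noise cannot push asin() outside its domain.
    ratio = max(-1.0, min(1.0, SPEED_OF_SOUND * delay_s / mic_spacing_m))
    return math.degrees(math.asin(ratio))


if __name__ == "__main__":
    # Synthetic test signal: a short pulse that hits the right mic 3 samples late.
    left = [0.0] * 64
    left[20:23] = [1.0, 0.5, -0.3]
    right = [0.0] * 64
    right[23:26] = [1.0, 0.5, -0.3]

    lag = estimate_delay(left, right, max_lag=10)
    angle = delay_to_angle(lag, sample_rate=48000, mic_spacing_m=0.06)
    print(f"lag = {lag} samples, bearing is roughly {angle:.1f} degrees")
```

With real recordings you would feed in short frames captured from the two channels instead of the synthetic pulse. The brute-force loop is fine for small frames; an FFT-based correlation would be the usual choice for anything longer.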

6 comments

Johan Tibell

I’d love to see a HAT that integrates the Google/coral.ai EdgeTPU. It would need to use the PCIe bus though so I don’t know if that’s possible as a HAT.

Kamaluddin Khan

BrainCraft HAT is unavailable at your site…

Jaydn

Forget robotics, these could be great uses in custom pro studio gear or stage control.

Bonzadog

Why “forget” robotics? This is an important aspect of AI and complex projects.

Skylar Boothby

Hello, I am wondering if there is any prewritten code to connect a camera module to a 128×128 mini OLED. I’m new to all of this so anything helps.

Lucas Morais

Nice! But as Johan mentioned, it would be great to see Raspberry components adding some degree of HW acceleration to ML tasks. These ASICs are very small nowadays and given their great energy/area efficiency, you can really deliver some interesting performance without blowing up constraints on power or manufacturing costs.
