Why Raspberry Pi isn’t vulnerable to Spectre or Meltdown

Over the last couple of days, there has been a lot of discussion about a pair of security vulnerabilities nicknamed Spectre and Meltdown. These affect all modern Intel processors, and (in the case of Spectre) many AMD processors and ARM cores. Spectre allows an attacker to bypass software checks to read data from arbitrary locations in the current address space; Meltdown allows an attacker to read data from arbitrary locations in the operating system kernel’s address space (which should normally be inaccessible to user programs).

Both vulnerabilities exploit performance features (caching and speculative execution) common to many modern processors to leak data via a so-called side-channel attack. Happily, the Raspberry Pi isn’t susceptible to these vulnerabilities, because of the particular ARM cores that we use.

To help us understand why, here’s a little primer on some concepts in modern processor design. We’ll illustrate these concepts using simple programs in Python syntax like this one:

    t = a+b
    u = c+d
    v = e+f
    w = v+g
    x = h+i
    y = j+k

While the processor in your computer doesn’t execute Python directly, the statements here are simple enough that they roughly correspond to a single machine instruction. We’re going to gloss over some details (notably pipelining and register renaming) which are very important to processor designers, but which aren’t necessary to understand how Spectre and Meltdown work.

For a comprehensive description of processor design, and other aspects of modern computer architecture, you can’t do better than Hennessy and Patterson’s classic Computer Architecture: A Quantitative Approach.

What is a scalar processor?

The simplest sort of modern processor executes one instruction per cycle; we call this a scalar processor. Our example above will execute in six cycles on a scalar processor.

Examples of scalar processors include the Intel 486 and the ARM1176 core used in Raspberry Pi 1 and Raspberry Pi Zero.

What is a superscalar processor?

The obvious way to make a scalar processor (or indeed any processor) run faster is to increase its clock speed. However, we soon reach limits of how fast the logic gates inside the processor can be made to run; processor designers therefore began to look for ways to do several things at once.

An in-order superscalar processor examines the incoming stream of instructions and tries to execute more than one at once, in one of several pipelines (pipes for short), subject to dependencies between the instructions. Dependencies are important: you might think that a two-way superscalar processor could just pair up (or dual-issue) the six instructions in our example like this:

    t, u = a+b, c+d
    v, w = e+f, v+g
    x, y = h+i, j+k

But this doesn’t make sense: we have to compute v before we can compute w, so the third and fourth instructions can’t be executed at the same time. Our two-way superscalar processor won’t actually be able to find anything to pair with the third instruction, so our example will execute in four cycles:

    t, u = a+b, c+d
    v = e+f               # second pipe does nothing here
    w, x = v+g, h+i
    y = j+k

Examples of superscalar processors include the Intel Pentium, and the ARM Cortex-A7 and Cortex-A53 cores used in Raspberry Pi 2 and Raspberry Pi 3 respectively. Raspberry Pi 3 has only a 33% higher clock speed than Raspberry Pi 2, but has roughly double the performance: the extra performance is partly a result of Cortex-A53’s ability to dual-issue a broader range of instructions than Cortex-A7.
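To make the cycle counts above concrete, here is a minimal sketch of an in-order issue model in runnable Python. It assumes every instruction takes exactly one cycle and that the only constraint is the data dependencies between instructions; the `PROGRAM` encoding and the function name `in_order_cycles` are our own invention for illustration, not a description of any real core.

```python
# Each instruction is (destination, source operands). The names a..k are
# program inputs, assumed to be available before the first cycle.
PROGRAM = [
    ("t", ("a", "b")),
    ("u", ("c", "d")),
    ("v", ("e", "f")),
    ("w", ("v", "g")),
    ("x", ("h", "i")),
    ("y", ("j", "k")),
]


def in_order_cycles(program, width):
    """Count cycles for an in-order machine that issues up to `width`
    instructions per cycle (assumption: one-cycle latency throughout)."""
    cycles = 0
    position = 0
    while position < len(program):
        written_this_cycle = set()
        issued = 0
        while position < len(program) and issued < width:
            dest, sources = program[position]
            # An in-order machine cannot skip ahead: if the next
            # instruction needs a value produced earlier in this same
            # cycle, the remaining pipes stay empty.
            if any(src in written_this_cycle for src in sources):
                break
            written_this_cycle.add(dest)
            issued += 1
            position += 1
        cycles += 1
    return cycles


print(in_order_cycles(PROGRAM, width=1))  # scalar: 6
print(in_order_cycles(PROGRAM, width=2))  # two-way superscalar: 4
```

The model deliberately ignores pipelining, instruction latencies, and restrictions on which instruction types can be paired, all of which matter on real hardware.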

What is an out-of-order processor?

Going back to our example, we can see that, although we have a dependency between v and w, we have other independent instructions later in the program that we could potentially have used to fill the empty pipe during the second cycle. An out-of-order superscalar processor has the ability to shuffle the order of incoming instructions (again subject to dependencies) in order to keep its pipes busy.

An out-of-order processor might effectively swap the definitions of w and x in our example like this:

    t = a+b
    u = c+d
    v = e+f
    x = h+i
    w = v+g
    y = j+k

allowing it to execute in three cycles:

    t, u = a+b, c+d
    v, x = e+f, h+i
    w, y = v+g, j+k

Examples of out-of-order processors include the Intel Pentium 2 (and most subsequent Intel and AMD x86 processors with the exception of some Atom and Quark devices), and many recent ARM cores, including Cortex-A9, -A15, -A17, and -A57.
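The effect of reordering can be sketched with a small scheduling model in runnable Python. It assumes one-cycle instructions, an unlimited window of instructions to choose from, and no hazards other than true data dependencies (in other words, it glosses over register renaming, as we do throughout). The `PROGRAM` encoding and the name `out_of_order_cycles` are hypothetical, chosen for this illustration.

```python
# Each instruction is (destination, source operands). The names a..k are
# program inputs, assumed to be available before the first cycle.
PROGRAM = [
    ("t", ("a", "b")),
    ("u", ("c", "d")),
    ("v", ("e", "f")),
    ("w", ("v", "g")),
    ("x", ("h", "i")),
    ("y", ("j", "k")),
]


def out_of_order_cycles(program, width):
    """Count cycles when up to `width` ready instructions may issue per
    cycle, in any order (assumption: one-cycle latency throughout)."""
    program_outputs = {dest for dest, _ in program}
    done = set()
    pending = list(program)
    cycles = 0
    while pending:
        # An instruction is ready when every source is either a program
        # input or a result computed in an earlier cycle.
        ready = [
            ins for ins in pending
            if all(s not in program_outputs or s in done for s in ins[1])
        ]
        issued = ready[:width]
        if not issued:
            raise ValueError("circular dependency in program")
        for ins in issued:
            pending.remove(ins)
        done.update(dest for dest, _ in issued)
        cycles += 1
    return cycles


print(out_of_order_cycles(PROGRAM, width=2))  # 3
```

With a width of one the model falls back to six cycles, since reordering alone cannot help a machine with a single pipe.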

What is branch prediction?

Our example above is a straight-line piece of code. Real programs aren’t like this of course: they also contain both forward branches (used to implement conditional operations like if statements), and backward branches (used to implement loops). A branch may be unconditional (always taken), or conditional (taken or not, depending on a computed value); it may be direct (explicitly specifying a target address) or indirect (taking its target address from a register, memory location or the processor stack).

While fetching instructions, a processor may encounter a conditional branch which depends on a value which has yet to be computed. To avoid a stall, it must guess which instruction to fetch next: the next one in memory order (corresponding to an untaken branch), or the one at the branch target (corresponding to a taken branch). A branch predictor helps the processor make an intelligent guess about whether a branch will be taken or not. It does this by gathering statistics about how often particular branches have been taken in the past.

Modern branch predictors are extremely sophisticated, and can generate very accurate predictions. Raspberry Pi 3’s extra performance is partly a result of improvements in branch prediction between Cortex-A7 and Cortex-A53. However, by executing a crafted series of branches, an attacker can mis-train a branch predictor to make poor predictions.
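As an illustration of how gathering statistics and mis-training can work, here is a sketch of a two-bit saturating counter, a classic textbook prediction scheme. We are not claiming that this is the scheme used by any particular ARM or Intel core; the class name, the initial counter value, and the branch address are assumptions made for the example.

```python
class TwoBitPredictor:
    """One saturating counter per branch address.

    Counter values 0 and 1 predict "not taken"; 2 and 3 predict "taken".
    """

    def __init__(self):
        self.counters = {}

    def predict(self, branch_address):
        # Unseen branches start at 1 (weakly not taken): an assumption.
        return self.counters.get(branch_address, 1) >= 2

    def update(self, branch_address, taken):
        counter = self.counters.get(branch_address, 1)
        counter = min(counter + 1, 3) if taken else max(counter - 1, 0)
        self.counters[branch_address] = counter


predictor = TwoBitPredictor()
BRANCH = 0x4000  # hypothetical address of the branch being trained

# Mis-training: arrange for the branch to be taken several times in a row.
for _ in range(4):
    predictor.update(BRANCH, taken=True)

# The predictor now expects "taken", whatever the next outcome really is.
print(predictor.predict(BRANCH))  # True
```

Because the counter saturates, a single untaken branch after training is not enough to flip the prediction, which is what makes this kind of history useful to the processor and to an attacker alike.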

What is speculation?

Reordering sequential instructions is a powerful way to recover more instruction-level parallelism, but as processors become wider (able to triple- or quadruple-issue instructions) it becomes harder to keep all those pipes busy. Modern processors have therefore grown the ability to speculate. Speculative execution lets us issue instructions which might turn out not to be required (because they may be branched over): this keeps a pipe busy (use it or lose it!), and if it turns out that the instruction isn’t executed, we can just throw the result away.

Speculatively executing unnecessary instructions (and the infrastructure required to support speculation and reordering) consumes extra energy, but in many cases this is considered a worthwhile trade-off to obtain extra single-threaded performance. The branch predictor is used to choose the most likely path through the program, maximising the chance that the speculation will pay off.

To demonstrate the benefits of speculation, let’s look at another example:

    t = a+b
    u = t+c
    v = u+d
    if v:
        w = e+f
        x = w+g
        y = x+h

Now we have dependencies from t to u to v, and from w to x to y, so a two-way out-of-order processor without speculation won’t ever be able to fill its second pipe. It spends three cycles computing t, u, and v, after which it knows whether the body of the if statement will execute, in which case it then spends three cycles computing w, x, and y. Assuming the if (implemented by a branch instruction) takes one cycle, our example takes either four cycles (if v turns out to be zero) or seven cycles (if v is non-zero).

If the branch predictor indicates that the body of the if statement is likely to execute, speculation effectively shuffles the program like this:

    t = a+b
    u = t+c
    v = u+d
    w_ = e+f
    x_ = w_+g
    y_ = x_+h
    if v:
        w, x, y = w_, x_, y_

So we now have additional instruction level parallelism to keep our pipes busy:

    t, w_ = a+b, e+f
    u, x_ = t+c, w_+g
    v, y_ = u+d, x_+h
    if v:
        w, x, y = w_, x_, y_

Cycle counting becomes less well defined in speculative out-of-order processors, but the branch and conditional update of w, x, and y are (approximately) free, so our example executes in (approximately) three cycles.
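A quick way to convince ourselves that speculation preserves the meaning of the program is to run both forms and compare the results. The sketch below wraps the original and the speculative versions in two functions; the function names and the choice of zero as the starting value of w, x, and y are our own assumptions for the purpose of the comparison.

```python
def run_in_order(a, b, c, d, e, f, g, h, w=0, x=0, y=0):
    """The original program: the body runs only once v is known."""
    t = a + b
    u = t + c
    v = u + d
    if v:
        w = e + f
        x = w + g
        y = x + h
    return v, w, x, y


def run_speculatively(a, b, c, d, e, f, g, h, w=0, x=0, y=0):
    """The shuffled program: each tuple assignment stands for one cycle
    on a two-way machine, and w_, x_, y_ hold uncommitted results."""
    t, w_ = a + b, e + f
    u, x_ = t + c, w_ + g
    v, y_ = u + d, x_ + h
    if v:
        w, x, y = w_, x_, y_  # commit the speculative results
    return v, w, x, y


taken = (1, 2, 3, 4, 5, 6, 7, 8)       # v is non-zero
not_taken = (1, -1, 0, 0, 5, 6, 7, 8)  # v is zero

print(run_in_order(*taken) == run_speculatively(*taken))          # True
print(run_in_order(*not_taken) == run_speculatively(*not_taken))  # True
```

In both cases the committed values agree; the only difference is the work done (and, on real hardware, the side effects left behind) when the speculation turns out to be unnecessary.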

What is a cache?

In the good old days*, the speed of processors was well matched with the speed of memory access. My BBC Micro, with its 2MHz 6502, could execute an instruction roughly every 2µs (microseconds), and had a memory cycle time of 0.25µs. Over the ensuing 35 years, processors have become very much faster, but memory only modestly so: a single Cortex-A53 in a Raspberry Pi 3 can execute an instruction roughly every 0.5ns (nanoseconds), but can take up to 100ns to access main memory.

At first glance, this sounds like a disaster: every time we access memory, we’ll end up waiting for 100ns to get the result back. In this case, this example:

    a = mem[0]
    b = mem[1]

would take 200ns.

However, in practice, programs tend to access memory in relatively predictable ways, exhibiting both temporal locality (if I access a location, I’m likely to access it again soon) and spatial locality (if I access a location, I’m likely to access a nearby location soon). Caching takes advantage of these properties to reduce the average cost of access to memory.

A cache is a small on-chip memory, close to the processor, which stores copies of the contents of recently used locations (and their neighbours), so that they are quickly available on subsequent accesses. With caching, the example above will execute in a little over 100ns:

    a = mem[0]    # 100ns delay, copies mem[0:15] into cache
    b = mem[1]    # mem[1] is in the cache

From the point of view of Spectre and Meltdown, the important point is that if you can time how long a memory access takes, you can determine whether the address you accessed was in the cache (short time) or not (long time).
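The timing difference can be modelled with a toy cache in a few lines of Python. This is a simulation with made-up numbers, not a measurement: we assume 16 locations per cache line (reading mem[0:15] above as locations 0 to 15 inclusive), the 100ns main-memory latency used above, and an illustrative 1ns hit latency. The class name `ToyCache` is hypothetical.

```python
LINE_SIZE = 16  # locations per cache line (assumption)
MISS_NS = 100   # main-memory latency from the example above
HIT_NS = 1      # assumed cache-hit latency, for illustration only


class ToyCache:
    """Remembers which lines have been touched; no eviction is modelled."""

    def __init__(self):
        self.lines = set()

    def flush(self):
        self.lines.clear()

    def access(self, address):
        """Return the simulated time in ns for a read of `address`."""
        line = address // LINE_SIZE
        if line in self.lines:
            return HIT_NS
        self.lines.add(line)  # a miss brings in the whole line
        return MISS_NS


cache = ToyCache()
total = cache.access(0) + cache.access(1)
print(total)  # 101: a little over 100ns

# Timing a later access reveals whether its line was already cached.
print(cache.access(15))  # 1: same line as address 0, so a hit
print(cache.access(16))  # 100: a different line, so a miss
```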

What is a side channel?

From Wikipedia:

“… a side-channel attack is any attack based on information gained from the physical implementation of a cryptosystem, rather than brute force or theoretical weaknesses in the algorithms (compare cryptanalysis). For example, timing information, power consumption, electromagnetic leaks or even sound can provide an extra source of information, which can be exploited to break the system.”

Spectre and Meltdown are side-channel attacks which deduce the contents of a memory location which should not normally be accessible by using timing to observe whether another, accessible, location is present in the cache.

Putting it all together

Now let’s look at how speculation and caching combine to permit a Meltdown-like attack on our processor. Consider the following example, which is a user program that sometimes reads from an illegal (kernel) address, resulting in a fault (crash):

    t = a+b
    u = t+c
    v = u+d
    if v:
        w = kern_mem[address]   # if we get here, fault
        x = w&0x100
        y = user_mem[x]

Now, provided we can train the branch predictor to believe that v is likely to be non-zero, our out-of-order two-way superscalar processor shuffles the program like this:

    t, w_ = a+b, kern_mem[address]
    u, x_ = t+c, w_&0x100
    v, y_ = u+d, user_mem[x_]

    if v:
        # fault
        w, x, y = w_, x_, y_      # we never get here

Even though the processor always speculatively reads from the kernel address, it must defer the resulting fault until it knows that v was non-zero. On the face of it, this feels safe because either:

– v is zero, so the result of the illegal read isn’t committed to w

– v is non-zero, but the fault occurs before the read is committed to w

However, suppose we flush our cache before executing the code, and arrange a, b, c, and d so that v is actually zero. Now, the speculative read in the third cycle:

v, y_ = u+d, user_mem[x_]

will access either userland address 0x000 or address 0x100 depending on the eighth bit of the result of the illegal read, loading that address and its neighbours into the cache. Because v is zero, the results of the speculative instructions will be discarded, and execution will continue. If we time a subsequent access to one of those addresses, we can determine which address is in the cache. Congratulations: you’ve just read a single bit from the kernel’s address space!

The real Meltdown exploit is substantially more complex than this (notably, to avoid having to mis-train the branch predictor, the authors prefer to execute the illegal read unconditionally and handle the resulting exception), but the principle is the same. Spectre uses a similar approach to subvert software array bounds checks.
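The whole sequence can be acted out in a purely simulated form. The sketch below models the cache as a set of touched lines and the speculative instructions as ordinary Python statements whose results are simply thrown away; it touches no real kernel memory and is not an exploit. The latencies, the line size, and all of the names (`ToyCache`, `shuffled_program`, `leak_bit`) are assumptions made for illustration.

```python
LINE_SIZE = 16             # locations per cache line (assumption)
MISS_NS, HIT_NS = 100, 1   # assumed latencies, for illustration only


class ToyCache:
    """Remembers which lines have been touched; no eviction is modelled."""

    def __init__(self):
        self.lines = set()

    def flush(self):
        self.lines.clear()

    def access(self, address):
        line = address // LINE_SIZE
        if line in self.lines:
            return HIT_NS
        self.lines.add(line)
        return MISS_NS


def shuffled_program(cache, kernel_value, v):
    """Model of the shuffled program: the loads run speculatively and
    their results are committed only if v is non-zero."""
    w_ = kernel_value   # stands in for the read of kern_mem[address]
    x_ = w_ & 0x100
    cache.access(x_)    # speculative read of user_mem[x_]
    if v:
        raise PermissionError("fault: illegal read of kernel memory")
    # v is zero: w_ and x_ are discarded, but the cache line touched by
    # the speculative load stays resident.


def leak_bit(kernel_value):
    cache = ToyCache()
    cache.flush()
    shuffled_program(cache, kernel_value, v=0)
    # Time a read of user_mem[0x100]: a fast read means the bit was set.
    return 1 if cache.access(0x100) == HIT_NS else 0


print(leak_bit(0xabde3167))  # 1
print(leak_bit(0xabde3067))  # 0
```

The attacker never sees `w_` directly: the only thing that crosses the boundary is the timing of the final read, which is why this counts as a side channel.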

Conclusion

Modern processors go to great lengths to preserve the abstraction that they are in-order scalar machines that access memory directly, while in fact using a host of techniques including caching, instruction reordering, and speculation to deliver much higher performance than a simple processor could hope to achieve. Meltdown and Spectre are examples of what happens when we reason about security in the context of that abstraction, and then encounter minor discrepancies between the abstraction and reality.

The lack of speculation in the ARM1176, Cortex-A7, and Cortex-A53 cores used in Raspberry Pi renders us immune to attacks of this sort.

* days may not be that old, or that good

180 comments

Tim

Great news, thank you!

Tobias Huebner

Having read this, I feel smarter. Didn’t really understand it, but I feel smarter.

Alex Bate

I 100% agree with this statement.

Ameer Razmi

I second that. Truly made me feel smarter when reading those.

Mavis

Same here. And feeling smarter is all that really matters, r-right guys? :’)

Pete

Thanks, great explanation and I feel confident Raspberry Pi’s are tough little computers.

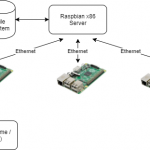

If I am using Raspbian x86 desktop on Oracle VirtualBox, does the virtual CPU have the exploit, or is it protected by the host OS? I am assuming the host hardware may have the exploit patched.

MW

That is not relevant to ARM CPU of Raspberry Pi

Joshua Barretto

A virtual Raspberry Pi is vulnerable because the problem is dependent on the underlying chip. Besides, the emulator likely doesn’t emulate the RPi’s CPU down to that level of detail: it doesn’t need to.

Niall

If you’re using an emulated CPU, I imagine you’re safe — after all, implementing complicated parallelism in software will only serve to slow the program down, and hardware parallelism is intended to make software run faster.

HOWEVER…

I don’t believe Virtualbox does any processor emulation at all — it simply mediates between the host operating system and the guest environments, but passes through x86 commands to the host x86 processor.

The big issue with Spectre and Meltdown is that they can actually break out of a virtual machine and access system memory for the host.

Jon

> I don’t believe Virtualbox does any processor emulation at all

VirtualBox has a dynamic recompiler borrowed from QEMU that it uses when it can’t virtualize.

It’s pretty much just used to emulate real mode and protected mode (ie. 16-bit) software. If it didn’t do this, it wouldn’t be possible to run VMs that use BIOS or DOS machines (including NTVDM on modern 32-bit Windows) under a 64-bit host.

Martin Bonner

That is an *excellent* summary of how Spectre and Meltdown work. (The fact that the Pi is immune is just a bonus).

Shelley Powers

Wow. Silver lining related to Meltdown and Spectre: I’m learning a whole lot more about how processors work.

Antz

Eben, could you please clarify how this A53 CPU feature is different from the one that is exploitable by Spectre?

http://infocenter.arm.com/help/topic/com.arm.doc.ddi0500g/CHDGIAHH.html

Eben Upton

That link refers to speculative fetches of instructions, as opposed to speculative execution. The former is much more common than the latter, as without it the processor will frequently stall waiting for instructions from memory, crippling performance.

Why don’t speculative instruction (and data) fetches introduce a vulnerability? Because unlike speculative execution they don’t lead to a separation between a read instruction and the process (whether a hardware page fault or a software bounds check) that determines whether that read instruction is allowed.

Antz

Awesome, thank you!

Canis Lupus

Eben, may I disagree that the speculative fetch does not cause a vulnerability?

Input:

kernel address KA

Variables:

X:=0

Step 1:

Create a table of 256 branch instructions. All but offset X jump to address A, and offset X is a branch to address B.

Flush the caches.

Step 2:

Prepare an exception handler to catch the protection violation.

Step 3:

Load KA in Rn. Load 0 in Rm.

Invoke the instruction

TBB [Rn, Rm]

just before the table from step 1.

Step 4:

In the exception handler from step 2 check whether a cache hit is achieved at table offset X.

If yes, the byte at address [KA] is X.

If not, increment X, and repeat steps 1-4.

Jeremy

+1

A well written and easy to understand introduction to some aspects of modern CPU design (add some more about instruction fusion and the crucial register renaming) and it should be permanently published in the education section.

Shane Johnson

Excellent write-up, Eben. Thank you.

Jeff Schoby

I’m still a little unclear on how knowing what memory address is in the processor cache gets us actual data from memory.

Eben Upton

Imagine the value at the kernel address, which gets loaded into _w, was 0xabde3167. Then the value of _x is 0x100, and address user_mem[0x100] will end up in the cache. A subsequent load of user_mem[0x100] will be fast.

Now imagine the value at the kernel address, which gets loaded into _w, was 0xabde3067. Then the value of _x is 0x000, and address user_mem[0x000] will end up in the cache. A subsequent load of user_mem[0x100] will be slow.

So we can use the speed of a read from user_mem[0x100] to discriminate between the two options. Information has leaked, via a side channel, from kernel to user.

Sam

I still don’t get the *depending on the eighth bit of the result of the illegal read* & *you’ve just read a single bit from the kernel’s address space* part of this and other articles. Why 8th bit ? Is that the privilege bit in L1$ ? How does this process leak just 1 bit and not a byte/word/etc ?

Martijn

The “8th bit” comes from the x_ = w_&0x100 instruction. This is a mask-instruction:

– if the 8th bit in w_ is 1, then x_ = 100.

– if the 8th bit in w_ is 0, then x_ = 000.

The subsequent read of user_mem[x_] causes either address 100 or address 000 to be brought into the cache, depending on whether the 8th bit in w_ is 1 or 0. By reading address 100 again and measuring how long it takes, you can determine whether 100 or 000 was brought into the cache.

Sam

Actually Eben should add a footnote in this excellent article stating that 8th bit in 0x100 is 1 from programmer’s PoV when starting from 0 and not in layman’s PoV who would consider the least significant bit to be at position 1. That was the bit in the article which threw me off.

Evan

In this particular example, you know whether the eighth bit of a particular kernel address is 1 or 0. You can use the exact same principle to leak any other bit of an address, so you can do this eight times with different operands to & to get an entire byte. Do it another eight times and you can read the entire byte at the next address, and so on. It’s slow, but you can eventually read out the entire kernel address space that way, which would potentially allow you to compromise the operating system.

Naveen Michaud-Agrawal

It might be easier to see Eben’s example in binary instead of hex (and we’ll use a 16-bit architecture to make it easier to see):

value in _w: 0x3167 in binary is 0b0011000101100111

0x0100 in binary is 0b0000000100000000

So if you AND them together you get: 0b0000000100000000, which tells you the 8th bit. And then in subsequent code, you AND against other single bit values, thereby being able to read out arbitrary amounts of kernel memory.
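The masking described in these replies can be checked in a few lines of Python. This is only a sketch of the bit arithmetic: `read_bit` is a hypothetical stand-in for one complete run of the attack, and here it simply inspects a value we already know rather than measuring any cache timing.

```python
def read_bit(secret, bit):
    """Stand-in for one run of the attack with mask 1 << bit. A real
    attack would learn this single bit from a timing measurement."""
    mask = 1 << bit
    return 1 if secret & mask else 0


def recover(secret, width=16):
    """Rebuild a `width`-bit value one bit at a time."""
    value = 0
    for bit in range(width):
        value |= read_bit(secret, bit) << bit
    return value


print(format(0x3167, "016b"))           # 0011000101100111
print(format(0x0100, "016b"))           # 0000000100000000
print(format(0x3167 & 0x0100, "016b"))  # 0000000100000000
print(hex(recover(0x3167)))             # 0x3167
```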

D

I read the stuff below and it makes sense to me, but now my question is: Why would the kernel allow w_ to read from its address space? Shouldn’t the kernel give a fault because of a permission error?

Ralph Little

No, because the illegal read was done speculatively.

The CPU should only generate a fault for what the program actually does, not what it could theoretically do in the future. In fact the code was arranged so that the branch wouldn’t ever happen in practice so it should never generate a fault.

Omen

I think the whole story is extremely exaggerated. Like global warming (the continental glacier melted 10.000 years ago). Because you can illegally move from the memory to the cache only 3 neighbouring bytes (the bus has is bytes wide) – not a larger block and definitely not an arbitrary one. In the Windows environment it is far easier to read the whole memory by other means. So it is definitely not a failure of Intel but a failure of the OS vendors. But it seems that someone is trying hard to damage Intel – like years ago I.M with the old FPP bug …

AdithyaG

Thanks for the great post,Eben. However, I’m not clear what exactly _u,_v,_w represent, as they seem to have come out of thin air. I see that _w holds the content of the target kernel memory. So, why digress and rely on side-channel attacks to extract the data, that is already stored at w_?

jedi34567

I believe the _u, _v, _w, etc. represent the ‘speculative’ state of the registers that don’t get committed (‘retired’ is the actual term) to the real registers until the processor knows for certain whether the branch is taken or not. Basically, the processor sets up virtual execution pipelines to pre-execute the different paths of the program, then only ‘retires’ one of the results depending on the actual execution path that is taken.

Mario Giammarco

It is called register renaming: _u,_x,_w are another registers that out of order cpus have to keep temporary results. Basically you think to have 16 registers but cpu probably has 64. One official set and other hidden sets for temporary computations. At the end if computation is not discarded the cpu renames _u to u avoiding a copy

Janghou

In `w&0x100`

0x100 stands for a byte literal

and & for a `bitwise and` operator:

w & 0x100

if I’m not mistaken.

mr3

‘0x’ is shorthand for hex, so 0x100 should be hex 100 which is 2-byte (0x10 would be 1-byte)

Patches

@Pete: The Raspberry Pi computer itself is invulnerable to the bug. The Intel or AMD-based computer you are running Raspbian on is not.

So if you are running Raspbian x86 you will need to install patches in both your Raspbian guest operating system and your host operating system to be safe from the vulnerability.

MW

That is so wrong Raspbian Operating System only runs on ARM CPU.

The x86 version is Debian x86-32….

This post is about the ARM CPU of the Raspberry Pis

Peter Dolkens

It’s not wrong at all – he’s responding to the comment that specifically asked about virtualized Raspbian – aka Raspbian running on x86.

don isenstadt

Eben .. thanks so much for this … I read through it once but will reread to hopefully understand it better .. It is nice to get education instead of hysteria! If you were willing to pay with performance to get security could you simply turn off speculation? Is that what the news was referring to when they say the fix will cause a 30% degradation in performance?

Maybe we should have raspberry pi terminals communicating to IBM Z mainframes!

Thanks again!

-don

Evan Hildreth

> If you were willing to pay with performance to get security could you simply turn off speculation? Is that what the news was referring to when they say the fix will cause a 30% degradation in performance?

From what I understand (so I could be wrong!), the 30% degradation comes from additional checks added at the operating system level to make sure there are no security leaks. This particularly surrounds programs that read and write a lot of files to the disk.

Normally, this works by:

– Program asks OS for file

– OS reads file into memory

– Program reads file from memory

This context-switching (from the program to the operating system and back again) is computationally expensive, so modern processors have–at a very low level–blended the two contexts. From what I’ve gathered, the “fix” for this is to have the OS perform extra checks to make sure no cached data is being leaked. For some programs, it’s a negligible difference (Apple is claiming no noticeable difference for most of their customers); other programs like databases, however, will probably see all of that 30% drop.

I hope this helps. I also hope this was right!

Mark Woodward

Turning off “speculation” is not possible in software. Maybe Intel could implement that in microcode and issue an update, but that is a far more complicated discussion.

The performance hit comes from the Linux kernel mapping and unmapping the kernel. Currently, process memory is divided in two: the low half is process space (unique to each process), the upper half is kernel space (shared with all processes). The processor is supposed to protect the kernel memory, but these hardware bugs break that protection.

The fix is therefore to map the kernel space on entry to a kernel call and unmap it upon return to the process. This can be a time-consuming set of operations.

Eben Upton

One almost wishes that they’d stuck with the original name for the KPTI patchset: Forcefully Unmap Complete Kernel With Interrupt Trampolines.

https://www.theregister.co.uk/2018/01/02/intel_cpu_design_flaw/

Bill Stephenson

That is hilarious!!!!

Omen

In Von Neumann architecture (Intel x86) it is impossible to do: “These KPTI patches move the kernel into a completely separate address space” :))) Because the shared common memory is the main difference against the Harvard Architecture (ARM and old Intel microcontrollers like 8051) …

But you could move the kernel to another PC and pull the plug ;)

Eben Upton

I believe the performance degradation projections (which are on the order of 5% for most real benchmarks) are based on the cost of adding Kernel Page Table Isolation to the Linux kernel.

Disabling speculation, even if possible, would have a much larger impact.

Kirn Gill II

The 30% penalty is for extra things done to modify the process memory layout in order to prevent these attacks.

Normally, the kernel’s own memory is mapped into the process space of a user-mode process, just with memory permission flags set so that the user-mode process isn’t able to (normally) read into kernel memory. When a system call (e.g. read a file) is made, the CPU switches to kernel mode, and simply changes the permission on the memory so that the kernel can access itself.

However, because the kernel is still mapped into process memory, timing attacks like this can be used to slowly pry information out of it.

The fix, on the other hand, is to remove the kernel from process memory. The performance hit comes from the system call handler now having to map the kernel into the process memory at the start of the call and then unmap it again at the end, extra work which was not previously done.

XLWiz2k

Hi guys! Sorry but I fail to understand how loading on demand/unloading after use the mem pages would protect the attack from happening? as far as I understood, the side-channel attack happens WHILE the mem is available to the speculative engine, and does nothing to do with the mem contents themselves, rather to the cache properties (access speed) – or I got it all wrong? ;-P

Mario Giammarco

Turning off speculations is not possible because it has a terrible impact on performance… guess what is the difference between an intel core and an intel atom…

Shannon

This is the best “tutorial” I have seen on this subject. The side effect of this attack has been a better awareness of modern processor architecture. It is unfortunate that this had to happen to get folks to draw back the curtain on this, instead of keeping the pretense of everything being scalar and in order. It does matter in many more instances than people think.

Thank you for the awesome lesson in processor technology.

Luyanda Gcabo

Thanks for this. Even though you mention that the real exploit is more complex, this gives the context. I feel like I could read more and more on this topic.

Happy New Year*

Eben Upton

Happy New Year to you too.

styfle

Thanks for the excellent explanation!

I wonder, can a python program actually exploit this bug?

And how can you reliably “time a subsequent access to one of those addresses”?

Eben Upton

You really need to be down at the machine-language level to manipulate this (and to be able to do unchecked pointer arithmetic).

I’m not particularly au fait with Intel high-performance timing. If you’re in ring 0 (the kernel) you can probably use the performance counters:

https://www.blackhat.com/docs/us-15/materials/us-15-Herath-These-Are-Not-Your-Grand-Daddys-CPU-Performance-Counters-CPU-Hardware-Performance-Counters-For-Security.pdf

I can imagine that in userland you may need to loop the attack to get enough signal-to-noise. There’s some discussion in the Spectre paper:

https://spectreattack.com/spectre.pdf

about incrementing a counter on another thread to generate a sufficiently accurate time reference.

Dave Jones

As a point of interest Python 3.3+ does have time.perf_counter() which is meant to be high resolution. Whether that actually queries HPET or not (on a PC) I can’t recall but the info’s probably buried somewhere in PEP-418 (https://www.python.org/dev/peps/pep-0418/). Also unchecked integer arithmetic is possible by abusing certain things (e.g. ctypes).

That said, I’m sure Eben’s right about needing to be closer to machine code. The overhead of the CPython interpreter and the GC are probably sufficient to make it either outright impossible, or at least extremely difficult, to implement in pure Python (i.e. without resorting to some externally compiled module).
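For the curious, the standard library can report what backs `time.perf_counter()` on a given machine, and `time.perf_counter_ns()` (available from Python 3.7) returns integer nanoseconds. The sketch below only shows how one might take such timings from Python; the buffer and the helper name `time_access` are made up for the example, and interpreter overhead will dominate the numbers, so it is not a working cache-timing probe.

```python
import time

# Ask Python which underlying clock perf_counter uses on this platform.
info = time.get_clock_info("perf_counter")
print(info.implementation, info.resolution)


def time_access(buffer, index):
    """Return the elapsed nanoseconds around a single indexed read.
    Most of this time is interpreter overhead, not memory latency."""
    start = time.perf_counter_ns()
    _ = buffer[index]
    return time.perf_counter_ns() - start


buffer = bytearray(4096)
samples = [time_access(buffer, 0) for _ in range(1000)]
print(min(samples), max(samples))
```

The spread between the smallest and largest sample gives a feel for how noisy such measurements are from inside the interpreter.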

Caroline

Thank you for the incredible post! I understand so much more now.

Allison Reinheimer Moore

This is fantastically friendly and clear. Thanks so much for the accessible explanation! I’m much less confused than I was before.

Rohit

Thanks for the wonderfully explained post, Eben. You’ve explained a complex concept in a really simple manner.

Jamie

So did you have an Archimedes too, or did you defect to Amiga? =)

Eben Upton

I defected to the Amiga: a shop-soiled A600, for £200 just after Christmas 1992. Couldn’t afford an Archimedes, though I drooled over the single unit my school could afford.

Feels good to hold the record for shipping the largest number of units of Archimedes-compatible hardware.

z

For Christ’s sake, why should a userspace program ever “flush the cache”?

Eben Upton

Are you asking why a userspace program should be allowed access to a cache flush primitive?

Kirn Gill II

I think that’s what’s being asked here, or more like: What useful purpose would it serve for a user-mode process to flush the cache?

James T. Carver

This might be good except for the fact that Arm themselves stated that those devices are affected by the “bug”, if one could really call it that.

Eben Upton

[citation needed]

Helen Lynn

Arm’s statement lists the processors affected, which don’t include those used in Raspberry Pis. As that statement says, “[o]nly affected cores are listed, all other Arm cores are NOT affected”.

Louis Parkerson

You are a mind reader! I was thinking about this problem earlier and I was about to ask it on the forums then this long and helpful post pops up.

James Wright

Thank you for a fantastic explanation, it should be preserved somewhere for educational purposes!

Perhaps we should look at far simpler CPU designs more seriously as they say, “complexity kills”. SUBLEQ anyone? :-)

All this talk of scalar vs superscalar takes me back to the day I got my 68060 (a superscalar CPU) expansion board for my Amiga 1200 and overclocked it from 50MHz to 66MHz by simply soldering on a different clock crystal! :-)

PS: Love the Raspberry Pi, it’s really put the fun back into computing, keep up the great work!

Eben Upton

I was a 68000 junkie for three years in the early 90s. Beautiful architecture: in a more just world it, or its descendants, would have won out.

James Wright

I learnt assembly on a 68000 (Amiga) in the early 90’s, imagine my horror when I moved to x86! :-)

Eben Upton

Sadly I do not need to use my imagination, having followed a similar road myself.

Travis Johnson

Thanks for the article!

The Cortex A53 boasts an “Advanced Branch Predictor” which I assumed to mean it supports speculative execution. If the processor isn’t using the branch prediction to pre-execute instructions is it using it for instruction re-ordering? What’s the point of branch prediction without speculative execution of the predicted branch?

Eben Upton

A branch predictor, and branch target buffer, are useful even without speculative execution because they give you a hint about which instructions to admit to the pipeline next while you wait for the branch condition to resolve.

Cortex-A53 isn’t capable of “real” speculative execution because it can’t stash the results of instructions which are started speculatively. This means that the pipeline bogs down quite fast if resolution of the branch condition is significantly delayed, and critically the chained dependent memory accesses that both attacks rely on to modify cache state can never happen.

Perhaps I do need to write about register renaming: I’d been hoping to avoid that.

Daniel

Then why do Cortex-A53 and Cortex-A7 implement PMU event 0x10? It counts the number of “mispredicted or not predicted branches speculatively executed”. I doubt ARM implemented it to always return zero.

Kirn Gill II

Software compatibility with ARM processors possessing speculative execution?

I’d imagine that it’s easier to implement it as an “always zero” than to require OS developers to have to code around a single missing PMU event.

Dan Huby

I read the CPU technical manual and the only section on branch prediction I could find referred to preemptively loading the set of instructions in the branch, but not executing them.

Dan Huby

Sorry, it looks like Eben replied while I was typing!

solar3000

Eben doesn’t type. He has a pi glued to his brain via GPIO.

jdb

There’s an important difference between branch prediction and speculative execution.

Branch prediction guesses what *instructions* are likely to be executed next. Speculative execution precomputes the *results* of the instructions on both sides of the branch, before deciding the path that the branch took and discarding (rather than retiring) the results of the non-executed instructions.

The branch predictor’s job is to keep the instruction pipelines in an in-order core full by guessing the most likely instruction flow after a branch instruction. It does this by storing and comparing the results of previous branch instructions and by using certain architectural hints, like predicting a forwards branch to be not-taken and a backwards branch to be taken.

The branch predictor in an in-order core only affects the instruction cache, by predicting and speculatively fetching what instructions need to be in the Icache ahead of time. The vast majority of modern processors (ARM1176 included) have split instruction and data caches at the innermost level, so a data cache timing attack will not reveal anything about the direction the branch predictor took. Additionally, fooling a branch predictor into speculatively fetching something that is not an instruction will not work – page table structures have dedicated bits that specify whether a particular memory page contains instructions or data (see the NX bit for x86), and fetching instructions from data pages will almost certainly result in an access violation.
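As a rough illustration of the history-plus-hint scheme described above, here is a minimal sketch of a toy predictor. It assumes a 2-bit saturating counter per branch, which is a common textbook design and not necessarily what any particular ARM core implements; the class name and addresses are made up for the example.

```python
class TwoBitPredictor:
    """Toy branch predictor: one 2-bit saturating counter per branch address."""

    def __init__(self):
        self.counters = {}  # branch address -> counter in 0..3

    def predict(self, branch_addr, target_addr):
        counter = self.counters.get(branch_addr)
        if counter is None:
            # No history yet: fall back to the static hint,
            # backwards branch (a loop) taken, forwards branch not taken.
            return target_addr < branch_addr
        return counter >= 2

    def update(self, branch_addr, taken):
        # Unseen branches start in a weak state matching the first outcome.
        counter = self.counters.get(branch_addr, 2 if taken else 1)
        if taken:
            counter = min(3, counter + 1)
        else:
            counter = max(0, counter - 1)
        self.counters[branch_addr] = counter


predictor = TwoBitPredictor()
# A loop branch at 0x40 jumping back to 0x10: taken nine times, then falls through.
outcomes = [True] * 9 + [False]
correct = 0
for taken in outcomes:
    if predictor.predict(0x40, 0x10) == taken:
        correct += 1
    predictor.update(0x40, taken)
print(correct, "of", len(outcomes), "predicted correctly")
```

In this toy run only the final loop exit is mispredicted, which is the kind of behaviour that keeps an in-order pipeline fed without executing anything speculatively.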

Travis Johnson

Thanks for the replies. Makes total sense.

Tomm

I hope the Pi that will be released in 2019 (speculation?) also continues using the ARM Cortex-A53 :)

Eben Upton

:)

Silviu

Please, Eben, can the CPU + GPU for Raspberry Pi 4 be done on 14nm FinFET technology – it would reduce heat and increase performance. I would pay the extra money required for that if I would know it’s 14nm. I would save time and portable electricity in my projects. And thank you for everything.

Pete Stevens

I’m sure if you send a cheque for around $100m or so they’ll get it sorted. The second one should only be $35 :-)

Silviu

Maybe crowdfunding on this blog, $1 from 100 million people, would work :) or $10 from 10 million people. There are 7 billion people on Earth and at least 20 percent are willing and able to do something good for $1 online.

solar3000

Holy! Eben is awesome! Met him at a Maker Faire in NYC.

And Liz too!

Beckalooo

Woah. I understood that and was able to follow it to the end of the article!

Thanks, Eben, you are a fine writer.

B

LH

From what I read, AMD seems to reject that their chips are affected by Meltdown. Does this mean that AMD chips don’t implement speculative execution? Can’t imagine that however..

Eben Upton

It’s perfectly possible to implement an out-of-order core with speculation that isn’t vulnerable to Meltdown. For example, of ARM’s out-of-order cores, only Cortex-A75 is vulnerable. Intel cores are vulnerable because of a design choice not to prevent speculative loads from illegal addresses, but instead to rely on a delayed fault (or instruction non-retire) to suppress the result.

Kaitain

Ah! This is exactly what I was wondering about while reading the article. (“But why is the illegal fetch allowed at all in the first place?”) It seems to me like a reasonable thing to do to fault if someone has written code with an illegal instruction *even if in practice the branch with that instruction is never officially executed*.

Ed

You can’t fault just because a speculative instruction is invalid. Think of this simple pattern that’s used everywhere in C/C++ code:

if (pointer != NULL)
    pointer->data = value;

Check if you have a valid memory address, and if so, do something with it. If you throw a fault based on speculative instructions, you’ll be faulting constantly on code like that.

(Implementation details: NULL is zero. Memory addresses at or close to zero are always marked invalid in the page table, and trigger a fault when accessed. This is done so that a lot of bad code will crash immediately instead of writing garbage over real data.)

Kaitain

Okay, so:

> Intel cores are vulnerable because of a design choice not to prevent speculative loads from illegal addresses

If the other design choice had been taken, what would it have looked like?

Mario Giammarco

Yes, you are both right, but this one is the Meltdown breach, which exploits an Intel flaw (checking permissions AFTER executing instructions).

Spectre exploits array bounds checks, and for that one all CPUs are affected (there are two versions of Spectre, btw).

SpeculaArrg

Shouldn’t the susceptibility to Meltdown be implementation-specific as well as model-specific? Or is validating permissions on memory access before committing, rather than before loading, part of the specified Cortex-A75 microarchitecture?

LH

Hi Eben,

If I understand your reply correctly, that means that in your example above:

t, w_ = a+b, kern_mem[address]
u, x_ = t+c, w_&0x100
v, y_ = u+d, user_mem[x_]

if v:
    # fault
    w, x, y = w_, x_, y_ # we never get here

The Intel implementation will just let the code run until the last line and generate the fault there, while other speculative execution implementations will generate the fault earlier, for example at the first line, as soon as the first attempt to access kernel memory is executed. Is this correct?

mjr

Couldn’t they just zero out the value on a faulty read? ANDing the data with ~fault bit, would seem pretty quick and cheap.

pjt

Good writing. Thanks!

Richard Collins

I think I understand. Please correct if this is wrong.

So you’re saying:

You have an if that will equate to false that tries to read from kernel memory. (if you did read this memory, it’ll raise an exception)

You ensure the cache is flushed so that when the CPU speculatively executes the read from kernel memory the value will be in the cache.

The if is then checked and is found to be false and so an exception is not fired as the CPU pretends it was not executed.

Now the memory read from the kernel is in the cache (because of the speculative execution) and is in the same place that our user space memory would have been because of how the cache is aliased against the whole address space. And this is what allows you to read it????

Kaitain

It’s a bit more complex than that. The memory from the kernel is not loaded into the cache. However, a section of (legal) user memory is loaded into the cache whose address is based in part on a tiny piece of the (illegal) kernel memory, in an operation that officially never happened but whose cache fetch has been left as a side-effect. By attempting to read that legal memory in a subsequent legal operation, and timing how long it takes, you can reason backwards to what that tiny piece of kernel memory was that you were never supposed to have been able to access. You can’t read it directly, but you can infer its value from the side-effects of the phantom operation (the speculative fetch).
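To make the "reason backwards from timing" step concrete, here is a small simulation. It is only a sketch under stated assumptions: the cache is a toy model (a set of line numbers), the access costs are invented, and `phantom_operation` merely stands in for the speculative sequence; nothing here touches real hardware or kernel memory.

```python
CACHE_LINE = 0x100      # toy assumption: one cache line per 0x100 bytes
FAST, SLOW = 1, 100     # made-up access costs in "cycles"


class ToyCache:
    def __init__(self):
        self.lines = set()

    def flush(self):
        self.lines.clear()

    def access(self, address):
        """Return the simulated access time and leave the line cached."""
        line = address // CACHE_LINE
        cost = FAST if line in self.lines else SLOW
        self.lines.add(line)
        return cost


def phantom_operation(cache, secret):
    # Stands in for the speculative sequence: its result is thrown away,
    # but the user-memory access at (secret & 0x100) still fills the cache.
    cache.access(secret & 0x100)


def infer_bit(cache, secret):
    cache.flush()
    phantom_operation(cache, secret)
    # Legal, timed read of user address 0x000: fast means the phantom
    # access went there (bit clear); slow means it went to 0x100 (bit set).
    return 0 if cache.access(0x000) == FAST else 1


cache = ToyCache()
print(infer_bit(cache, 0x1FF), infer_bit(cache, 0x0FF))
```

The attacker's code never reads `secret` directly in `infer_bit`; it only observes whether its own legal access was fast or slow.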

James Wright

Nearly, you compute a memory address based on a single bit in the hidden value and then access that memory address, all within the branch that will ultimately be thrown away. However, you can still determine the hidden value by timing how long it then takes to access that memory address, because if it’s super fast, then you know it must be in the CPU’s cache and not main memory (as it has been used before in the branch that got thrown away). Well done, you’ve just discovered a single bit of memory that you were never meant to see… now repeat for the rest… :-)

Cenek

You do not have to read data directly from within the cache. Your cache was flushed, so neither array[0] nor array[4] is cached. Then, after execution, a timed read of one of these two values will leak whether that particular value is cached or not. Cached and uncached can be told apart by the delay: a short time means the data is cached, a long time means it was read directly from memory … which is exactly one bit of information ;)

S. Rose

This is extremely well-written, and if a reader has the patience to read it through carefully, that reader comes away with an understanding not just of how a kernel-reading exploit could be constructed, but also twenty years of advances in CPU design. I am in awe, and reassured to have people like Eben Upton on the users’ side.

Badger

Exactly what I was thinking.

(say about 1% of readers)

:)

Aung Thu Htun

pardon my noobie question,

does that mean smartphones made with Cortex-A53 processor are not affected by meltdown & spectre?

(e.g. Huawei’s Honor 7X smartphone with CPU: octa-core (4×2.36 GHz Cortex-A53 & 4×1.7 GHz Cortex-A53), OS: Android 7.0 (Nougat))

million thanks….

Sam

Aung Thu Htun said: 6th Jan 2018 at 7:31 am

>does that mean smartphones made with Cortex-A53 processor

>are not affected by meltdown & spectre?

Yes, that is exactly the case. A smartphone with just a bunch of Cortex-A53 processor cores is NOT affected – neither by Meltdown nor by Spectre.

My own smartphone, a Sony Xperia X, for example is an interesting case as it is partly affected: It has two fast Cortex-A72 cores, which ARE affected. And it has four slower, power-saving Cortex-A53 cores, which are NOT affected. :-) So depending on what CPU core a program is currently running on (programs/threads are hopping from core to core constantly depending on processor load etc.), it can be vulnerable or not.

Mike Morrow

What is not discussed is that you have to have a program running that is doing these timings. That, alone, would skew the numbers as control is taken from one thread and given to another. Or… If the pipeline is stuck waiting on something, where is this program going to run? I guess it could run on an additional core. Then it would have to be running really tight code to get these timings. In fact, it seems like the program would have to run faster than the cycle time of the core to be able to watch what is happening (timing wise) in another core or memory or cache or whatever it is exactly watching. This seems on the face of it to be impossible since you have to run faster than what you are timing for timing to be usable. Am I missing something here, or was it just left out? So far, all of this seems theoretical. It seems like you would need another, faster processor to time the decision processes of the other, slower processor. How can all this actually run on the same CPU, even with multiple cores? Maybe the timing program can run faster after its memory is all in cache. But, then, it has to collect and eventually send this data out so it is subject to the same speed restrictions on the internal bus(es) as the program it is watching. Seems all very theoretical and not particularly practical. Where is this wrong? My speculations must be wrong if this can actually be done.

Silviu

Make chains of assembly instructions:

a) 1 2 3 4 5 6 7 8 9 10 11 12

b1) 1 2 3 4 5 6 7 8 9 10 11 12 13

b2) 1 2 3 4 5 6 7 8 9 10 11

a) = done for measurement, at the end see if bx) is completed

If bx) is not completed, we assume it’s b1)

If bx) is completed, we assume it’s b2)

It’s more complex than that of course.

John Read

This is a great article in explaining the processor issues and the operation of the current software fixes. In terms of future processor architecture design, how easy will it be to design this out for ARM and Intel, and will it be possible to do so without suffering a significant performance hit in future processor designs? Are there any designs in the pipeline that take a different approach to speculation and parallel pipelines from the current generation of processor architectures?

It’s FOSS

And all these years, ARM has been ignored by major players. At least this should make people think about wider adoption of ARM processors.

N1X

Thanks to your blog and FOSS, my shift towards ARM or Pi in general saved me a lot of headache this new years’ (and not to mention the super-ability to have avoided server downtime)!

And Eben! Even a noobie like me almost got this precisely in the first-read! Spick-and-span, just like the <3ly ARMarch!

Terry Coles

Eben,

Long before I retired, I worked for some years as a Technical Author; an experience that makes me super-critical of so-called technical journalists and authors who don’t really understand their topic.

I have to say that this is the best bit of technical journalism that I’ve read for years. After technical authorship I worked as an engineer in the test industry, but 30 years of that did not equip me to understand the intricacies of CPU architecture. Your posting has impressed me most because it doesn’t assume that the reader knows anything beyond a slight grounding in electronics engineering and computer science, but, to me anyway, it is incredibly readable.

Thanks for this and for everything else that you’ve done for education.

Eben Upton

Thanks Terry – that means a lot. These days I don’t often get a continuous block of time required to write this sort of thing, but this felt worth spending a day on. I ran out of time before getting to the detail of Spectre, but I’ve started adding some relevant material (e.g. branch prediction) to the post, and hope to get to it this week.

Dennis

+1

Barnaby

Amazingly clear writeup; more content like this please.

Taivas

Very good article!

Thanks for explaining this complex matter in an easy to read and understandable way for everybody.

Keep on writing technical stories like this.

Martin Whitfield

I see what you do Eben.

Raspberry Pi is actually Battlestar Galactica.

:-)

Nice explanation. I now have something to point folks to.

Geoff S

Hi Eben. A really helpful and informative post. It made way more sense than the flurry of pseudo-tech commentaries I’d been reading before today. Thanks for the update and pleased the Pi is spared.

Say hi to Liz too

Geoff

Jack Cole

Nice article that triggered an equally nice discussion in comments. Sad to see Wikipedia description incorrectly pin side channel attacks to crypto systems when in fact such attacks are widely used and not unique to crypto systems. Thanks for taking time to write, and for providing a useful product to the general public.

Cat

Reading the white papers, the only (known) way to deploy the Spectre attack would be to have a kernel with the Berkeley Packet Filter JIT compiled in, which is in Ring 0. What if it’s more of a flaw in the BPF or gcc toolchain, and not necessarily a flaw with any particular processor?

Have we seen it deployed as anything other than taking advantage of any other methods?

Either way, the fix on the arm white paper for the issue isn’t computationally expensive.

Alex

Incredibly interesting. Thank you for the write up.

Jay Boisseau

This excellent explanation is a great primer on how (most) modern microprocessors and compilers attempt to maximize performance, as well as the clearest explanation of the fundamentals of Meltdown and Spectre. Great job!

Frank

Thanks Eben for taking the time and effort to write this article! It’s the most understandable one I have read so far.

Dominic Giampaolo

This is hands-down the best explanation of Meltdown and Spectre that I have come across. Extremely well presented and easy to understand. Thanks.

Canol

Thanks for the great explanation!

Romel

Awesome explanation.

Easy to read, easy to understand.

Peter

The ARM Cortex A7 and A53 (and even the old 1176) certainly can both prefetch and execute speculatively. Speculative instructions will not be retired, but loads _will_ cause page walks and cache fills to be initiated while still speculative in the issue and dc1 stage. There are at most 6 instructions after the branch that are executed until the pipeline is cleared if mispredicted.

Whatever saves most Arm processors from Spectre, it is not a lack of speculative issue of loads. What does save them is the fact that the first load will get abandoned before it can forward to the AGU for the second load, since there is no register renaming that can handle undoing of register updates. No speculative instructions after the branch will retire.

It would be possible for the second load to use a forwarded result from dc2 of the first load to start generating the TLB lookup. I guess something in the timing allows the page table walk to be abandoned before it is started.

Evgeny

Is Raspberry Pi vulnerable to Rowhammer?

Martin Taylor

That was a very well written piece about a complex subject. Thanks for that.

htom

Such a great post, Eben. I don’t think I have ever read such a clear and concise explanation of this kind of problem in a CPU. (I know I was never able to be either, although I sometimes convinced management they’d had a bad idea.)

Thank you.

(Yes, the 68xxx family was the Betamax of CPUs. Should have won. Was better. The Amiga … (still have a hot-rodded 2000) … I have never had a WinTel machine that was as responsive to human input. Improper design focus, imao.)

Alexander

I have the only non-vulnerable computer in the house!! Thanks for the information

ALbert Mietus

Thanks. A #mustRead for all software engineers, I would say

And, an inspiration for everybody how to write documentation that is useful

Denheer de Luynes

You can always tell when someone understands something markedly; they explain it so well.

Thanks, Eben.

Mike

Hi Eben,

Your article is the first I have found that explains how memory is exposed where it shouldn’t be — Thank you! But I am having a difficult time understanding how this would be useful to anyone. Isn’t this like opening a book and reading a part on a random page one bit at a time? I don’t see how anyone could use this to figure out what book they’re reading. If an attacker’s purpose is to gather info (passwords, credit card data, etc.), how could they possibly know what they are reading until they read a sizable chunk of memory and analyze it to see what they collected? Or am I missing something?

Ralph Corderoy

Hi Eben,

Hennessy and Patterson might be a bit deep end for those wanting an introductory overview. Are you aware of the excellent `The Elements of Computing Systems’ by Nisan and Schocken? http://amzn.to/1qlmwCy

The first half introduces Boolean logic and their own little hardware-description language. They provide a series of Java emulators for the HDL and have one write plain text files to build logic gates, registers, RAM, ALU, etc., to implement a simple CPU for a PDA.

The second half has you use the language of your choice to write an assembler for it, then a compiler to byte-code for a simple OO language, and then the VM to execute that as the CPU’s operating system. Finally, there’s Pong written in that OO language.

All the exercises come with expected outputs and a test harness so you only progress when you’ve grasped the material. It’s a slim book, so not daunting, and covers just enough of each topic to string them together. It’s nicknamed `The NAND Book’, because you build this little PDA from just NANDs.

BTW, how did you transition from software to VLSI design? It’s an uncommon route.

Cheers, Ralph.

Waldek

Hi Eben,

Thanks for the explanation. I might be wrong, but the real problem with the Spectre vulnerability is not the speculative execution in the CPU. It is the ability to control the CPU cache, such as cache eviction by a userspace program. There is the ARMageddon paper (https://www.blackhat.com/docs/eu-16/materials/eu-16-Lipp-ARMageddon-How-Your-Smartphone-CPU-Breaks-Software-Level-Security-And-Privacy.pdf), and it mentions the ARM Cortex-A53.

Is this not relevant for RPi ? If not, why ?

HeS

Thanks a lot. Example is better than a lecture:)

Manuel Sanchez

What an amazing way of explaining quite a few microprocessor design concepts building on the same example!

Just one nit which perhaps could make it more accessible: many readers would expect bit 8 in 0x100 to be named as “ninth” when referred to as an ordinal

A

Exactly my reaction, the bit with an index of 8 is the ninth bit. If we had indexed the bits alphabetically then the “i”th bit would still be the ninth bit, because the two concepts are separate.

Still the most interesting thing I’ve read in a long time. Thumbs up.

William Stevenson

Yes. Thanks very much for the effort of trying to explain this to a lot of people who don’t understand what’s going on, which includes me.

Pugwash

It’s always good to see the BBC B referred to in a story mentioning the good old days.

The Green Billy

“””

Even though the processor always speculatively reads from the kernel address, it must defer the resulting fault until it knows that v was non-zero

“””

The zero-check ensures that the memory access violation (a memory access violation zeros out the target register) doesn’t happen sooner than the parallel computation. What if you remove the if statement (i.e. remove any need for speculation)?

Sure – you will get a less accurate memory dump (with some zeros here and there), but won’t this still mean you can get a somewhat estimated guess of the kernel memory contents?

Jim

Fantastic article. I have a small question, and it’s somewhat subjective.

I’m wondering if perhaps there is an off by one error in here? You say “[this] will access either userland address 0x000 or address 0x100 depending on the eighth bit of the result of the illegal read.”

Wouldn’t this be dependent on the _ninth_ bit of the illegal read? Granted, it depends on whether we’re calling w_&0x1 the first bit or the zeroth bit.

Just wanted to clarify for myself and anyone else who may have wondering similarly. Definitely not trying to be pedantic. Thanks for the article, it’s very helpful.
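For anyone wanting to check the numbering for themselves, here is a short sketch in the article's Python style. The sample values of `w` are arbitrary and only there to show what the mask keeps.

```python
mask = 0x100

# The mask is a 1 shifted left by eight places, so it selects the bit
# with index 8 when counting from zero.
print(mask == 1 << 8)

# Counting from one instead, the same bit is the ninth bit.
print(mask.bit_length())

# Masking leaves either 0x000 or 0x100, whatever the other bits are.
for w in (0x0FF, 0x100, 0x1AB):
    print(hex(w), "->", hex(w & mask))
```

So "eighth" and "ninth" both describe the same bit; the difference is only whether counting starts at zero or at one.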

PW42

Thank you Eben for your clear exposition of the basis of the Spectre problem. It would be very interesting if you could write a follow-up in which you describe the principles of the next steps in a hack. Not because I want to write a hacking program, but just because I’m curious.

As a non-assembler programmer I have the following questions:

1. How do you time separate fetch instruction (get the time differences between fetches from user-space and kernel-space)?

2. A program that loops over individual bits of the kernel-space must be pretty slow. Is it not possible that the state of the kernel changes during the loop?

3. Assuming that the kernel state is constant long enough, you know it when the loop is finished. That is, you have a large number of 0’s and 1’s. How do you disassemble such a long binary string to obtain passwords and userid’s from it? (At least this is what is generally described as the main threat of the Spectre vulnerability).

4. If it so happens that the momentary snapshot of the kernel-space does not contain useful info, does the hacking program then repeat the procedure in a kind of while (info is not useful) loop?

I would be grateful if you could answer – at least in principle – the questions above. Undoubtedly there are many more problems toward a hacking program that I’m not even aware of.

Mario Giammarco

On points 2 and 3: yes, you will probably receive an inconsistent sequence of 1s and 0s.

The incredible thing is that this type of attack is quite rare! It is not like ransomware: send it to many people and get money fast. It is a specific attack that needs a human to try to make sense of a sequence of 1s and 0s. So it must be paid for in advance by someone with lots of money, like a government.

Simon Ponder

Thank you for the effort in dumbing this down so that others can understand this, I think I am starting to grasp what is needing to be fixed.

I still don’t understand how they are going to patch this via software and not a physical replacement of a component.

Kratos

My pi shed tears of relief while I read this article to it.

CTSguy

to PW42.

The program (malware) needs to have a starting point. As soon as it has a starting point it can begin to execute its code. It does not determine passwords and user IDs from its derivation of memory locations. The programmer determines the starting point for executing his malware. Once his code begins to execute, it’s Katy bar the door.

takuma udagawa

Hello. This article is awesome, and it is exactly what we all need in order to understand the problems: not only Pi users but also users of other architectures.

I would like to translate it into Japanese. May I?

Colin Wray

It makes me wonder about the authors of these attacks. They must come from a very select group of insiders making it difficult to hide their identities ?

anon

mmm … rpi if it’s vulnerable..

——–

CVE-2017-5715 [branch target injection] aka ‘Spectre Variant 2’

* Mitigation 1

* Hardware (CPU microcode) support for mitigation: UNKNOWN (couldn’t read /dev/cpu/0/msr, is msr support enabled in your kernel?)

* Kernel support for IBRS: NO

* IBRS enabled for Kernel space: NO

* IBRS enabled for User space: NO

* Mitigation 2

* Kernel compiled with retpolines: UNKNOWN (couldn’t read your kernel configuration)

> STATUS: VULNERABLE (IBRS hardware + kernel support OR kernel with retpolines are needed to mitigate the vulnerability)

CVE-2017-5754 [rogue data cache load] aka ‘Meltdown’ aka ‘Variant 3’

* Kernel supports Page Table Isolation (PTI): UNKNOWN (couldn’t read your kernel configuration)

* PTI enabled and active: NO

> STATUS: VULNERABLE (PTI is needed to mitigate the vulnerability)

andrew

+1 for translating it in my native language (Italian). Please get in touch with directions if you’re interested.

Dave

Eben,

I followed this all the way up to the point where you did w_&0x100 to read only one bit from the cache.

Why do that? Why not just read the whole byte?

Silviu

One bit, because the timing comparison only tells you 0 or 1; that is all the bug allows. You read a whole byte by repeating the read 8 times, once per bit (or 32 times for a 32-bit word). It is very slow to do that.
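The bit-at-a-time reassembly can be sketched as follows. This is purely illustrative: `leak_bit` is a hypothetical stand-in for one timing measurement and simply extracts the bit directly, which a real attack of course cannot do.

```python
def leak_bit(secret, bit_index):
    # Hypothetical stand-in for one timing measurement: a real attack would
    # learn this bit from a fast or slow cache access, not by reading it.
    return (secret >> bit_index) & 1


def leak_byte(secret_byte):
    value = 0
    for bit_index in range(8):          # eight measurements per byte
        value |= leak_bit(secret_byte, bit_index) << bit_index
    return value


print(hex(leak_byte(0xA5)))
```

Each byte costs eight separate measurements, which is why dumping a large region this way is slow.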

Charlotte

Thank you for taking the time and trouble to post this. It’s made my head hurt but no pain, no gain!

PW42

To CTSguy at 9th Jan 2018 at 2:11 am, thank you, that makes sense. As I understand you, all you have to do is insert into the kernel a call to executable malware stored somewhere in user-space. This can be done, for instance, by replacing in kernel-space a call to a bona fide program. Do I understand that correctly?

Such a hack is of course a lot easier than what I previously surmised, but it is still far from trivial. It requires more than JavaScript – I read somewhere that a hacking code could be written in JS.

Hans Lepoeter

Thanks, that’s a good explanation.

I discussed with some colleagues today how such a bug could possibly be of use to hackers. Now I know a lot more.

It’s a lot like finding a buffer overflow in a program, allowing reading of memory you are not supposed to

Russell

For cloud computing, this is a very real issue. Or any multi-user environment (web hosting, schools, etc.)

But for the typical PC user, not so much, as there are plenty of easier attack vectors than the dribble of data from this. (emailed links, fake login screens, hacked downloads, social engineering, etc. etc.)

Spectre and Meltdown are not remote access vectors; an attacker must first deliver code to the victim. Javascript attacks are possible, but they would be active only as long as the javascript can execute. If the browser is closed or moved away from the attacker’s site, the attack stops.

If the attacker has a VM in an unpatched cloud provider, then the attacker can have unlimited time to look for usable data from other VMs on the same server.

Even if a Pi were vulnerable, the attacker would need remote access to the Pi. The easiest is to try “pi/raspberry”! If successful, they don’t need any other attack vector. Your Pi is PWNed! (change your Pi password!)

PW42

For a Spectre hack by JavaScript, see Sec. 4.1 of https://arxiv.org/pdf/1801.01203.pdf

42BS

The Spectre attack on a Cortex-A9 seems to be quite difficult. At least the example code which works fine on a PowerPC and Intel Core2 does not give any results on a Xilinx ZYNQ.

Thomas Friehoff

Great article and explanation!

Any idea how long it might take to “acquire” one MByte of data?

Thomas

Jim Manley

We’re not fooled for a minute, Eben – this most outstanding, lucid, informative post was written by Aphra and edited for technical accuracy by Mooncake and Liz, wasn’t it?

Greetings from Montana, where I’m off from teaching today due to a snow day following feet of fluffy white stuff falling the last couple of days. It’s hard to believe such a collection of quadrillions of every-one-unique crystalline structures can be both so beautiful and dad-burned impossible to drive through! The respite is needed so that I could catch up on my now roughly weekly perusal of the blog to see what’s happening in our wonderful community that you’ve all built.

Keep up the great work Eben and Liz … and, oh, yeah, with the Pi stuff, too!

Mario Ray Mahardhika

This is how the vulnerabilities should be explained. Even non-programmers (with a bit of technical knowledge) should be able to understand relatively easily.

pkmlp

Thanks, great explanation, although I didn’t understand all of it. But it is once again a great sign of how the Raspberry Pi people take care of their community.

ABC

thanks for breaking this down to us. Well explained!

Dr.Lal

Also, the Cortex-A53 and Cortex-A55 cores are NOT affected, because those two processor cores don’t do out-of-order execution. Btw, Arm has also released Linux patches for all its processors.

Gregory

I hope raspberry pi 4 will not have unsafe cpu.

XOR

Good read.

Bob Burns

Thanks for the update. I came across an older paper while trying to understand spectre/meltdown and because one of the test devices was an ARM Cortex A53, I am eager to try and adapt its poc to a spectre like test on the Pi.

FLOHR

15.01.2018

I own an instruction set 8080, and 4 TEK 4/8 INMOS T800 Transputers with Parallel C from Logical Systems. I wonder whether there is any forensic circumstantial evidence after a Spectre attack? BAD JAVA!

Dennis

FLOHR: don’t you mean “java bad”?

6502 reigns supreme!

FLOHR

Intel has informed HPE that Itanium is not impacted by these vulnerabilities. (CVE-2017-5715, CVE-2017-5753, CVE-2017-5754)

SUPPORT COMMUNICATION – CUSTOMER BULLETIN Document ID: a00039267en_us :

https://ark.intel.com/products/family/451/Intel-Itanium-Processor until 2025

Isn’t it bad just piping true 32-bit CPUs using 8 cores?

Friends never let friends fly with Boeing … my IBM started with ? 3.77 MHz and a week later the Taiwanese pushed it up to 10 MHz. Remember when Apple announced “Oh Dad, my PC has eaten my homework”? A Ras Pi cannot be eaten!!! FACTS.

Jonathan Miller

Thank you for writing this up – this is an excellent and clear explanation of why these two attacks depend on speculation. Keep up the great blog!

Marco Faustinelli

I may be no Raspberry PI devotee and therefore I find it difficult to join the praise chorus. I came here through a referral link, I read the thing carefully but the key questions still remain open:

1) may I understand why:

0 && 0x100 = 0x000

1 && 0x100 = 0x100

without having to read a massive academic treatise?

2) Shouldn’t an instruction issued by a user program to read into kernel memory be banned from execution regardless?

To me this page reads just like a sleek advertorial for the Pi. You happened to choose processors without speculation; maybe you had to, and possibly you regretted that choice a thousand times in the last years. Now it comes out that speculation is bad and, hooray!

FLOHR

Spectre example: ( say 1 )

if (x < array1_size) {
    y = array2[array1[x] * 256];
}

In Itanium, if array1_size is known to be unchanged in the function, it would be loaded early and maintained in a register, as would the addresses of array1 and array2, so the Itanium coding for the expression would be:

cmp.ltu p6, p7 = r13, r14
shladd r8 = r13, 2, r15 ;;
(p6) ld4 r8 = [ r8 ] ;;
(p6) shl r8 = r8, 8 ;;
(p6) add r8 = r8, 8 ;;
(p6) ld4 r8 = [ r8 ]

Notice there’s no branching; further, the architecture definition ensures that no results of predicated operations are architecturally visible, even in the case of loads, stores, or exceptions.
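For readers wondering why the bounds check in the C fragment above does not help on a speculating core, here is a toy sketch in the Python style used by the article. It is not an exploit and does not touch real caches: the set called cache, the mispredict flag and the flat memory list are all assumptions made up for illustration. A real attack would train the branch predictor and recover the cached line by timing loads.

```python
# Toy model only: the "cache" is a Python set and speculation is a boolean flag.
array1 = [1, 2, 3, 4]
secret = [ord(c) for c in "Pi"]   # hypothetical data lying just past array1
memory = array1 + secret          # flat memory: an out-of-bounds index reaches secret
array1_size = len(array1)
cache = set()                     # offsets into array2 that are currently "cached"


def victim(x, mispredict):
    """Bounds-checked read. `mispredict` models the predictor guessing 'in bounds'."""
    if x < array1_size or mispredict:
        # Stands in for the load of array2[array1[x] * 256]: even if the result
        # is later thrown away, the touched line stays in the cache.
        cache.add(memory[x] * 256)


def recover_byte():
    """Attacker step: find which array2 line is cached (really done by timing)."""
    (line,) = cache               # exactly one line is expected to be cached
    return line // 256


# Without speculation the bounds check does its job and nothing is cached.
victim(array1_size, mispredict=False)
blocked = len(cache) == 0

# With a mispredicted branch the out-of-bounds byte leaves a trace in the cache.
victim(array1_size, mispredict=True)
leaked = recover_byte()
print(blocked, chr(leaked))
```

The factor of 256 plays the same role as in the quoted fragment: it spreads the possible byte values across distinct lines so that each value leaves a distinguishable trace.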

THX !

FLOHR

http://developer.amd.com/wordpress/media/2013/12/Managing-Speculation-on-AMD-Processors.pdf

MITIGATION V2-1

Description:

Convert indirect branches into a “retpoline”. Retpoline sequences are a software construct which allows indirect branches to be isolated from speculative execution. It uses properties of the return stack buffer (RSB) to control speculation. The RSB can be filled with safe targets on entry to a privileged mode and is per thread for SMT processors. So instead of this:

1: jmp *[eax] ; jump to address pointed to by EAX
2:

To this:

1: call l5 ; keep return stack balanced
l2: pause ; keep speculation to a minimum
3: lfence
4: jmp l2
l5: add rsp, 8 ; assumes 64 bit stack
6: push [eax] ; put true target on stack
7: ret

And this:

1: call *[eax]

To this:

1: jmp l9
l2: call l6 ; keep return stack balanced
l3: pause
4: lfence ; keep speculation to a minimum
5: jmp l3
l6: add rsp, 8 ; assumes 64 bit stack
7: push [eax] ; put true target on stack
8: ret
l9: call l2

Effect:

This sequence controls the processor’s speculation to a safe known point. The performance impact is likely greater than V2-2 but more portable across the x86 architecture. Care needs to be taken for use outside of privileged mode where the RSB was not cleared on entry or the sequence can be interrupted.

AMD processors do not put RET based predictions in BTB type structures.

MITIGATION V2-2

THX

Bob Burns

I’ve been digging into this more, and have a super dumb question I can’t find an answer to online: does branch prediction on Cortex-A53 execute pre-fetched instructions, or does it wait until it is known that the branch is correct (hence in-order execution)? I’m assuming from Eben’s explanation that they are not executed, but I haven’t found the specifics of what happens in A53 branch prediction.

Thanks,

Bob

Bob Burns

I should have looked closer at this thread. This was answered very well by jbd around Jan 5. Sorry about that.

FLOHR

Normally we consider assembler code. Try a deep look into timing as used in time-critical applications like the Airbus A380, or pay 99 euros for a HEISE webinar with OLD Stiller.

https://www.hochschule-trier.de/uploads/media/Wilhelm_-_ASE-2013_01.pdf

For example; there is more about this on the web by Reinhard Wilhelm, PhD, Emeritus.

BEST !

TheLinuxCode

So, AMD CPUs are only affected by Spectre.

But as I remember from some comments in the dozens of threads that I read, the function affected by Spectre can be disabled (and it’s disabled by default). So an AMD CPU would have no problem unless you need that function.

Am I right?

Néstor

Good, ARM has published some vulnerabilities on their processors:

https://developer.arm.com/support/security-update

Sam @ GadgetCouncil

Hey, where can I read the English version? Kidding, great post.

Michal

Why the eighth bit? “…depending on the eighth bit of the result of the illegal read…” It could take any value. Can you please explain?

Eben Upton

It’s the eighth bit because we mask all the other bits (by ANDing with 0x100). The resulting value determines the address of the dependent load, and so which line ends up being fetched into the cache.
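A small sketch in the article's Python style may make the masking concrete. The helper name masked and the sample values are made up for illustration. ANDing with 0x100 clears every bit except bit 8 (counting from zero), so whatever the illegally read value was, only two results are possible, and hence only two addresses for the dependent load.

```python
def masked(w):
    """Keep only bit 8 of w (counting from zero); every other bit is cleared."""
    return w & 0x100


# A few arbitrary sample values standing in for the result of the illegal read.
for w in (0x000, 0x0FF, 0x100, 0x1FF, 0x2AB, 0x3AB):
    print(f"w = {w:#05x}  ->  w & 0x100 = {masked(w):#05x}")

# Whatever w is, the masked value is one of exactly two possibilities.
possible = {masked(w) for w in range(0x400)}
print(sorted(possible))
```

Because the masked value is either 0x000 or 0x100, seeing which of those two locations ended up in the cache reveals that one bit of the original value.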

Comments are closed