ScreenDress is embellished with spooky Pi-powered eyes | The MagPi #135

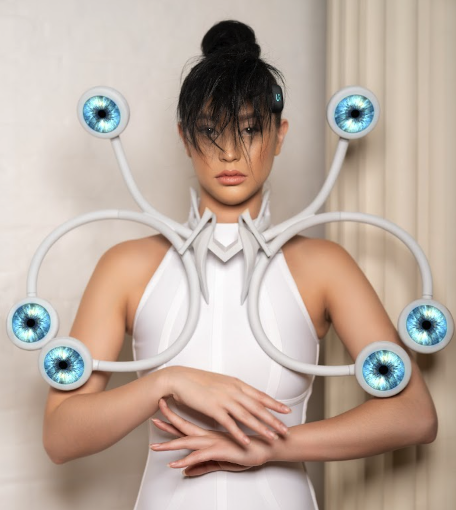

We’d definitely say yes to this dress – a stunning creation by Anouk Wipprecht that is proving to be an eye-opener, as David Crookes discovers in the latest issue of The MagPi.

It’s fair to say that clothing fashion and computing don’t always go hand-in-hand. But bring amazing talent such as Anouk Wipprecht on board and all eyes are definitely going to be on the wearer – as this striking ScreenDress shows to great effect.

As well as fitting snugly around the body, the 3D-printed dress includes a set of circular LCD screens, each of which looks like an eye. Connected to an EEG sensor – a four-channel BCI headset developed by neurotechnology company g.tec – they change according to how the wearer feels by reading brainwaves and working out a person’s cognitive load.

To that end, the screens can show signs of stress, fatigue, and frustration. The more a person subconsciously feels their mental load is increasing, the wider the iris and pupils dilate. By making changes in real time, Anouk, a Dutch FashionTech designer, says the dress is able to show a direct correlation between a person’s actions and their brain’s reaction. One thing’s for sure, it’s certainly eye-catching.
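The article doesn’t publish the code that drives the eyes, but the idea of "more load, wider pupil" is easy to picture. Here’s a minimal sketch in Python, assuming the sensor pipeline hands over a cognitive-load score between 0 and 1. The function names, the pixel values, and the smoothing step are all our own illustrative assumptions, not Anouk’s implementation.

```python
def pupil_radius(load: float, min_radius: float = 40.0, max_radius: float = 120.0) -> float:
    """Map a cognitive-load score to a pupil radius in pixels.

    Assumption: load is a score where 0 means relaxed and 1 means fully loaded.
    The radius limits are made-up values for illustration only.
    """
    # Clamp out-of-range scores so the pupil never leaves its limits.
    clamped = max(0.0, min(1.0, load))
    # Linear interpolation: higher load gives a wider pupil.
    return min_radius + clamped * (max_radius - min_radius)


def smooth(previous: float, target: float, alpha: float = 0.2) -> float:
    """Move part of the way towards the target so the eye eases rather than jumps."""
    return previous + alpha * (target - previous)
```

Calling `smooth()` once per animation frame with the latest `pupil_radius()` value as the target would let the pupil drift towards its new size instead of snapping to it.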

Dress to impress

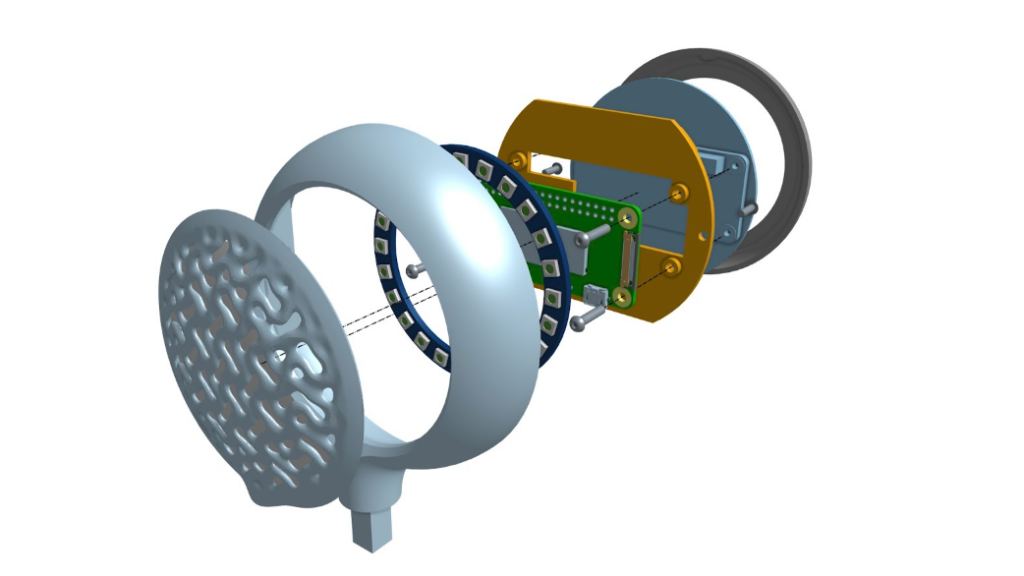

ScreenDress was created from scratch. Both the headset, called the Unicorn Headband, and the dress were designed in Onshape, a free, cloud-based CAD app. Anouk says it helped her to understand the look and feel of the possible embedded LEDs and how light reflected back on the body and space around it. “I used various Onshape design capabilities including mixed modelling, generative design, render studio, and in-context design to reference hardware elements,” she adds.

The dress was printed on an HP Jet Fusion 5420W 3D printer using a form of nylon. “This material is ideal for engineering-grade, white, quality functional production parts,” Anouk continues, pointing out that the components are light, an important factor in something designed to be worn.

Behind each of the HyperPixel 2.1 round hi-res screens that display the eyes is a Raspberry Pi Zero 2 W computer. “There are six of them,” Anouk says. They take information from the sensor that has been analysed in real time and determine how the animated eyes appear on the screen.

Got the look

The eyes flare out from a sculpted neck-piece, and to ensure the ScreenDress is in tune with the wearer’s brain, anyone wearing it has to undergo a two-minute training session. Machine learning then begins to understand the wearer’s mental workload so that the eyes work as accurately as possible.
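The article doesn’t say which machine-learning method is used, so the sketch below swaps in something much simpler to show why a per-wearer training session matters: baseline normalisation. It assumes the training session yields a list of raw workload readings for that wearer, then scores later readings relative to that personal baseline. The class name, method names, and the choice of a logistic squash are hypothetical, not the g.tec approach.

```python
import math
import statistics


class LoadCalibrator:
    """Score readings relative to one wearer's baseline (illustrative stand-in only)."""

    def __init__(self, baseline_samples):
        # Assumption: samples are raw workload readings gathered during training.
        if len(baseline_samples) < 2:
            raise ValueError("need at least two baseline samples")
        self.mean = statistics.fmean(baseline_samples)
        # Fall back to 1.0 if every sample is identical, to avoid dividing by zero.
        self.stdev = statistics.stdev(baseline_samples) or 1.0

    def score(self, reading: float) -> float:
        """Return a value between 0 and 1; 0.5 means 'at this wearer's baseline'."""
        z = (reading - self.mean) / self.stdev
        # Limit extreme values so math.exp cannot overflow.
        z = max(-20.0, min(20.0, z))
        # Logistic squash: readings above baseline push the score towards 1.
        return 1.0 / (1.0 + math.exp(-z))
```

Because the mean and spread are measured per person, the same raw reading can produce a different score for two different wearers, which is the point of calibrating before the eyes start reacting.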

“Our fortunes turned when Raspberry Pi Zero 2 W, equipped with a quad-core Cortex A53 processor, was released,” Anouk says. “This upgrade significantly enhanced the processing capabilities [over Raspberry Pi Zero W], allowing us to manage data seamlessly and achieve the desired performance.”

ScreenDress is certainly cool and it turned a lot of heads when it was first put on show at the Ars Electronica Festival in Linz, Austria in September before being presented in Budapest, Hungary and Eindhoven, Netherlands. It has also posed a lot of questions.

“What does it mean when we can connect technological-expressive garments to our bodies, body signals, and even emotions? What dialogues can we trigger? This is what I am exploring with designs like these,” Anouk explains. It also means that Raspberry Pi computers can be seen as a fashion accessory, potentially inspiring many other makers.

The MagPi #135 out NOW!

You can grab the brand-new issue right now from Tesco, Sainsbury’s, Asda, WHSmith, and other newsagents, including the Raspberry Pi Store in Cambridge. It’s also available at our online store which ships around the world. You can also get it via our app on Android or iOS.

You can also subscribe to the print version of The MagPi. Not only do we deliver it globally, but people who sign up to the six- or twelve-month print subscription get a FREE Raspberry Pi Pico W!

The free PDF will be available in three weeks’ time. Visit the issue page for more details.

2 comments

Pete Chown

I wonder if this could be combined with a camera and face recognition, so when you go and talk to the wearer, all the fake eyes meet your gaze. That would be really spooky.

Perhaps the measured cognitive load changes as the wearer gets bored. If so, you could find that you were talking to the wearer, but gradually some of the eyes start wandering and looking at other things!

Adam Bryant

Wow, Just read about this in the Issue, would love to read more on the tech behind it.
