What did computer engineering students at Cornell make with RP2040 last term?

We first made friends with Hunter Adams, a lecturer in Electrical and Computer Engineering at Cornell University, when he got in touch at the end of 2022 to let us know he had designed his Digital Systems Design Using Microcontrollers course around our RP2040 chip.

Fall semester (or autumn term to us hold-outs in the UK) that year saw Hunter’s class make, among many other things, a device which lets you play Minecraft by blowing certain notes on a recorder, and a dancing LED cube.

Fast forward to the last semester of 2023, and Hunter has fostered yet more Pi-based productivity in his students. His Computer Engineering cohort at Cornell built no fewer than 44 projects around RP2040. Their latest creations include:

- A reimagined version of Atari’s Pong

- A gesture-controlled kitchen utensil carousel

- An RP2040 DJ

- A drawing robot

- A luggage-following robot

This YouTube playlist collects the students’ video demonstrations for every project that has one. The most intriguing project titles by far, in my opinion, are the Christmas Caroling Muscle Machine and the Interactive Lightsaber. Let’s take a closer look at them both.

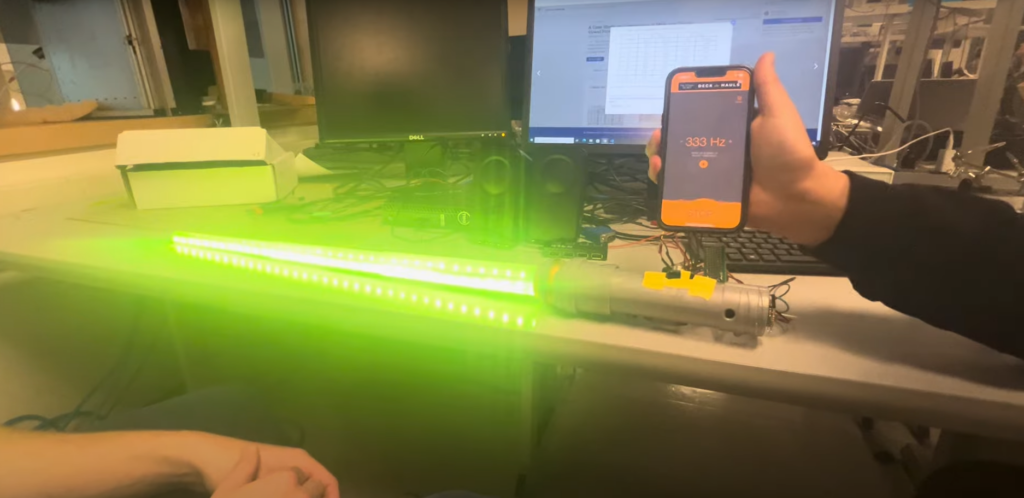

Interactive lightsaber

Star Wars fans Berk Gokmen and Justin Green made a 24-bit LED-illuminated lightsaber that changes colour depending on which character you fancy cosplaying as that day. Using a microphone, an RP2040, and an addressable LED strip, their interactive lightsaber analyses the sounds it picks up and reacts accordingly.

‘Disco mode’ changes the colour of the lightsaber based on the frequency and intensity of the surrounding sound. A second mode, ‘Vowel detection mode’, changes the colour of the lightsaber to red if the user says “EE” and to blue if the user says “AH.”

The students have put together a more in-depth explainer if you’re interested in how an FFT sound analyser was used to measure the frequency and intensity of sound, or how they used technology from Cepstral to determine whether the lightsaber wielder had made an “EE” or an “AH” sound.
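For anyone wondering what turning “frequency and intensity” into colour might look like, here is a minimal Python sketch. It is not the students’ code, which runs on the RP2040 and is covered in their explainer. It assumes a plain floating-point DFT over a short buffer of samples (an FFT computes the same bins, just faster), and the `dominant_frequency` and `frequency_to_rgb` helpers, the 4 kHz ceiling and the pitch-to-hue mapping are all hypothetical choices made for illustration.

```python
import cmath
import colorsys
import math


def dominant_frequency(samples, sample_rate):
    """Return (frequency_hz, amplitude) of the strongest non-DC bin.

    Uses a naive O(n^2) DFT for clarity; an FFT gives the same bins faster.
    """
    n = len(samples)
    best_bin, best_mag = 0, 0.0
    for k in range(1, n // 2):  # skip DC (k = 0) and stop below Nyquist
        acc = sum(
            samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        if abs(acc) > best_mag:
            best_bin, best_mag = k, abs(acc)
    # A sine of amplitude A landing exactly on bin k gives |X[k]| = A * n / 2.
    return best_bin * sample_rate / n, 2.0 * best_mag / n


def frequency_to_rgb(freq_hz, amplitude, max_freq=4000.0, full_scale=1.0):
    """Hypothetical 'disco' mapping: pitch sets hue, loudness sets brightness."""
    hue = min(freq_hz / max_freq, 1.0) * (5 / 6)  # stop at magenta, don't wrap to red
    value = min(amplitude / full_scale, 1.0)
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, value)
    return round(r * 255), round(g * 255), round(b * 255)


# A 1 kHz tone at half scale, sampled at 8 kHz: 64 samples puts it exactly on bin 8.
RATE = 8000
tone = [0.5 * math.sin(2 * math.pi * 1000 * t / RATE) for t in range(64)]
freq, amp = dominant_frequency(tone, RATE)
print(freq, round(amp, 3), frequency_to_rgb(freq, amp))
```

Louder sounds push the brightness up and higher pitches move the hue from red towards magenta; a silent buffer maps to black, which leaves the blade dark.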

Christmas carolling muscle machine

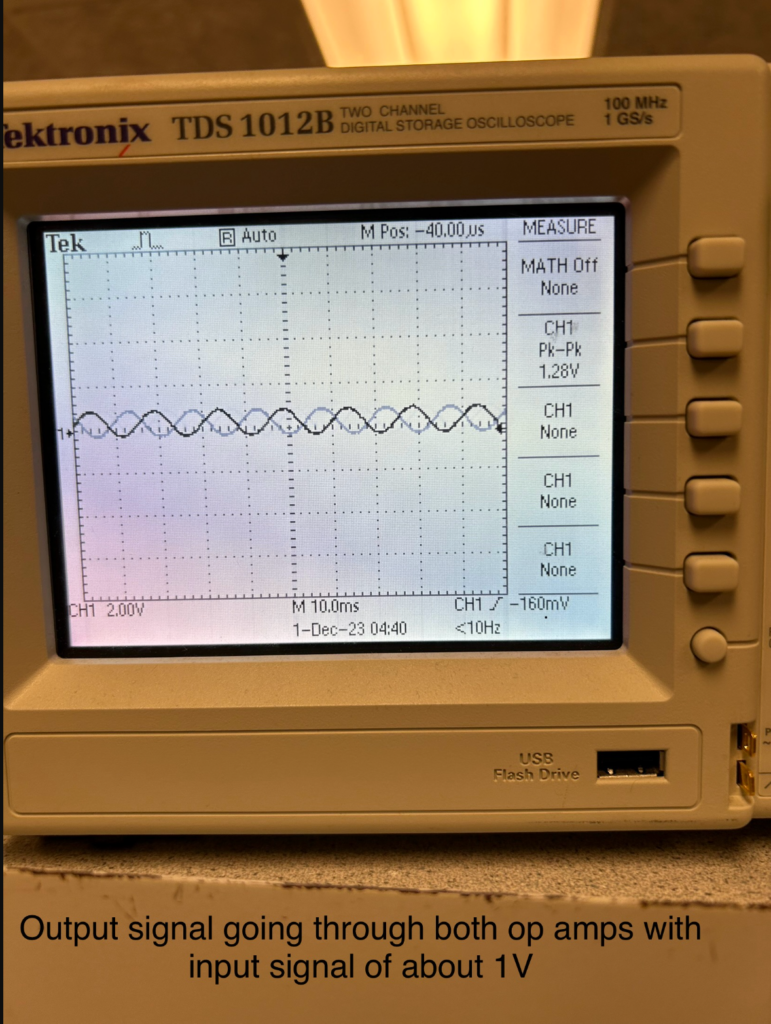

Kidus Zegeye and Sofia Echavarria went totally left-field with their fantastical festive creation. Their Christmas Caroling Muscle Machine uses surface electromyography (EMG) to measure the electrical activity of a muscle as it flexes, then feeds this data into a Markov model trained on Christmas music. The model generates instrumental tunes shaped by how strongly the muscle is tensed. Kidus and Sofia share an interest in how humans interact with technology and how sensors can be used to create art.
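As a rough illustration of how a Markov model can turn muscle readings into music, here is a hedged Python sketch. It is not Kidus and Sofia’s implementation: it assumes a first-order Markov chain over note names, uses the opening of the “Jingle Bells” chorus as a stand-in for their training set, and treats each EMG reading as already normalised to the range 0.0 to 1.0. The `train` and `generate` helpers, and the choice to map muscle tension onto note velocity, are hypothetical.

```python
import random
from collections import defaultdict


def train(melodies):
    """Count first-order note-to-note transitions across training melodies."""
    counts = defaultdict(lambda: defaultdict(int))
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            counts[current][following] += 1
    return {note: dict(nexts) for note, nexts in counts.items()}


def generate(model, start, emg_levels, rng):
    """Emit one (note, velocity) pair per normalised EMG reading (0.0 to 1.0)."""
    note, output = start, []
    for level in emg_levels:
        level = min(max(level, 0.0), 1.0)
        velocity = 40 + round(level * 87)  # hypothetical: harder flex -> louder (MIDI 40..127)
        output.append((note, velocity))
        nexts = model.get(note)
        if not nexts:  # dead end in the training data: jump back to the start note
            note = start
            continue
        notes, weights = zip(*nexts.items())
        note = rng.choices(notes, weights=weights, k=1)[0]  # weighted by transition counts
    return output


# Training data: opening of the "Jingle Bells" chorus (illustration only).
JINGLE = ["E4", "E4", "E4", "E4", "E4", "E4", "E4", "G4", "C4", "D4", "E4"]
model = train([JINGLE])
melody = generate(model, "E4", [0.1, 0.5, 0.9, 1.2], random.Random(0))
print(melody)
```

A first-order chain only remembers the previous note, which keeps the transition table tiny; conditioning on the last two or three notes would sound more like the source carols at the cost of a much larger table.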

Their in-depth explainer is incredibly comprehensive, so go to town if this sort of thing sounds like your bag.

Please brighten my day by gracing the comments with the maddest student projects you’ve ever seen come out of a computing class.
