The Social Interaction Dress

I came across Clodagh O’Mahony on Instagram, right at the start of my Raspberry Pi employment. As I was starting my new adventure with Pi, so was she… albeit via a somewhat different approach.

@yodaomahony *sigh* There goes all my (parents’) money. #goodcause tho. #adafruit #thesiscountdown #day82

@raspberrypifoundation What are you planning to make @yodaomahony?

@yodaomahony @raspberrypifoundation I’m building a dress that quantifies real-world social interactions and posts them online. My thesis is a commentary on how much social media affects our actions.

It’s fair to say that our initial interaction had me hooked on the idea of wearable tech that quantified social interactions. From that moment I followed her account, checking in on her posts and counting down the days until her thesis was due, mainly so I could finally share the build with all of you.

Eventually the Instagram countdown ran its course and last week, as Clodagh announced the end of her project, this long-awaited blog post could finally come to life. And for Clodagh, it meant she had one for us in return…

“Since it’s now all over and done with, I’m going to skip the Phase/Part structure and just do a summary write-up of the thesis build. To be honest, I would probably just let it slide and go back to the pre-thesis days of whinging about my hair and Project Runway, but I feel like I owe the Raspberry Pi Foundation something for following me almost all the way through the Instagram countdown.”

(See? Regardless of what people say, my adorable social bullying helps productivity!)

For her thesis, Clodagh built two components of the study: the dress and its accompanying website.

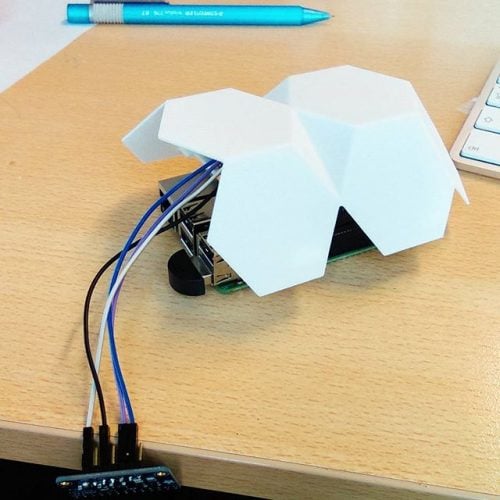

The dress itself houses a Raspberry Pi, fibre optics, an Adafruit 12-Key Capacitive Touch Sensor Breakout and a Pimoroni Blinkt within a beautiful 3D-printed casing.

With the dress split into sectors, lights glow as a body part is touched, thanks to conductive thread… lots of conductive thread. A hand to the waist sets the dress glowing purple, to the hip, green, and so on.
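For the curious, here is a rough sketch in Python of how a touch could be turned into a colour. It is not Clodagh’s code: it assumes the touch breakout reports its 12 pads as a bitmask (one bit per pad), and the pad numbers, the `SECTORS` table and the RGB values are all invented for illustration. Only the waist-to-purple and hip-to-green pairings come from the build described above.

```python
# Hypothetical mapping from touch-pad number to a dress sector and its colour.
# Only "waist -> purple" and "hip -> green" come from the write-up; the pad
# numbers and RGB values are made up for this sketch.
SECTORS = {
    0: ("waist", (128, 0, 128)),  # purple
    1: ("hip", (0, 255, 0)),      # green
}

NUM_PADS = 12    # the touch breakout has 12 inputs
NUM_PIXELS = 8   # the Blinkt has 8 LEDs
OFF = (0, 0, 0)


def touched_sectors(mask):
    """Return the names of mapped sectors whose pad bit is set in `mask`."""
    return [
        name
        for pad, (name, _) in sorted(SECTORS.items())
        if pad < NUM_PADS and mask & (1 << pad)
    ]


def colours_for(mask):
    """Return one RGB tuple per LED for the given touch bitmask.

    The lowest-numbered touched pad that has a mapping decides the colour.
    If no mapped pad is touched, every LED is switched off.
    """
    for pad, (_, rgb) in sorted(SECTORS.items()):
        if mask & (1 << pad):
            return [rgb] * NUM_PIXELS
    return [OFF] * NUM_PIXELS
```

On real hardware, the list returned by `colours_for` would be handed to whichever LED library drives the lights; that step is left out here so the sketch runs on any machine.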

As the touch sets the lights in action, the dress also registers the interaction via a point system, relaying the data back to the website.

It’s fair to note now that the dress and website are all part of a thesis study into the way in which we have handed ourselves over to social media, and the idea of celebrities ‘selling themselves’ online, opening their lives to public scrutiny for the good of their career progression.

Alongside touch, the dress also sets out to award points based on voice. Positive words boast positive colouring across the Blinkt, while negative words do the opposite. Though the device doesn’t record specific speech, it acknowledges words based on a catalogue and awards points accordingly. Points are also granted for profile page views and location, along with multipliers based on how public you make your profile.
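To make the points idea a little more concrete, here is a minimal Python sketch of a catalogue-based scorer. Everything in it is an assumption made for illustration: the words in `WORD_POINTS`, the point values, the visibility levels and their multipliers are invented, and it presumes some upstream step has already turned speech into text. Location points are left out, since the write-up doesn’t say how they are awarded.

```python
import re

# All values below are hypothetical; the real catalogue and point values
# used by the dress are not given in the write-up.
WORD_POINTS = {"love": 2, "wonderful": 2, "hate": -2, "awful": -2}
TOUCH_POINTS = {"waist": 5, "hip": 3}
PAGE_VIEW_POINTS = 1
VISIBILITY_MULTIPLIER = {"private": 1.0, "friends": 1.5, "public": 2.0}


def word_score(transcript):
    """Sum the points of catalogue words found in the text.

    Words that are not in the catalogue score nothing, so nothing beyond
    the catalogue matches needs to be stored.
    """
    words = re.findall(r"[a-z']+", transcript.lower())
    return sum(WORD_POINTS.get(word, 0) for word in words)


def total_score(touches, transcript, page_views, visibility):
    """Combine touch, voice and page-view points, then apply the multiplier."""
    base = (
        sum(TOUCH_POINTS.get(sector, 0) for sector in touches)
        + word_score(transcript)
        + page_views * PAGE_VIEW_POINTS
    )
    return base * VISIBILITY_MULTIPLIER[visibility]
```

With these made-up numbers, a touch to the waist and the hip, one negative catalogue word and four profile views on a fully public profile come to `total_score(["waist", "hip"], "I hate rain", 4, "public")`, which works out as (8 - 2 + 4) × 2.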

It genuinely is wonderful to see the dress come to life. Changes along the way were well-documented – at one point, an entirely new dress was created to better fit the purpose – and with the piece now complete, Clodagh can go back to bingeflixing Project Runway and blogging, while I hunt down my next Instagram ~~victim~~ ~~prey~~ ~~target~~ maker.

4 comments

Michael Horne

Love it. Love it. Love it. Wonderful project.

Steve Richards

Although I am familiar with the technology being used, this area of application is almost like an idea that has come from another planet (I mean that in the best possible way)

And it opens up a whole new area of inspiration for areas to draw on for school computing lessons… Data about social interactions … splendid.

S Foster

…and just on the interactivity front alone, I’m seeing visions of a dozen dancers who can control the colour of their costumes in real-time, just through their own touch or ‘holds’ of a partner. Awesome :-)

AndrewS

Like a more complicated version of https://www.raspberrypi.org/blog/insert-juggling-pun-here/
