Take a photo of yourself as an unreliable cartoon

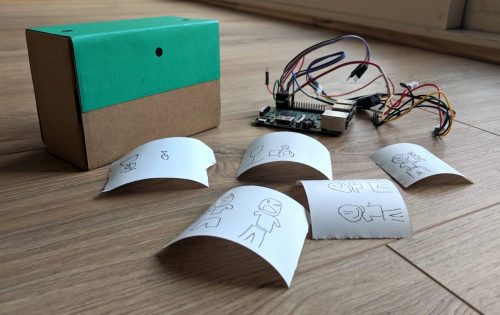

Take a selfie, wait for the image to appear, and behold a cartoon version of yourself. Or, at least, behold a cartoon version of whatever the camera thought it saw. Welcome to Draw This by maker Dan Macnish.

Dan has made code, instructions, and wiring diagrams available to help you bring this beguiling weirdery into your own life.

Neural networks, object recognition, and cartoons

One of the fun things about this re-imagined Polaroid is that you never get to see the original image. You point and shoot, and out pops a cartoon: the camera’s best interpretation of what it saw. The result is always a surprise. A food selfie of a healthy salad might turn into an enormous hot dog, or a photo with friends might be photobombed by a goat.

OK. Let’s take this one step at a time.

Pi + camera + button + LED

Draw This uses a Raspberry Pi 3 and a Camera Module, with a button and a useful status LED connected to the GPIO pins via a breadboard. You press the button, and the camera captures a still image; the LED comes on and stays lit for a couple of seconds while the Pi processes the image. So far, so standard Pi camera build.
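If you'd like to picture that press-capture-process loop in code, here is a minimal sketch. It is not Dan's actual code: the `shoot` and `main` functions, the GPIO pin numbers (17 and 27), and the file name are all assumptions made for illustration. The hardware part leans on the `Button` and `LED` classes from gpiozero and the `PiCamera` class from picamera, and would need adapting to your own wiring.

```python
def shoot(led, camera, process, path="capture.jpg"):
    """Run one capture cycle: LED on, take a still, process it, LED off."""
    led.on()                      # status LED is lit while the Pi is busy
    try:
        camera.capture(path)      # save a still image to disk
        return process(path)      # e.g. detect objects and build the cartoon
    finally:
        led.off()                 # always switch the LED off, even on error


def main():
    """Hypothetical wiring: button on GPIO 17, LED on GPIO 27 (assumptions)."""
    # Imported here so the sketch can be read and tested without a Pi attached.
    from gpiozero import Button, LED
    from picamera import PiCamera

    button, led, camera = Button(17), LED(27), PiCamera()
    while True:
        button.wait_for_press()   # block until the shutter button is pressed
        shoot(led, camera, process=print)
```

On a Pi you would call `main()` and swap `print` for whatever does the image processing. Passing the LED and camera in as arguments keeps `shoot` easy to try out with stand-in objects before any hardware is connected.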

Interpreting and re-interpreting the camera image

Dan uses Python to process the captured photograph, employing a pre-trained machine learning model from Google to recognise multiple objects in the image. Now he brings the strangeness. The Pi matches the things it sees in the photo with doodles from Google’s huge open-source Quick, Draw! dataset, and generates a new image that represents the objects in the original image as doodles. Then a thermal printer connected to the Pi’s GPIO pins prints the results.

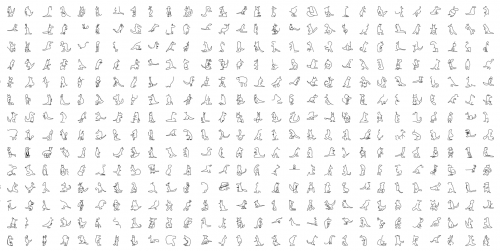

Kangaroos from the Quick, Draw! dataset (I got distracted)

Potential for peculiar

Reading about this build leaves me yearning to see its oddest interpretation of a scene, so if you make this and you find it really does turn you or your friend into a goat, please do share that with us.

And as you can see from my kangaroo digression above, there is a ton of potential for bizarro makes that use the Quick, Draw! dataset, object recognition models, or both; it’s not just the marsupials that are inexplicably compelling (I dare you to go and look and see how long it takes you to get back to whatever you were in the middle of). If you’re planning to make this, or something inspired by this, check out Dan’s cartoonify GitHub repo. And tell us all about it in the comments.

6 comments

Andrew Coburn

That’s brilliant! I am so going to build this with my daughter. This is an inspiring project that touches on physical computing, art, image recognition, machine learning and humour! This will be our first project we build together. Thanks for posting!

Andrew Coburn

This is the first time I’ve seen a Raspberry Pi running off 4 AA batteries. Would this work OK, and how long would the Pi run for? My understanding was that a USB charger normally supplies 5V to the Pi. Wouldn’t this be supplying at least 6V?

Dan Macnish

Hi Andrew, Dan here. I actually used eneloop rechargeable cells rather than standard alkaline AA batteries – these deliver around 1.2V each rather than 1.5V, so four of them supply roughly 4.8V and are safe to use with the Pi. They have been used by other people building Polaroid-style cameras, and seem to deliver enough current for both the Pi and the thermal printer.

Sorry for the confusion, I’ll update GitHub to reflect this!

Alex

Hey!

I would love to couple this project with a Line-us robotic arm instead of a thermal printer. Either with a Bluetooth connection or a sort of little fixed photobooth that draws the cartoon in front of you!

However I’m only a beginner programmer. If anyone is willing to work with me on this it could be awesome!

Good weekend to you all,

Alex

NigelT

Is there any reason for using the antiquated Python 2.7?

thornston

Did anyone try this successfully with an A2 micro panel printer (19200 baud)?
