Sign language translation glasses

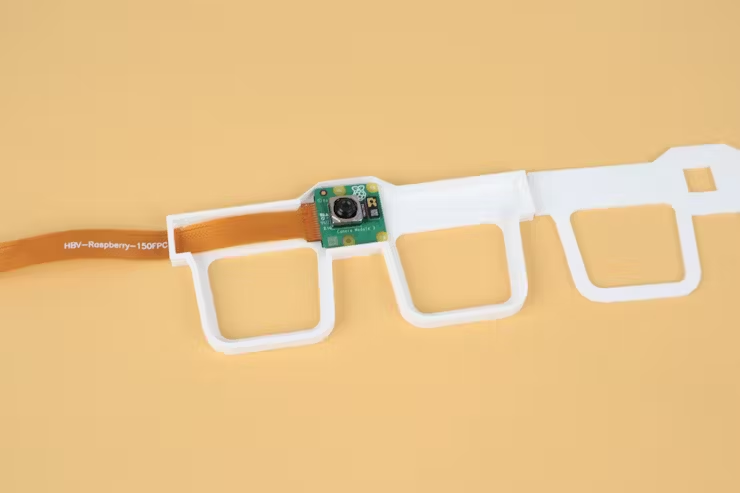

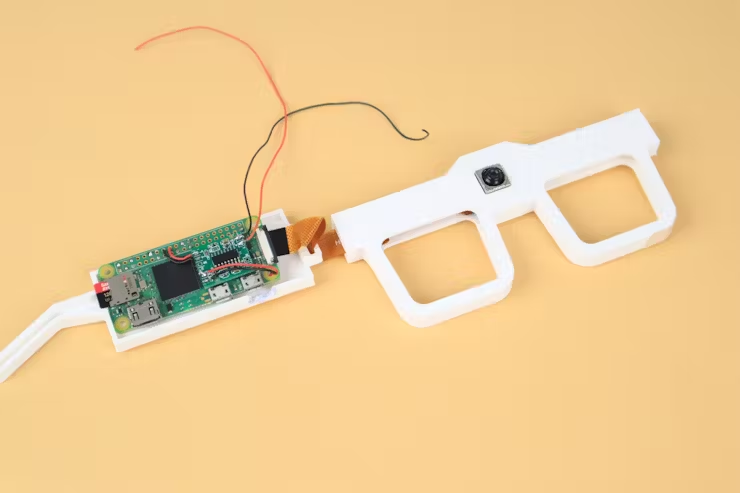

Maker Nekhil has taken a Raspberry Pi Zero 2 W and one of our camera modules to build a piece of wearable tech that could have a transformative impact. These sign language translation glasses run VIAM’s open-source software, and can easily be produced on any generic 3D printer.

Nekhil’s creation allows people who don’t understand sign language (like my monoglot self) to hear the words that someone is signing to them.

How do they work?

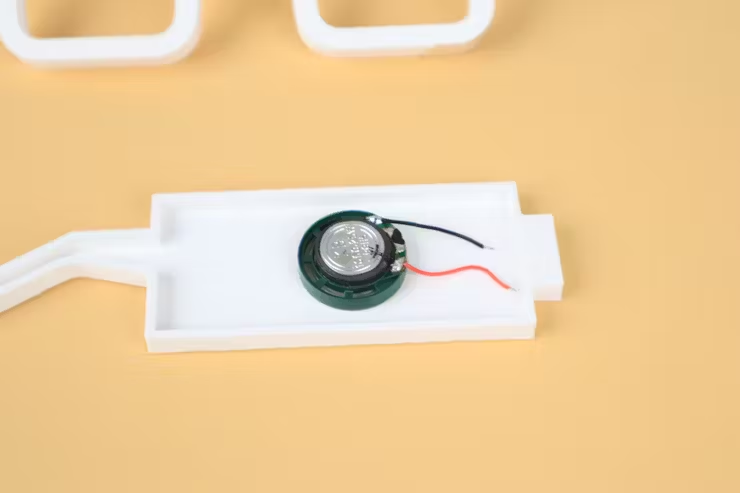

The camera module captures the movements of the signer’s hands, while the Raspberry Pi processes the data using machine learning and translates the gestures into spoken words. A speaker then delivers these out loud. Either the signer or the person they’re communicating with can wear the glasses; the signer’s hands just need to stay in view of the camera module. Doing so might obscure the signer’s face a little, so some nuance gets lost in translation; if you’ve got a good idea for addressing this, share it in the comments.
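To make that capture, classify, speak loop a little more concrete, here is a minimal Python sketch. It is not Nekhil’s code: `classify` and `speak` are hypothetical stand-ins for whatever vision model and text-to-speech step a real build would use, and waiting for a few matching frames before speaking is my own assumption about how you might avoid blurting out a word on every flicker of the classifier.

```python
from typing import Callable, Iterable, Iterator, List, Optional


def stable_words(labels: Iterable[Optional[str]], min_frames: int = 3) -> Iterator[str]:
    """Yield a label once it has appeared on `min_frames` consecutive frames.

    `None` means "no sign recognised in this frame" and is never yielded.
    A sign that is held for longer than `min_frames` is still yielded only once.
    """
    current: Optional[str] = None
    run = 0
    for label in labels:
        if label == current:
            run += 1
        else:
            current, run = label, 1
        if current is not None and run == min_frames:
            yield current


def run_pipeline(
    frames: Iterable[object],
    classify: Callable[[object], Optional[str]],  # hypothetical: frame -> word or None
    speak: Callable[[str], None],                 # hypothetical: word -> audio out
    min_frames: int = 3,
) -> List[str]:
    """Classify each frame, debounce the results, and speak each stable word."""
    spoken: List[str] = []
    labels = (classify(frame) for frame in frames)
    for word in stable_words(labels, min_frames):
        speak(word)
        spoken.append(word)
    return spoken


if __name__ == "__main__":
    # Fake "frames" that are already labels, so the sketch runs without a camera.
    fake_frames = ["hello"] * 4 + [None, "thanks", "hello"] + ["thanks"] * 3
    print(run_pipeline(fake_frames, classify=lambda f: f, speak=lambda w: None))
    # prints ['hello', 'thanks']
```

On real hardware, `frames` would come from the camera module and `speak` would hand the word to a text-to-speech engine driving the speaker; both are left out here so the example stays self-contained.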

Hardware

- Raspberry Pi Zero 2 W

- Raspberry Pi Camera Module 2

- USB sound card

- PAM8403 Amplifier Module

- 3W speaker

Nekhil designed the 3D-printed frames in Fusion 360. He originally wanted to use Raspberry Pi 5 for this project to harness as much image processing power as possible, but he couldn’t find an elegant way to incorporate the bigger board into something comfortable to wear, so he turned to the smaller Zero 2 W. This decision also brought the cost of the build down: Nekhil’s choice of board is priced at $15. The board sits discreetly along one arm of the glasses, with the speaker in the other arm and the camera module nestled front and centre on the frames.

Accessible tech

Nekhil has shared all the code behind this project on GitHub. He hopes to upgrade it to be even better at interpreting more complex elements of sign language, such as hand orientation. If you have any upgrade ideas, post them as comments on Nekhil’s project page or his YouTube demo. It’s lovely to see our hardware used in tech aimed at improving accessibility.

4 comments

thagrol

Interesting idea but…

1. Judging by the source code all the heavy lifting AI/ML work is being done on a cloud service so not really a zero2w project and an internet connection is needed.

2. Which sign language does it translate from? There’s more than one.

3. Their API keys are in their source code on github. Oops.

TL;DR: nice idea but definitely needs work and more info.

Linkin

might need a lighter software?

BrandyBurg

1. It’s a Zero 2 so no surprise that the ML work is VIAM. Anyway, it’s explained as such in the project page, so no great revelation here. No need to look at source code.

2. I would guess Indian, as the project author is in India. But the point here is to demonstrate the tech; other languages could be enabled.

3. This is a bit of a blunder, but a very common one.

Nancy Frishberg

I want to applaud the general idea, but there are difficulties even in this early implementation. Language functions as 2 way communication: the sender of the message is a candidate to receive a message (and vice versa). How does this project acknowledge it?

1. Is the assumption that the Deaf person is signing and the hearing person is wearing the glasses? Then the hearing person gets the AUDIO translation, but the Deaf person has no idea whether the machine said anything. How to correct an error when you don’t know one’s been made? Is there any VISUAL feedback that identification is complete?

2. There’s a party game among American Sign Language speakers where you talk to your friends using a fist (or other single) handshape. Yes, handshape is a distinctive category for signs, but movement is the key to connected text. Without tackling movement parameters, this project will not take off.

3. Do you know the aphorism “Nothing about us without us”? https://www.un.org/esa/socdev/enable/iddp2004.htm It applies here. I urge Nekhil to engage a fluent Deaf signing person to partner with. If that recommendation feels too hard, then it’s worth rethinking what the goal of this project is.

I’ll link to one of several similar critiques of sign language gloves; many of the objections to those projects apply equally well here. https://grieve-smith.com/blog/2016/04/ten-reasons-why-sign-to-speech-is-not-going-to-be-practical-any-time-soon/
