Search our documentation by meaning, not keywords

Raspberry Pi is always looking for new and better ways to do things. One of the most significant ways in which AI has affected the internet is the proliferation of chatbots and answer generators, and while we’ll never use AI to author our documentation, we’re curious to find out whether tools like these could help users find technical information more easily and quickly.

Something you may have heard about is retrieval-augmented generation (RAG for short), which uses a defined set of documentation to answer questions for you in an informed way. In this blog, we are trialling two different RAG-based documentation tools: InKeep and Kapa. Our website is serving two versions of today’s post to visitors at random; if you reload the page, you’ll get the other tool. Why not type a question about something you’d like to do with a Raspberry Pi into the chatbot below and see how it responds?

We’d love you to think about whether the tool did a decent job of answering your question — give the response a thumbs up or a thumbs down and get ready to tell us anything else you want us to bear in mind when you get to the comments section. We take our documentation very seriously, and your thoughts and opinions will help us augment and improve it.

So what's special about this?

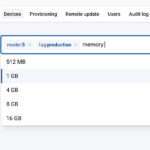

The chatbots are actually using Raspberry Pi documentation, including the HTML, the white papers and books stored on pip.raspberrypi.com, and some specific GitHub repositories that have documentation built into them (for example, rpi-image-gen). Because of this, each tool can search the documentation for the chunks of information most relevant to your question and feed them into the AI model. It then asks the model your question, and the model decides whether it actually has relevant information and generates an answer if it does.

How does it work? Isn't it just a fancy search engine?

When an LLM ingests text, the text is first converted into tokens. You can think of a token as a thing that represents a single word, although most tokens represent sub-words ('running' might be broken up into 'run-' and '-ning', for example). The next stage is to convert those tokens into 'embeddings'. Embeddings are actually vectors in a very high-dimensional space (often hundreds or thousands of dimensions), and these vectors represent the "semantic meaning" of the token. Two vectors that sit close together have a similar meaning. Here's the canonical example:

The diagram above shows an example displayed in three dimensions (because we can't even visualise what four spatial dimensions would look like). You could think of the dimensions as terms like 'gender', 'royalty', and '80s music reviews'! If you take the embedding for 'woman' and subtract the embedding for 'man', you get a vector that roughly means "the shift from male to female". Add that same shift to 'king', and you land very close to 'queen'.
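The arithmetic described above can be tried out with a few hand-made vectors. This is only a minimal sketch: the three-dimensional values in `EMBEDDINGS` are invented for illustration (real embeddings are learnt by a model and have far more dimensions), and the `analogy` helper is a hypothetical name, not part of any library.

```python
import math

# Hand-made 3-D "embeddings". These values are assumptions chosen for
# illustration only; the axes loosely stand for gender, royalty, and an
# unrelated third dimension.
EMBEDDINGS = {
    "man":   [1.0, 0.0, 0.2],
    "woman": [-1.0, 0.0, 0.2],
    "king":  [1.0, 1.0, 0.3],
    "queen": [-1.0, 1.0, 0.3],
    "apple": [0.0, -0.5, 0.9],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def analogy(a, b, c):
    """Return the word closest to (b - a + c), ignoring the three input words."""
    target = [
        vb - va + vc
        for va, vb, vc in zip(EMBEDDINGS[a], EMBEDDINGS[b], EMBEDDINGS[c])
    ]
    candidates = [word for word in EMBEDDINGS if word not in (a, b, c)]
    return max(candidates, key=lambda w: cosine_similarity(target, EMBEDDINGS[w]))


# 'woman' minus 'man' gives the male-to-female shift; add it to 'king'.
print(analogy("man", "woman", "king"))
```

With these toy values the shifted vector lands exactly on 'queen'; with real, learnt embeddings it would only land nearby.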

This isn't a party trick. It tells you something genuinely useful: nearby coordinates in this space mean similar things, and the same kind of relationship between pairs of words shows up as the same kind of geometric shift. The model has learnt, from billions of words of training data, that the relationship between 'king' and 'queen' is about the same as the one between 'man' and 'woman', and it has encoded that in where the points sit.

An extension of this concept is to develop embedding models that can take a sequence of tokens (like a paragraph of, say, 100 words) and create an embedding (vector) from it that gives the semantic meaning of the section of text. This is the type of embedding model used in RAG-based systems, as we want them to find chunks of text that have similar meanings.

Chunking

Once you notice this property, it's fairly clear what to do with it. Take all your documentation, chop it into reasonably sized chunks — a paragraph or two each — and generate an embedding for every chunk. You now have a database in which similar meanings are close together.
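As a rough sketch of the chopping step, the function below splits a document on blank lines and groups the paragraphs into chunks of a paragraph or two each. The function name and the `paragraphs_per_chunk` parameter are assumptions made for this example; production systems often split on headings or token counts instead, and each chunk would then be passed to an embedding model, which is not shown here.

```python
import re


def chunk_paragraphs(text, paragraphs_per_chunk=2):
    """Split text on blank lines and group the paragraphs into chunks."""
    # One or more blank lines separate paragraphs; drop any empty pieces.
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    return [
        "\n\n".join(paragraphs[i:i + paragraphs_per_chunk])
        for i in range(0, len(paragraphs), paragraphs_per_chunk)
    ]


# Placeholder text standing in for a page of documentation.
sample = "First paragraph.\n\nSecond paragraph.\n\nThird paragraph."
for number, chunk in enumerate(chunk_paragraphs(sample), start=1):
    print(f"chunk {number}: {chunk!r}")
```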

When someone asks a question, the system generates an embedding for that question and goes looking through the database for the embeddings that sit closest to it. The closest ones are, by construction, the chunks of documentation whose meaning is most similar to the question. You haven't had to guess the author's vocabulary; the model has done that work for you.
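The lookup described above amounts to a nearest-neighbour search. The sketch below assumes the embeddings have already been computed: the vectors in `INDEX` and `query_vector` are made-up three-dimensional values, and the chunk texts are placeholders rather than real documentation. Cosine similarity is one common choice of closeness measure; the tools in this trial may well use something different.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_chunks(query_vector, index, k=2):
    """Return the text of the k chunks whose embeddings are closest to the query."""
    ranked = sorted(
        index,
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _vector in ranked[:k]]


# Hypothetical index of (chunk text, embedding) pairs. A real system would
# obtain these vectors from an embedding model, not write them by hand.
INDEX = [
    ("Placeholder chunk about cameras", [0.9, 0.1, 0.0]),
    ("Placeholder chunk about serial ports", [0.1, 0.9, 0.1]),
    ("Placeholder chunk about wireless networking", [0.0, 0.2, 0.9]),
]

# Pretend this is the embedding of a question about using a UART.
query_vector = [0.2, 0.8, 0.1]
print(nearest_chunks(query_vector, INDEX, k=1))
```

Scanning every vector like this is fine for a handful of chunks; larger collections usually rely on a vector database or an approximate nearest-neighbour index.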

Finally, generation

At this point, the system has a list of the most relevant bits of documentation. It could just display them to the user as is — and plenty of search systems do stop there — but it can go one step further: it can hand those chunks, along with the original question, to a language model and ask it to write an answer using only those chunks.

That's the 'generation' half of 'retrieval-augmented generation'. The retrieval step finds the right documentation, and the generation step turns it into a tidy, plain-English answer. The model isn't drawing on whatever it happens to remember from the training data, which is where language models tend to get themselves into trouble; it's giving an answer from your actual documentation and nothing else. If the answer isn't there, the tool will state that, rather than making something up.
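To make the hand-off concrete, here is one way the retrieved chunks and the question could be combined into a single prompt. The wording of the instructions and the `build_prompt` name are assumptions for illustration; this is not how InKeep or Kapa actually construct their prompts, and the call to the language model itself is left out.

```python
def build_prompt(question, chunks):
    """Combine retrieved chunks and the user's question into one prompt string."""
    # Number the excerpts so the model (and the reader) can refer back to them.
    excerpts = "\n\n".join(
        f"[{number}] {chunk}" for number, chunk in enumerate(chunks, start=1)
    )
    return (
        "Answer the question using only the documentation excerpts below. "
        "If the excerpts do not contain the answer, say that you do not know.\n\n"
        f"Documentation excerpts:\n{excerpts}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Placeholder chunks standing in for the output of the retrieval step.
prompt = build_prompt(
    "How do I use the serial port?",
    ["Placeholder chunk about serial ports", "Placeholder chunk about cameras"],
)
print(prompt)
```

The instruction to admit when the excerpts do not contain the answer is what nudges the model towards saying so rather than making something up, though how reliably it does so depends on the model.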

Opinions, please

Please have a go and give us feedback on what you found; convenient thumbs-up and -down buttons are included in the chat interface. That information, along with the interaction, will be stored in the system so that we can determine what works and what doesn't.

We will never use AI to create documentation in the first place. Instead, we are hoping to use these tools to help inform us where our documentation has gaps or errors; we put a lot of effort into creating it, and we want it to be as accurate, complete, and straightforward to use as possible. Our documentation team and I are excited to scrutinise the results to discover what they reveal about your needs and how effectively we serve the information you want.

8 comments

Grzesiek

I asked about connecting a single module with two sensors to a Raspberry Pi Pico W.

The first model started by saying that it had no knowledge of the module but that it should work over I2C, and that I should ideally check the module's documentation.

The second model got straight to the point: how it works and how to connect it.

I don't know how there can be such a big discrepancy for a question about something so simple.

I'll keep testing 😉

Regards

Steve Brook

I asked two questions. 1) “can a raspberry pi run ai” (note I tried no capitals). It understood the question well and gave a very comprehensive response, giving examples of hardware and software. Score = impressive. 2) I asked if a Raspberry Pi could run engine management software. I was amazed that it completely understood my question. The AI came back with the fact that it did not have specific info, but it gave pointers on the I/O capabilities of the hardware etc. It then asked if I wanted it to look at broader knowledge than its manuals. It then posted another line of info, but it did remember that it had asked me a question. I replied simply “yes” and it went on to check broader knowledge. It came back with the limitations of software and hardware on the Pi family and that engine management software requires real-time responses. Overall I am very impressed with this AI bot. It has a scary understanding of how my mind works. Are you sure you don’t have a nerd tied up in a box with a keyboard, at Pi headquarters, pretending to be AI?

Raspberry Pi Staff Gordon Hollingworth — post author

We have a whole floor of nerds… But they’re not tied up!

James Hughes

Shhh. The reason that so much money is being pushed into AI, is actually to clone all the nerds and buy boxes and keyboards for them.

Otto Schäfer

Just asked how to install Nextcloud on the Pi. It gave me general guidance, which was quite good at the beginning but ended with the Apache configuration without details. My question was “How to install nextcloud on a Raspberry Pi5”

Michael

I asked for current camera controls and got back useful summaries from three different documents, all clearly referenced. It would be useful to have a “copy” button to save the answer easily for reference.

PeterF

I tried two questions, one to each machine to see how much help one could get when trying to use nginx with Nextcloud, instead of Apache. The questions were:

– how to install nextcloud on Raspberry Pi using nginx (Kapa)

– how to configure nginx for optimal use with nextcloud (InKeep)

The Kapa answer was quite sparse, and would indicate at first glance that there’s not much help available on configuring nginx, particularly for use with Nextcloud.

The InKeep answer finished with a question on whether I would like a general answer. Replying “yes” provided quite a long (compared with Kapa) list of helpful suggestions. I can’t check at present whether it’s comprehensive enough to get a working installation, as I’m away and can’t access my notes.

I do realise that there is some practical help for this combination outside Raspberry Pi documentation.

Thanks for letting me be a guinea pig!

PeterF

Paul Hutchinson

Yesterday I searched the docs, forum and web for some old information I knew existed about RaPi0W UARTs and Bluetooth configuration. I never found what I was looking for, but the AI dug it out for me this morning.

Yay! Thanks for this useful tool.