RP1: the silicon controlling Raspberry Pi 5 I/O, designed here at Raspberry Pi

Raspberry Pi 5 is the most complicated, and expensive, engineering program we’ve ever undertaken at Raspberry Pi, spanning over seven years, and costing on the order of $25 million. It’s also our first flagship product to make use of silicon designed in-house here at Raspberry Pi, in the form of the RP1 I/O controller.

RP1 is an incredibly intricate design, combining all of the outward-facing analogue interfaces required to build a Raspberry Pi, and the respective digital controllers, into a single 20mm² die, implemented on TSMC’s 40LP process. It provides MIPI camera input and display output, USB 2.0 and 3.0, analogue video output, and a Gigabit Ethernet MAC; and it provides enough 3V3-failsafe general-purpose I/O pins, and the various low-speed digital peripherals, to drive our standard 40-pin GPIO header. These components are connected via an AMBA AXI fabric to a PCI Express device controller, and thence to the BCM2712 application processor. Each component has its own clocking requirements and implementation constraints, which must be obeyed for it to function correctly.

Some initial documentation

Today we’re releasing some initial draft documentation around the RP1 silicon. Like the peripherals documentation for the Broadcom BCM2711, which powers Raspberry Pi 4, this release is aimed at people implementing drivers for Raspberry Pi 5. That means that, unlike our documentation around our microcontroller product RP2040, today’s release doesn’t tell you everything about the RP1 silicon that you might want to know; instead, it’s there to help you port an operating system and make use of the features of Raspberry Pi 5. While we are looking at exposing more of the features of RP1, both in software and with further documentation, that’s going to be something you might see a little later on.

Raspberry Pi’s silicon engineering team

Over the last decade, we’ve built an exceptional silicon engineering team, which was able to tackle the challenge of building RP1. But the same capabilities also allowed us to build our RP2040 microcontroller: RP1 and RP2040 share a certain amount of internal infrastructure, and both were built using our in-house SPIV chip-assembly toolchain. And having RP2040 in the market for over two years has served to pipe‑clean many of the processes required to build silicon at scale, making for a much smoother RP1 production ramp.

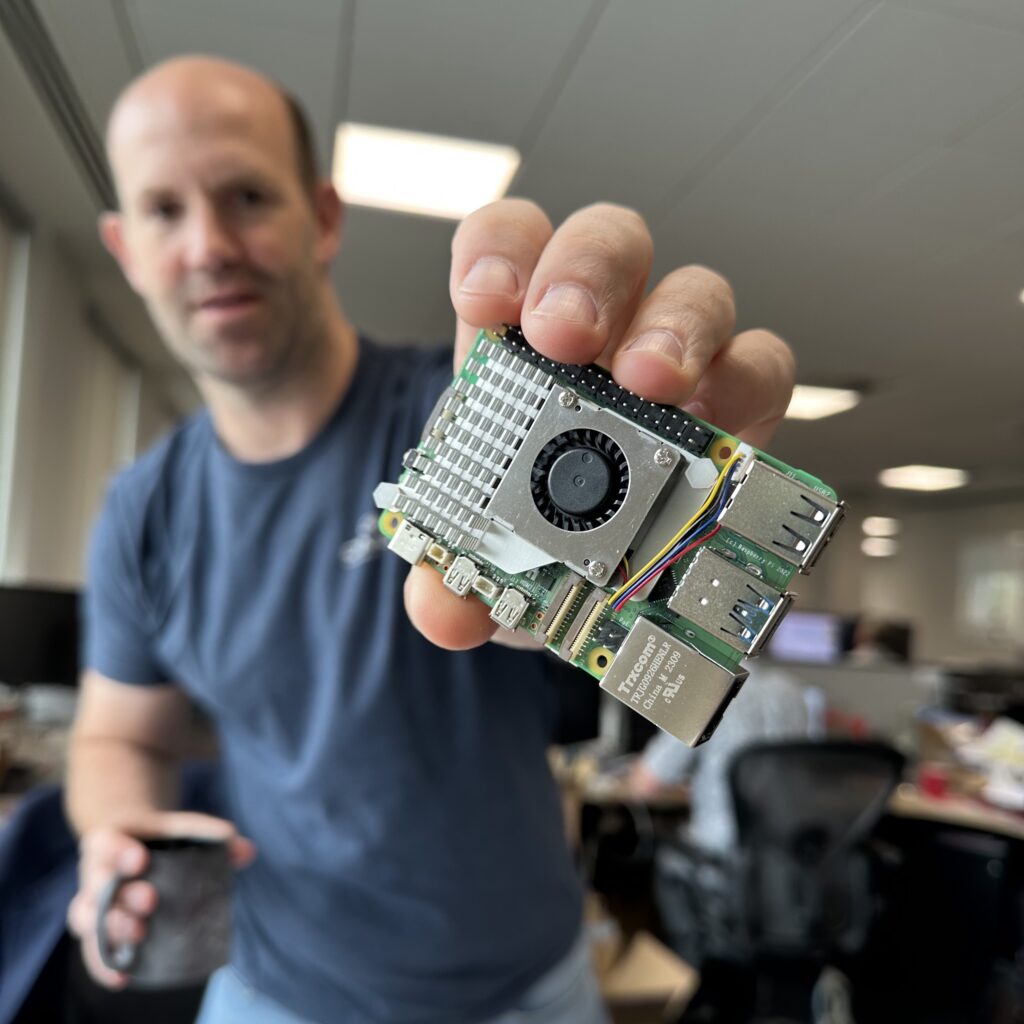

In the video below, our Chief Technology Officer (Hardware) James Adams and I are joined by Liam Fraser, ASIC team member and SPIV creator, to talk about RP1, and the development process that produced it.


The transcript below was machine-transcribed before being edited by a human. If you spot any bits of nonsense we’ve missed, let us know in the comments.

Eben 0:00: So we’ve already said that Raspberry Pi 5 is the longest-duration programme that we’ve ever run at Raspberry Pi and it stretches all the way back to 2015, right — it stretches all the way back to, kind of, Raspberry Pi 2 era, just after we launched Raspberry Pi 2. And probably the first thing that we started working on, on this platform, is RP1, the I/O controller. Well, so what is RP1? What does it do?

Liam 0:30: So RP1 takes all of the traditional Raspberry Pi peripherals: the GPIO, the MIPI interfaces, USB, Ethernet. What else…

Eben 0:40: Analogue television.

Liam 0:41: Yep, analogue television.

Eben 0:43: Analogue audio, somehow.

Liam 0:44: Yes, analogue audio as well. Yeah. SDIO.

Eben 0:49: And all of the low-speed — So you’ve got the GPIOs. And all of the low-speed peris that — the MUX options that sit behind that, the UARTs and SPIs and stuff.

James 0:57: I guess we don’t have analogue analogue audio; we have PWM output that could do analogue audio if you add the gubbins. But.

Liam 1:05: So we take all of those peripherals, and we’ve put them onto our own chip. And that allows us to keep that as a separate southbridge and then we can migrate our main system-on-chip down to smaller geometry processes.

Eben 1:19: Starting at 16. So 16 nanometres is the first instantiation of this. But in principle, you could then take that same digital design, that 2712 digital design, and you can move that down to progressively smaller nodes, without having to re-procure all of the analogue in the system, right. And so this is all of the I/O: this is, I guess, the Raspberry Pi-ness of a Raspberry Pi, packed into a single piece of silicon, and now remote. So how does that then talk to the main SoC, how does that talk to 2712?

PCIe between RP1 and 2712

Liam 1:49: So that’s over a PCI Express link. So all of the masters on RP1 can talk directly into the system memory on the main SoC. And similarly the peripherals in the RP1 address space are mapped into the main SoC as well.

Eben 2:06: So from software’s point of view, these things might as well be integrated; I mean it’s the nice thing about PCI Express, right, is from the software’s perspective, these may as well be integrated onto the main piece of silicon, obviously a somewhat higher-latency link than if they were on the core silicon. That’s a four-lane Gen 2 interface, right, which is four gigabits of payload per lane? 16 gigabits, two gigabytes a second: so we’ve got two gigabytes a second of read and write bandwidth between the two sides of the link. And then when you look on the board, I mean, it’s pretty obvious, you can see this from space pretty much when you look at it on the board, there’s this enormous motorway of lanes with their series capacitors in them that runs between the core SoC and RP1 which is in the top right-hand corner of the board.
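Eben's bandwidth figures can be checked with a little arithmetic. The sketch below assumes PCIe Gen 2 signalling at 5 GT/s per lane with 8b/10b line coding, so 8 of every 10 transmitted bits are payload, and it ignores packet-header and flow-control overhead, so real-world throughput will be somewhat lower. PCIe links are full duplex, so the result applies to each direction separately. The function names are illustrative only.

```python
# Back-of-envelope PCIe Gen 2 link bandwidth, matching the figures quoted above.
# Assumptions: 5 GT/s raw per lane, 8b/10b line coding, no protocol overhead.

GEN2_GT_PER_S = 5.0           # raw transfer rate per lane (gigatransfers/s)
ENCODING_EFFICIENCY = 8 / 10  # 8b/10b: 8 payload bits per 10 line bits


def link_payload_gbit(lanes: int) -> float:
    """Payload bandwidth in Gbit/s, per direction, for a Gen 2 link."""
    return lanes * GEN2_GT_PER_S * ENCODING_EFFICIENCY


def gbit_to_gbyte(gbit: float) -> float:
    """Convert gigabits to gigabytes."""
    return gbit / 8


per_lane = link_payload_gbit(1)  # four gigabits of payload per lane
rp1_link = link_payload_gbit(4)  # the four-lane link between 2712 and RP1
print(f"per lane: {per_lane:.1f} Gbit/s")
print(f"x4 link:  {rp1_link:.1f} Gbit/s = {gbit_to_gbyte(rp1_link):.1f} GB/s per direction")
```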

James 3:03: If you look at the board the way up the silkscreen is printed, which a lot of people tend to tell us is actually the wrong way up!

Eben 3:12: People think it’s the wrong way up because they — you naturally think your HDMI connectors come out the back, which is a reasonable historical artifact.

James 3:21: One day we may fix even that.

Eben 3:25: Fix it just by turning the silkscreen round!

James 3:28: Sorry, digression.

Eben 3:32: It provides all of this functionality; how’s that all stitched together? So inside of an RP1, inside what we internally called Project Y, how’s that all stitched together? What links the various bus masters? What links those to the PCI Express complex on the chip?

Liam 3:54: So we’ve got an AXI-based bus fabric, which I think is 128-bit data and more than 32-bit address, probably 40-bit address, something like that. So the PCI address bus comes in as a master and then can talk to any of the slaves and then similarly, all of the masters on RP1 can talk out over PCI Express, which then lets them access the DRAM on the main SoC.

USB topology for improved bandwidth

Eben 4:27: You’ve got a bunch of these components. We should talk about USB, actually, because USB is interesting. That’s somewhere where we’ve got a really interesting uplift in performance over — how did that work topologically on Raspberry Pi 4, and then how is that different and better on Raspberry Pi 5?

James 4:44: So on Raspberry Pi 4, we’ve got a single lane of Gen 2 PCI Express to this, ah, effectively a hub, so it’s PCI Express to USB 3, and then it’s hubbed out to two USB 2 ports and two…

Eben 4:59: So you’ve got four gigabits of upstream bandwidth. And then you’ve got a pair of, the way we use it, you have a pair of threes and a —

James 5:06: Two threes and two twos, but they’re all effectively behind a — Yeah. They’re all sharing that bandwidth. Whereas in Project Y —

Eben 5:13: So on Raspberry Pi 4, you can’t even run one of those USB 3s at peak rate, right? You can’t —

James 5:22: Not if you’re — well —

Eben 5:23: You’ve got five gigabits nominal payload, but you’ve only got four gigabits upstream. So you can’t even run — Now how’s that different on Project Y and Pi 5?

Liam 5:34: You’ve got two USB 3 controllers, I think each one has a USB 3 port and a USB 2 port. But because of the bandwidth of the PCIe link, we should be able to run both USB 3 ports at full bandwidth.

Eben 5:50: So if we’d gone for that hubbed architecture, we probably still would have been limited to five gigabits, we’d have gone from four to five. In practice, because we’ve got two separate controllers, and we’ve got lots of upstream bandwidth, we now genuinely do have — we can genuinely get the five out of each of the ports separately; which, of course, if you want to use this to build a RAID array or something, that’s a much better solution.
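To make the topological difference concrete, here is an idealised fair-share model. It assumes the 5 Gbit/s nominal SuperSpeed rate quoted above, the 4 Gbit/s (Raspberry Pi 4) and 16 Gbit/s (Raspberry Pi 5) upstream figures from the transcript, and equal sharing between ports that are running flat out. It ignores USB protocol overhead and any other RP1 traffic on the link, so treat the numbers as ceilings rather than measurements. `Topology` and `per_port_gbit` are hypothetical names.

```python
from dataclasses import dataclass

USB3_NOMINAL_GBIT = 5.0  # nominal SuperSpeed rate, as quoted in the transcript


@dataclass
class Topology:
    name: str
    upstream_gbit: float  # payload bandwidth of the PCIe link feeding the USB ports
    usb3_ports: int

    def per_port_gbit(self, active_ports: int) -> float:
        """Idealised per-port ceiling when `active_ports` USB 3 ports run flat out.

        Each active port gets an equal share of the upstream link, capped at
        the port's own nominal rate.
        """
        if not 1 <= active_ports <= self.usb3_ports:
            raise ValueError("active_ports out of range")
        return min(USB3_NOMINAL_GBIT, self.upstream_gbit / active_ports)


pi4 = Topology("Raspberry Pi 4 (x1 Gen 2, hubbed)", upstream_gbit=4.0, usb3_ports=2)
pi5 = Topology("Raspberry Pi 5 (x4 Gen 2 to RP1)", upstream_gbit=16.0, usb3_ports=2)

for topo in (pi4, pi5):
    for n in (1, 2):
        print(f"{topo.name}: {n} active -> {topo.per_port_gbit(n):.1f} Gbit/s per port")
```

Under these assumptions a single Raspberry Pi 4 port is already capped below its nominal rate by the upstream link, while on Raspberry Pi 5 both ports can reach it at once.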

James 6:14: It’s also worth saying that we’ve had this controller in the lab for a long time. And we’ve done a lot of work on making sure that the software works and any bugs in the controller have been ironed out. So actually, there are fewer workarounds for this controller than any other silicon I think we’ve ever had on any Raspberry Pi, right. So it’s the least quirky.

Eben 6:38: Jonathan spent an enormous amount of time looking — particularly I think some of the corner cases you find with UAS devices, which are, you know, they’re fast, and they’re a little bit subtle in how they behave, you know, we really are pretty confident we’ve squeezed all the balancing —

James 6:53: We’ve had a long time to massage it into doing things properly.

Eben 6:59: We’ve had this design in the lab for three-ish years, roughly? When did B0 come back? It’s another pandemic-era thing, isn’t it?

James 7:08: Was it 2020—?… Gosh.

Eben 7:11: Jonathan in there in the lab in 2020!

James 7:16: It’s all a bit of a blur. I think COVID’s kind of like taken this two-year window out of everything —

Eben 7:20: Well there’s this weird thing with Raspberry Pi 4, where it launched in 2019. So it’s demonstrably now a four-year-old product, and yet I somehow think the combination of the pandemic and then the supply chain challenges, I sort of somehow feel like Raspberry Pi 4 is a new product — it sort of feels like a product that’s only really just getting into its stride now.

James 7:37: We launched RP2040 in 2021!

Eben 7:39: Yeah, it’s incredible!

James 7:40: Actually, it must’ve been 2020 when we had B stepping silicon.

Eben 7:45: And so we will have been able to look at the USB 3 behaviour since then; we’ve obviously had it in FPGA for slightly longer than that — the nice thing about having all of these designs in FPGA — we should talk a little bit about prototyping, actually — so having all these things in FPGA has meant that when you have a — it gives you that godlike perspective that you get when you can put instrumentation onto any net. Okay, it might take you overnight to rebuild your FPGA image, but you really can get in there. And instrument everything.

Liam 8:13: It also allows us to get proof-of-concept Linux drivers for everything, you know. And you can do all-up bandwidth testing. To the best of your ability anyway.

Better MIPIs

Eben 8:23: So we’ve got better USB. I think we have better MIPI now, we have slightly different MIPI. But we have better MIPIs, and what does the MIPI subsystem look like on the platform? This is the camera and display.

Liam 8:39: So there’s two MIPI PHYs and each MIPI PHY, so the physical interface, can be either a camera or display.

Eben 8:48: So these are transceiver PHYs — they can transmit or receive frames.

Liam 8:51: Yeah. And then we can feed that from the system memory over PCIe. And then we’ve got the ISP frontend…

Eben 9:06: Ah, we should talk about the ISP frontend. I think we’re going to do a whole session, we’re going to do a whole video on ISP. But it’s interesting that the ISP… this is, Raspberry Pi 5 is, the first time we’ve shipped the new-generation ISP; all previous Raspberry Pis have used the classic VideoCore 4 image sensor pipeline. But that comes in two components, doesn’t it? It comes as a frontend component which lives on Project Y and deals with the pixels, doing some work on the pixels literally as they come from the camera; and then you have the larger ISP backend that lives on the main SoC.

Liam 9:36: And then on the display side, there’s a DMA that, again, takes the video from the main memory. So is the difference that there’s more bandwidth on the CSI and DSI?

Eben 9:51: I think where we’ve come from — we’ve come from a world in which certainly if you’re a Raspberry Pi, if you’re just the Raspberry Pi flagship product, you have two two-lane interfaces: you have a two-lane camera and a two-lane display. Compute Module, the actual underlying silicon, or all the previous underlying silicon, has had four MIPI interfaces. It had a two-lane and a four-lane camera, and a two-lane and a four-lane display. What we have now is two four-lane transceivers. So strictly you have less on this platform. Strictly, you know, you can only connect a total of two cameras and displays to the platform, but you can connect two four-lane cameras or two four-lane displays. And all of that functionality is brought out on the flagship product; it’s not only available to you in the Compute Module world, it’s available to you on the flagship. So that’s MIPI world, this is additional bus masters in the system.

GPIO

What else is there? GPIO world: how does that work? So we have GPIOs, but you also have low-speed peripherals, and muxing. How does that work? How is it similar to what we’ve had before? And how is it different?

Liam 11:02: So I think we have at least the same number of peripherals that we’ve had on previous platforms.

Eben 11:09: In particular, on Pi 4, which was the one that grew all of the new peripherals. And then they’re in the same MUX positions. So if I have become used to having a UART on a particular pair of GPIOs, I still have a UART on that pair. But they are different. They’re potentially different peripherals. Right, they’re potentially different…

Liam 11:31: Different types of —

Eben 11:32: Different types of peripherals — the UARTs are the — I think we established the UARTs are the same, when we talked last, we established the UARTs are the same.

James 11:38: PL011 Arm UARTs. And everything else is different, mostly from Synopsys: I2C, SPI… I2S is Synopsys as well, and then we have a transmitter and a receiver, rather than a transceiver as in Pi 4.

Eben 11:55: SDIO, as well.

James 11:57: SDIO, yep.

Eben 12:01: So that’s kind of the bones of it. But we have analogue television out, we actually have VGA out, don’t we, at the chip level?

James 12:08: At the chip level —

Eben 12:09: Not at the board level?

James 12:10: Not at the board level. Actually, the PHY, you know, each channel of… so you’ve got a three-channel PHY on there, largely because, sort of IP size-wise, it made little difference to have a single or a triple DAC, right. So it can do VGA; we didn’t have the board area on Pi 5 to bring it out. And also the DAC’s quite power-hungry. So, you know, there are constraints there, but at the chip level, yeah, you can do VGA with that.

Eben 12:38: You can do VGA, you can do composite — can you do S-video?

James 12:44: I…er… it’s a good question!

Eben 12:44: Can you do S-video with two lanes?

James 12:47: Can’t see…

Eben 12:47: Interesting question.

James 12:47: Yeah, have we have we ever tested it?

Eben 12:49: I’m not sure, but if we haven’t tested it, then —

James 12:51: Probably doesn’t work then!

Eben 12:51: — Alan Morgan’s [a Broadcom engineer] law applies. And then we can do wacky old-style television as well, which is the key thing I’m looking forward to with Raspberry Pi 5, seeing the first person to get a 1950s telly working with Pi 5. So you’ve got that, you got DPI, I guess, so you can do the old-style parallel video interface.

Liam 13:13: Did I mention Ethernet?

Eben 13:14: We didn’t mention Ethernet. What’s integrated on RP1 and what’s not on RP1?

Liam 13:21: So we’ve got the Ethernet MAC but not the PHY. So the Ethernet’s brought out to an RGMII interface, which then connects to an on-board Ethernet PHY.

Eben 13:35: And this is a fairly similar architecture to Raspberry Pi 4, except that in that case, the MAC was in the Broadcom device, but there was still an external — in fact exactly the same external — PHY, [BCM]54213. Cool. So that’s the overall structure of the design. How is it — we should probably actually — there’s an interesting aspect, isn’t there, that you talked about those streams of traffic that go to and from the design; there are two very different sorts of traffic, aren’t there?

Traffic prioritisation

There’s real-time and non-real-time traffic. And how do they interact with each other, and what do we have to…? So by real-time traffic, I mean camera and display data: you know, if I don’t get my pixel for the display in time, my display gets corrupted; if I am not able to unload a pixel from the camera before my FIFOs fill up inside the design, then I get a corrupted image. So those are real-time: those things have to be done on time. And then you have things like USB. Now, of course USB traffic can be real-time as well if it, say, comes from a webcam, but most USB traffic isn’t. Most USB traffic is best-effort; certainly the high-bandwidth USB traffic to UAS devices is best-effort. So there’s the challenge of making those work together, because in the end the data travels together over a shared link. What have we had to do at the chip level, and then what have we had to do at the system level, on the other side of that link, to make that work?

Liam 15:05: Yeah. Yeah, so the main bus fabric supports priority. So, for example, your camera would be highest priority, because if your data doesn’t get there, then you lose your image. And then something like USB can be much lower priority depending on the traffic. And that can get passed all the way through the fabric, all the way through the PCIe link, and all the way through to the other system, you know, the main system-on-chip. So the main system-on-chip can get a version of that priority information, to the point where I think PCIe can sort of reorder the way it does things, you know; USB can have something queued up, but then if a higher-priority message or a request from a camera comes in, then that will get handled first.

Eben 15:54: So you — so your — and then down at the main SoC that can have a look at the data that’s coming in. And it can say: oh, this is USB — Because of course, the same thing happens down on the main SoC, right, you’ve got HDMI data there, which is real-time. And so if it goes: oh, well, I’ve got HDMI, I need some pixels to put out on HDMI, which is on the main SoC, and I’ve got a request from a USB drive, I can say: well, USB, you hold off while I go get my pixels that I need to scan out, my real-time pixels that I need to scan out; or even conceivably hold off the USB for the Arm. Because the Arm, while it’s not real-time, Arm is not desperately latency-tolerant. You know, if you load a value from memory on the Arm, you’re probably going to need it pretty quickly, otherwise your processor will stop making progress. That creates a problem, though, right? There’s a notion of head-of-queue blocking that we’ve had to deal with. What is that?
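As a rough illustration of the arbitration decision Eben describes, here is a strict-priority arbiter in which a later, higher-priority request overtakes earlier, lower-priority ones. The three priority levels and the class name are hypothetical, and the real fabric and memory-controller arbitration is certainly more sophisticated than this. Note that an arbiter like this can only reorder requests that have already reached it; it cannot help a request that is stuck further upstream behind other traffic in a FIFO.

```python
import heapq
import itertools
from typing import List, Optional, Tuple

# Hypothetical priority levels (higher = more urgent). The actual encoding
# used by the RP1 fabric and BCM2712 is not described in this article.
PRIO_BEST_EFFORT = 0  # e.g. bulk USB / UAS traffic
PRIO_CPU = 1          # latency-sensitive but not real-time (the Arm)
PRIO_REALTIME = 2     # camera and display pixels


class PriorityArbiter:
    """Strict-priority arbiter: always grants the most urgent pending
    request, using arrival order to break ties between equal priorities."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        self._seq = itertools.count()

    def submit(self, source: str, priority: int) -> None:
        # heapq is a min-heap, so store the negated priority; the sequence
        # number keeps equal-priority requests in FIFO order.
        heapq.heappush(self._heap, (-priority, next(self._seq), source))

    def grant(self) -> Optional[str]:
        """Pop and return the next request to service, or None if idle."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


arb = PriorityArbiter()
arb.submit("usb-read-1", PRIO_BEST_EFFORT)
arb.submit("usb-read-2", PRIO_BEST_EFFORT)
arb.submit("camera-write", PRIO_REALTIME)  # arrives after the USB requests
arb.submit("arm-load", PRIO_CPU)
print([arb.grant() for _ in range(4)])
# ['camera-write', 'arm-load', 'usb-read-1', 'usb-read-2']
```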

James 16:52: Who’s gonna answer that?

Liam 16:54: He was looking at you!

Eben 16:55: I’m looking at you!

James 16:56: Oh god!

Eben 16:58: If you’re not careful, if you don’t answer it then I’m gonna give my management-level understanding of it, so you’d better answer it!

Dealing with head-of-queue blocking

James 17:03: So…

Eben 17:05: What’s the risk?

James 17:07: This is the risk, basically, that something’s getting in, and effectively, it’s got the ability to pump a bunch of data because it’s been given priority, but that data’s effectively blocking the higher-priority stuff coming in. Either your message — well, there’s two ways, I guess — either your message, your priority message, doesn’t get through, because it’s blocked by somebody else’s, or likewise, the data, right? If you open the gate, and you give it a bunch of window to give its data, and it either stalls or it doesn’t shift the data in quick enough, then you can actually stall the thing that’s higher-priority.

Eben 17:40: So you have this risk, don’t you, that RP1 has no real-time stuff to do, but it has a bunch of non-real-time stuff to do. And so all of the buffers, all the FIFOs in the system fill up with USB traffic, and then the camera wakes up and goes: I need to unload this. And there’s a huge queue between your camera and the DRAM on the main SoC that’s full of low-priority data. And all of those components are looking back, going: well, I’ve only got low-priority stuff, I don’t need anything doing; there’s this guy at the back of the queue, waving his hand, saying: hello, help! I’m running out of FIFO space.

And of course the solution to this, the generic solution to this — and of course, it’s a thing that happens inside the main SoC as well — the generic solution to this is priority forwarding. So if I’m standing in a queue behind you, and I’m high-priority and you’re low-priority, I tap you on the shoulder and I say: you’re high-priority now. And then the next cycle, you tap the next guy on the shoulder and say: you’re high-priority now. And sooner or later that priority, probably at one queue element per clock, that priority makes its way down through the system. And before you know it, the guy who’s standing at the front of the queue, who is nominally low-priority traffic because he’s USB, is now high-priority and he’s high-priority because he needs to get out of the way of the real high-priority guy who’s serialised some way behind him in the queue.
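The queue problem and the shoulder-tapping fix can be reproduced in a toy cycle-by-cycle model. The sketch below assumes strict priority at the point where our FIFO merges with other traffic, and, following Eben's description, forwarding that moves priority one queue position per clock. A FIFO holding best-effort USB requests with a camera request at the back competes against another source that always has medium-priority traffic waiting. All names and priority values are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Entry:
    name: str
    priority: int                       # the request's own priority
    effective: int = field(init=False)  # priority after forwarding

    def __post_init__(self) -> None:
        self.effective = self.priority


def forward_one_step(fifo: "deque[Entry]") -> None:
    """One clock of priority forwarding: each entry inherits the effective
    priority of the entry directly behind it, if that is higher. Working
    from a snapshot means priority moves at most one position per clock."""
    snapshot = [e.effective for e in fifo]
    for i in range(len(fifo) - 1):
        fifo[i].effective = max(fifo[i].effective, snapshot[i + 1])


def cycles_until_served(target: str, entries: List[Tuple[str, int]],
                        competing_priority: int, forwarding: bool,
                        max_cycles: int = 100) -> Optional[int]:
    """Simulate our FIFO against a competitor that always has traffic at
    `competing_priority`. Our FIFO wins a cycle only if its head's effective
    priority is strictly higher. Returns the cycle on which `target` leaves
    the FIFO, or None if it starves within `max_cycles`."""
    fifo = deque(Entry(name, prio) for name, prio in entries)
    for cycle in range(1, max_cycles + 1):
        if forwarding:
            forward_one_step(fifo)
        if fifo and fifo[0].effective > competing_priority:
            if fifo.popleft().name == target:
                return cycle
    return None


queue = [("usb-0", 0), ("usb-1", 0), ("usb-2", 0), ("camera", 2)]
print(cycles_until_served("camera", queue, competing_priority=1, forwarding=False))  # None
print(cycles_until_served("camera", queue, competing_priority=1, forwarding=True))   # 6
```

Without forwarding, the head of the FIFO never outranks the competing traffic, so the camera request starves. With forwarding, the camera's priority takes three clocks to reach the head, after which the USB requests ahead of it drain and the camera request leaves on cycle 6.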

Now that obviously works well inside the system, and of course the fabric does priority forwarding. It’s a bit more subtle, isn’t it, when you go over PCI Express, because there isn’t really a notion of priority forwarding. And we actually have custom vendor messages, don’t we? So PCI Express has, as well as a data payload message, the concept of a vendor signalling message. And we define a vendor signalling message which allows us to tell the main SoC: I’ve got panic (we call it panic, you know, very high-priority panic). I’ve got panic over here, you should panic now. And then that causes its end to panic, to elevate its priority.

And then obviously, it’s another piece of co-design, worked on with Broadcom, so that the PCI Express root complex inside 2712 is aware of these vendor messages and is able to turn its panic state on and off. And that’s effectively the very long tapping-the-guy-on-the-shoulder. It’s tapping the guy who’s in the queue at the other end of the PCI Express link on the shoulder and saying: yeah, panic now, please. So it’s kind of cute. And again, it’s the sort of thing where you’ve got to have the architectural awareness, the experience, to know that this class of issues is going to be a problem. And then you’ve got to have the time required to spec it properly, and then, having specced it properly, to check that it actually works.
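A minimal sketch of the panic handshake follows, assuming a simple watermark scheme on the device side. RP1 watches a real-time FIFO, sends a "panic on" message when the FIFO passes a high watermark and a "panic off" message once it drains below a low watermark, and the host-side root complex raises the priority of inbound traffic while panic is asserted. The watermarks, the hysteresis, the priority values and the message payloads are all assumptions for illustration; the transcript only says that vendor messages turn the panic state on and off.

```python
from enum import Enum
from typing import List, Optional


class PanicMsg(Enum):
    # Hypothetical payloads carried in a PCIe vendor-defined message; the
    # real RP1/BCM2712 message format is not described in this article.
    ASSERT = "panic-on"
    DEASSERT = "panic-off"


class DevicePanicMonitor:
    """Device (RP1) side: watches the fill level of a real-time FIFO and
    decides when to send panic messages. The gap between the watermarks
    gives hysteresis, so the link is not flooded with messages while the
    fill level hovers around a single threshold."""

    def __init__(self, high: int, low: int) -> None:
        if low >= high:
            raise ValueError("low watermark must be below high watermark")
        self.high, self.low = high, low
        self.panicking = False

    def update(self, fill_level: int) -> Optional[PanicMsg]:
        if not self.panicking and fill_level >= self.high:
            self.panicking = True
            return PanicMsg.ASSERT
        if self.panicking and fill_level <= self.low:
            self.panicking = False
            return PanicMsg.DEASSERT
        return None


class RootComplex:
    """Host (2712) side: records the panic state and uses it to raise the
    priority of traffic arriving from the device."""

    def __init__(self, normal_priority: int, panic_priority: int) -> None:
        self.panic = False
        self.normal_priority = normal_priority
        self.panic_priority = panic_priority

    def on_message(self, msg: PanicMsg) -> None:
        self.panic = msg is PanicMsg.ASSERT

    def inbound_priority(self) -> int:
        return self.panic_priority if self.panic else self.normal_priority


def simulate(fill_levels: List[int]) -> List[int]:
    """Return the host-side priority seen after each FIFO fill sample."""
    rc = RootComplex(normal_priority=0, panic_priority=2)
    mon = DevicePanicMonitor(high=12, low=4)
    trace = []
    for fill in fill_levels:
        msg = mon.update(fill)
        if msg is not None:
            rc.on_message(msg)  # in hardware, this crosses the PCIe link
        trace.append(rc.inbound_priority())
    return trace


print(simulate([2, 8, 13, 15, 10, 5, 3, 1]))  # [0, 0, 2, 2, 2, 2, 0, 0]
```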

James 20:17: And these things are complicated: they have — there’s lots of dynamics in these systems. So even when you’ve thought through a problem, you get it on the FPGA or the PiMulator and you spot something else, right. So, again, it sort of speaks to the design, the time and the effort that’s been taken in all of this stuff that, you know, it required some iteration to get it right.

Prototyping the platform

Eben 20:39: What did what did prototyping this platform look like?

Liam 20:43: So we used proFPGA systems, which allowed us to run — ooh, I don’t know how fast it ran actually.

James 20:52: About 60 megahertz, or am I just being optimistic?

Liam 20:55: No, I think you might be right, actually.

Eben 20:58: It’s about a third of… what’s the rated clock speed?

James 20:59: Uh, 200.

Eben 21:00: 200. So it’s about a third of — which is good enough, actually, right. So you have the digital elements of the design running at about a third the speed they would in the final chip?

Liam 21:09: Yeah. So yeah, we had PCIe, USB 3, Ethernet, all the GPIOs. We couldn’t do — I don’t think we could do every peripheral in a full system, but you’d have one USB 3 controller, for example. But you could decide to have two USB controllers and not put in DMA or something like that. But yeah, that’s allowed us to sort of develop our Linux drivers alongside the system. And, yeah, it’s allowed us to sort of work out the areas — in particular, you know, seeing the priority. Getting the priorities right, and proving that that works, you know. You can sort of have a camera demo, and then you give the camera the worst priority, or the lowest priority, and then the camera stops working, and then you fix it again. Yeah, so it lets you prove all of that stuff in the real world.

Eben 22:02: And we proved it up against a number of different host devices as well, didn’t we? So we proved it against 2711, so we’ve had a kind of a “Raspberry Pi 4 but with Project Y” world. We even had x86.

Liam 22:17: Yes!

Eben 22:17: x86, well, what did an x86 Raspberry Pi look like?

Liam 22:21: It looked good. Except, well, except the software architecture’s a bit different. So it actually becomes a little bit harder to share the drivers, because x86 doesn’t support device tree, whereas the Arm platforms do support device tree, which is how we tend to describe what our platform looks like.

Eben 22:41: But we were able to connect proFPGA — so, emulated RP1 in proFPGA — to a desktop PC via a PCI Express card. Did we build PCI Express cards with silicon on?

James 22:58: Yeah, we did.

Eben 22:58: Yeah. So all of this work was done in FPGA. We’ve then had three major tapeouts: we had an A0, B0, C0 tapeout. Each of those, certainly the B0 and C0 tapeouts, we then generated — obviously we had development boards, we had PCI Express cards, and that was kind of fun, right? You actually really do have a PC except it feels, in its I/O world, a lot like a Raspberry Pi, and it has Raspberry Pi — it’s a PC but with a Raspberry Pi GPIO, a PC but with a Raspberry Pi USB or Raspberry Pi MIPI subsystem. It’s kind of unusual to have a PC with MIPI, you know, with MIPI interfaces on it.

Liam 23:38: The bring-up board is PCIe card-shaped, right?

James 23:42: It’s a big PCIe card with a socket or — You can solder the chip on as well.

Eben 23:48: And then finally, we had two generations of Raspberry Pi — there was a, I remember a Raspberry Pi 5 with the right-hand side cut off so for a little while we had a 2712 board that stopped, kind of —

James 24:01: At an x4 PCI Express connector —

Eben 24:03: An x4 PCI Express connector where everything on the right-hand side of the connectors, and —

James 24:08: So, Pi 5 prototypes really started with that, with a massive great proto FPGA — sorry, not FPGA, you know, bring-up board for Project Y, RP1 in it, and then we iterated it again and put the chip down.

Eben 24:25: You did your usual… explained to me that it wasn’t gonna fit.

James 24:29: Well I do that until it fits!

Eben 24:31: Yeah, and I’m used to that kind of thing. I’m used to it now, but I can report I found it alarming! One day it actually fits.

James 24:37: Yeah. Wow, it does fit, yeah.

Eben 24:38: I remember being shocked when Compute Module 3 was one millimetre taller than the JEDEC… there’s a JEDEC standard, this is DDR2, DDR2 SODIMM?

James 24:48: DDR2 SO — yeah, the memory DIMM.

Eben 24:52: Yeah, the… CM1 is standards-compliant, and CM3 is one millimetre taller, and I just remember the shock when a thing that you told me wouldn’t fit actually didn’t fit.

James 25:06: No. Sorry.

Eben 25:06: But it fit, it fit in the end anyway. And as we say, it does look beautiful. And it does speak to the co-design.

James 25:13: I mean, it is a thing, right, when you’re doing board layout, you kind of don’t know it’s going to fit almost until right at the end. And I think… So I’m naturally sort of conservative and pessimistic. I will always say it doesn’t fit until it fits!

“Substrate fun”

Eben 25:28: We had some substrate fun as well, didn’t we? We iterated the sub — It’s quite a clever substrate. We iterated it a lot to get —

James 25:32: Well, so, you know, RP1 is designed to be a low-cost chip, right? So it’s on 40[nm], which we know and love; we haven’t put any extraneous stuff in it; all the stuff in there is, you know, been designed for the platform, as we do. The substrate is a two-layer substrate.

Eben 25:49: So — just in terms of the package —

James 25:51: Yeah, so the package — it’s a BGA package.

Eben 25:53: But by BGA — this is a grid of solder balls on the bottom of…

James 25:57: Grid of… yeah, it’s 12 by 12. 12 by 12 millimetres, with, I can’t remember what the ball grid number is. But that’s on two… So, substrates are like little tiny PCBs: they can have a number of layers, and you can then route the signals from the die all the way down to the pins or the balls. This is a two-layer substrate, which makes it quite cheap, and we had to… and it’s a wire-bond chip, so the pads of the chip are around the edges, and the little wires come out and bond down onto the top of the PCB substrate and then get routed to the balls. So we spent quite a lot of time designing the die so that you could bring those pins, uh, those wires, basically straight out and down to a ball. That sort of makes the substrate easier; well, not easier, but cheaper. And we had to iterate that once, actually, because it’s just a hard thing to do: we had some marginality in one of the power supplies, based on the way it was done. So we had to rejig…

Eben 26:57: You had to go and fatten up the column.

James 26:58: Yeah, we had to fatten it up and change the layout to reduce the inductance. But again, it’s another thing that, with effort, we’ve managed to make work, and it’s reduced the cost of that chip. Even though most people would say, oh, you probably want a four-layer substrate for that, we’ve kind of…

Eben 27:13: And it’s a pretty, it ends up being a pretty cost-effective chip, you know, it has all of the Raspberry Pi-ness in there. And it’s not really materially more expensive than any of the previous I/O controllers. Our previous I/O controllers have done one or two things: have done USB hubbing, or they’ve done USB hubbing and Ethernet. This has all of the Raspberry Pi-ness. And really, it doesn’t — the cost impact on this, I mean, it’s about the same.

James 27:36: That two-layer substrate makes the ballout sort of a little bit weird, but it’s fine, because we could iterate it on the Pi and just say, okay, it works now. It looks a bit odd, looks slightly less neat than you might get from a, sort of, one that’s been designed first and then designed the other way around. But it works fine. Yeah.

Eben 27:55: It’s a nice, it’s a lovely platform, right? Yeah. So as I say it’s gone through three major iterations, you know, over a long time. And of course, it’s the reason why we have a chip team. And it also, I guess, we should talk a little bit about tooling. It sort of justified the creation of some of the tooling, that then made the RP2 device, RP2040, easier to build.

Tooling

Liam 28:21: Yep, yep. So RP1 is sort of built from the same scripting architecture that was used to make RP2040. Generally, anything that we’re going to repeat, whether that’s repeat across multiple chips, or just repeat on the same chip, we tend to sort of script up, so that you can build it from a specification. So all of our clock generators are built up from a list of — you know — you specify a list of clocks that you need, what size the dividers should be, what features they have, and then that will just stamp out ten — well, it’s five on RP2040, and then on Project Y, or RP1, I think it’s 20 or 30. Or there’s lots of…
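To give a flavour of the "build it from a specification" approach, here is a small generator that stamps out one parameterised clock-generator instance per entry in a clock list. The SPIV toolchain itself is not described in detail here, so the `ClockSpec` fields, the `clk_gen` module and its parameters are all hypothetical; the point is only that the hardware description is derived mechanically from a list rather than written out by hand for each clock.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ClockSpec:
    name: str
    int_bits: int             # width of the integer divider field
    frac_bits: int = 0        # width of the fractional divider field (0 = integer only)
    glitchless: bool = False  # whether the generator needs a glitchless source mux


def stamp_out(specs: List[ClockSpec]) -> List[str]:
    """Emit one Verilog-style instance of a hypothetical `clk_gen` module
    per clock in the spec, rejecting duplicate clock names."""
    seen = set()
    lines = []
    for s in specs:
        if s.name in seen:
            raise ValueError(f"duplicate clock name: {s.name}")
        seen.add(s.name)
        lines.append(
            f"clk_gen #(.INT_BITS({s.int_bits}), .FRAC_BITS({s.frac_bits}), "
            f".GLITCHLESS({int(s.glitchless)})) u_clk_{s.name} (/* ports */);"
        )
    return lines


# Illustrative spec only: these names and widths are not RP1's real clocks.
specs = [
    ClockSpec("sys", int_bits=8, glitchless=True),
    ClockSpec("audio", int_bits=16, frac_bits=16),  # fussy clocks want fine ratios
    ClockSpec("uart", int_bits=8),
]
for line in stamp_out(specs):
    print(line)
```

Adding a thirtieth clock then means adding one line to the list, which is what makes the approach scale from a handful of generators to dozens.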

James 29:04: I think it’s about 30 actually.
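Liam’s description of stamping out clock generators from a list can be sketched in a few lines of Python. This is a hypothetical illustration only: SPIV itself isn’t public, so the `ClockSpec` fields, the `clk_gen` module name and its `INT_BITS`/`FRAC_BITS`/`GLITCHLESS` parameters are all invented here. It shows the shape of the idea: a list of clock requirements goes in, and one parameterised Verilog instance per clock comes out.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ClockSpec:
    """One row of a hypothetical clock list (field names are assumptions)."""
    name: str
    int_bits: int             # width of the integer part of the divider
    frac_bits: int = 0        # width of the fractional part; 0 means integer-only
    glitchless: bool = False  # whether the clock needs a glitchless source mux


def stamp_clock(spec: ClockSpec) -> str:
    """Emit one instance of a hypothetical parameterised `clk_gen` Verilog module."""
    return (
        f"clk_gen #(.INT_BITS({spec.int_bits}), .FRAC_BITS({spec.frac_bits}), "
        f".GLITCHLESS({int(spec.glitchless)})) "
        f"u_clk_{spec.name} (.clk_out(clk_{spec.name}));"
    )


def stamp_all(specs: List[ClockSpec]) -> str:
    """Stamp out every clock in the list, rejecting duplicate names."""
    names = [s.name for s in specs]
    if len(names) != len(set(names)):
        raise ValueError("duplicate clock name in spec list")
    return "\n".join(stamp_clock(s) for s in specs)


if __name__ == "__main__":
    clocks = [
        ClockSpec("sys", int_bits=8),
        ClockSpec("audio", int_bits=16, frac_bits=16, glitchless=True),
    ]
    print(stamp_all(clocks))
```

With a generator like this, adding a clock is a one-line change to the list. That is roughly why 30 clocks need not be much harder to maintain than five.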

Eben 29:05: It’s really taught me how much of the complexity, the power and clocks complexity, of the classic architecture is actually in the analogue elements rather than in the fast digital elements of the design.

Liam 29:19: Yeah. And the reason we’ve got so many clocks tends to be — audio and video are quite fussy about the clocks that they need. I2S is as well. And then — so we do that with clocks; we do that with resets; we do that with power-on state machine, which takes things out of reset in a particular sequence and waits for each thing to be done; we do it with the GPIO muxing. So the GPIO —

Eben 29:47: Is that done that way on Project — on RP1 as well as RP2040?

Liam 29:51: Yep. So it comes from a spreadsheet. Basically the spreadsheet that’s in the datasheet is the spec that goes into the tool, and then it does the right thing. Yeah, there’s some other bits as well, all the general wiring. So you say, I want my chip to have two USB controllers, two SDIOs, etc, etc. And then it will go and instantiate all of that for you. Oh — it wires up the bus fabric, spits out the address map…

Eben 30:17: Spits out some of the headers and stuff for software.

Liam 30:20: Yep. All the documentation, software headers…
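The flow Liam and Eben describe, where a spreadsheet and a list of block instances go in and an address map plus software headers come out, might look something like the Python sketch below. It is a hypothetical illustration: the CSV layout, the pin-to-function entries, the base address, the 16 KiB slot alignment and the register-window sizes are all made up, and are not RP1’s real mux table or address map.

```python
import csv
import io
from typing import Dict, List, Tuple

# Invented GPIO function-select table, as it might be exported from a spreadsheet:
# one row per pin, one column per function-select slot (empty cell = unused).
GPIO_CSV = """gpio,a0,a1,a2
0,uart0_tx,i2c0_sda,
1,uart0_rx,i2c0_scl,pwm0_a
"""

# Invented instance list: block type -> (instance count, register window in bytes).
INSTANCES = {"usb": (2, 0x8000), "sdio": (2, 0x4000)}


def parse_mux(csv_text: str) -> Dict[int, List[str]]:
    """Map each GPIO number to its per-slot function names."""
    reader = csv.DictReader(io.StringIO(csv_text))
    slots = [c for c in reader.fieldnames if c != "gpio"]
    return {int(row["gpio"]): [row[s] or "" for s in slots] for row in reader}


def build_address_map(instances: Dict[str, Tuple[int, int]],
                      base: int, align: int = 0x4000) -> List[Tuple[str, int, int]]:
    """Give each instance its own aligned window, packed upwards from `base`."""
    amap = []
    addr = base
    for block, (count, size) in instances.items():
        slot = -(-size // align) * align  # round the window up to whole slots
        for i in range(count):
            amap.append((f"{block}{i}", addr, size))
            addr += slot
    return amap


def emit_header(amap: List[Tuple[str, int, int]], mux: Dict[int, List[str]]) -> str:
    """Render both tables as C #defines for software to include."""
    lines = ["/* Generated file: edit the spec, not this header. */"]
    for name, addr, size in amap:
        lines.append(f"#define {name.upper()}_BASE 0x{addr:08x}u")
        lines.append(f"#define {name.upper()}_SIZE 0x{size:x}u")
    for gpio in sorted(mux):
        for sel, func in enumerate(mux[gpio]):
            if func:  # skip empty spreadsheet cells
                lines.append(f"#define GPIO{gpio}_FUNCSEL_{func.upper()} {sel}")
    return "\n".join(lines)


if __name__ == "__main__":
    amap = build_address_map(INSTANCES, base=0x40000000)  # arbitrary example base
    print(emit_header(amap, parse_mux(GPIO_CSV)))
```

Because the address map and the header are generated from the same data, they can’t drift apart, which is presumably much of the appeal of producing documentation and headers from a single specification.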

Eben 30:23: It’s cute. And I guess we’ve all had some experience in ASIC development before this, and it does feel like an extra level of performance. All organisations have some level of design automation at that point, but somehow we seem to have managed to take it to a higher level than we’ve seen before. Which is kind of fun, right? And it helps us have a small, efficient team. Of course, small teams are challenging, right? Because there’s a lot of work to do, and you only have a small number of people to do it. But small teams are good because you can have enormously high calibre: it’s easier to recruit a small, high-individual-calibre team than a large one. And also the amount of time you spend talking to each other, when you’re a small team, is more than linearly better than in a large team. And a lot of this work you did with Terry, right?

Liam 31:16: Yeah, yeah. So I was, I guess, Terry’s understudy, but yeah, Terry is the guy who’s written most of these, he would describe them as highly parameterisable components. So yeah, and that’s a clock generator, the I/O muxing, the pad wrappers, the reset slice, we would call it, the power slice. Yeah, we call all these things, like, clock slices, etc. But yeah, the idea is you sort of stack them together to make the block that you want. It also means that, you know, we can verify that as a standalone item, and then call that known-good, and then stamp them out as we need.

Eben 31:57: And it’s this idea, I think, that we’ve come to, of having a team which is not quite starving; you have a team where you have slightly more than the minimum amount of resource you need. So you have little enough resource that there’s a strong incentive to look for design automation opportunities, but a large enough team that there is somebody available to do the design automation, right. Situations where teams are starving don’t generate innovation; situations where teams are under some resource constraint generate innovation.

James 32:29: I think we’ve got enough seasoned hands who are fed up of typing lots of stuff that we’ve typed in ten times already that having this tool has just made everyone’s life so, so much easier.
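Liam also mentions a power-on state machine that takes blocks out of reset in a particular sequence and waits for each one to be done. On the chip that is a hardware state machine; the Python below is only a behavioural model of the sequencing idea, with hypothetical block names and a stand-in `FakeBlock` class in place of real reset-done signals.

```python
from typing import Callable, List, Tuple

# One sequencing step: (block name, release-reset action, "done" status check).
Step = Tuple[str, Callable[[], None], Callable[[], bool]]


def power_on_sequence(steps: List[Step], max_polls: int = 1000) -> List[str]:
    """Release each block from reset in order, waiting for it to report done."""
    released = []
    for name, release, is_done in steps:
        release()
        for _ in range(max_polls):
            if is_done():
                break
        else:  # loop ran out without is_done() ever returning True
            raise TimeoutError(f"{name} never reported done after reset release")
        released.append(name)
    return released


class FakeBlock:
    """Stand-in for a hardware block that needs a few polls to settle after reset."""

    def __init__(self, polls_needed: int):
        self.polls_needed = polls_needed
        self.in_reset = True
        self.polls = 0

    def release(self) -> None:
        self.in_reset = False

    def is_done(self) -> bool:
        if self.in_reset:
            return False
        self.polls += 1
        return self.polls >= self.polls_needed


if __name__ == "__main__":
    blocks = {"pll": FakeBlock(5), "clocks": FakeBlock(2), "fabric": FakeBlock(1)}
    order = [(n, b.release, b.is_done) for n, b in blocks.items()]
    print(power_on_sequence(order))  # ['pll', 'clocks', 'fabric']
```

The timeout stands in for whatever a real sequencer does when a block fails to come up; in this model it simply raises rather than hanging.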

RP1 and RP2040

Eben 32:43: And it made RP2040... we should probably talk about how RP2040 fits into this flow, right? RP2040 was kind of multiplexed onto the same design team, really as a way of (at the time it felt like) sweating some extra value out of our investment in ASIC engineering. It was feasible, I think, because of a lot of this design automation: even there you have AHB fabric that’s automatically assembled as well. So you have this level of automation. From my point of view, I think it’s done another helpful thing, which is it’s meant that RP1 is not our first production chip. RP2040 was an interesting chip to get into production because, of course, it went into production during a pandemic, it went into production during a supply chain crisis. But it taught us a lot about operations; obviously, Jon Matthews joined us to run the operations element of the organisation. I think it would be quite scary, right, if a chip which was in our flagship big Raspberry Pi product was also the first piece of silicon that we did.

Liam 33:53: Yeah, I mean, the production test for RP2040 is relatively simple compared to RP1 because there’s so much analogue on RP1: the tester has to be more complicated, and there’s lots more to test.

Testing

Eben 34:04: But interestingly, we’re using the same tester, aren’t we, we’re still using J750 for it. We use Teradyne J750. But with a sophisticated load board. I think that’s another thing that we learnt, another thing that we really learnt — well, I think probably Jon knew or suspected, and I think that we learnt over the course of RP2040 — was that you can get away with a very cost-effective tester, and then the load board that adapts the chip onto the tester, you can put some of the analogue components in there.

James 34:31: I think we are using J750Ex, which is the slightly shinier one, but it’s still a low-cost tester. Which is great.

Eben 34:41: I forget what it was we were planning to use, but it was hellish, wasn’t it? It was a very, very complicated tester before.

James 34:48: I can’t remember.

Eben 34:48: What’re they called, those super — UltraFLEX, we were gonna use UltraFLEX, but we’ve been able to use J750.

Liam 34:53: And how many boards can we test in one go?

James 34:57: Quad site.

Eben 34:58: This was quad site.

James 34:59: So for —

Eben 35:00: RP2040’s octal site —

James 35:00: RP2040’s eight —

Eben 35:00: Yeah it’s octal site.

James 35:00: And then we do four for this one. Yeah.

Eben 35:04: Which is still very wide; very few of the chips that I’ve worked on in a previous life had even quad site testers. But it’s fun. It’s fun and good to have another chip in the wild. Good to explain to people what the ASIC team has been doing. Good! Excellent. Well anyway, that’s RP1. It probably comes to, of that $25 million, it’s probably substantially more than half the cost of developing Raspberry Pi 5. But it’s been fun to do, and I suspect it will probably be with us for a little while.

36 comments

Dom

A quick look at the documentation reveals some interesting features such as the noise-shaping PWM and debounced interrupts on the GPIO (among many others). What are the chances of making this device available separately? It would make a good companion to the Compute Module 4, especially if you need multiple Ethernet interfaces, additional GPIO or a way of providing USB3 ports without having to try to source VL805s through (how can I put this politely?) “unofficial” channels.

Raspberry Pi Staff Alasdair Allan

There aren’t any current plans to sell RP1 separately.

Edward

That’s a real shame, I’d love to have that interfacing capability in my products. Can imagine I’d be ordering at least a few tens of thousands a year.

Tong

While it seems straightforward to sell the RP1 separately, it would actually add a lot of support workload for the team. It makes sense to just keep the chip for in-house use.

Hans

Would really like to see the RP1 capabilities on a desktop computer via PCIe. Please think about that (even though the capabilities might be limited).

Bean Genie

Is the spelling of the acronym SPIV deliberate? According to Wikipedia, Spiv is British slang for black marketeer.

Interestingly, nowhere in the transcript does the term SPIV reappear. So I’m curious what it stands for and if it’s open-source or at least free to download?

Curious reference in the transcript: “Eben 12:49: I’m not sure, but if we haven’t tested it, then probably doesn’t; Alan Moore’s law applies.” Are you talking about the Watchmen or electric sheep already?

Raspberry Pi Staff Helen Lynn

Having searched for this phrase to try and make sense of it while editing the transcription, I too would love to know the answer to this. Possibly Eben was thinking about somebody else’s law, or perhaps he didn’t say what it sounded to me like he said. I will have to try to remember this important outstanding query for when I next get a chance to buttonhole him :-)

Randy Connelie

Perhaps he is referencing Amara’s law?

>We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.

In context of the conversation: Eben could be implying that they didn’t think that feature was important, but the users might do amazing things with it. (The “underestimate in the long run” portion of Amara’s Law.)

AndrewS

SPIV is part of our internal tooling, and stands for “Scripted PI Verilog”.

Jose

You already had me at accelerated AES encryption.

Max

Interesting inside looks at the development of the RP1, thank you very much!

I’ve missed this so far: what is the PiMulator?

Bersis

Are you going to sell it to the public like RP2040? If you do, some people would appreciate it.

Thanks

Raspberry Pi Staff Alasdair Allan

There are no current plans to sell RP1 on its own.

Robert Heffernan

Well, make plans. Firstly, from the perspective of repair: if someone manages to spike some GPIO pins, then parts would be available to cheaply replace the RP1 and repair their RP5.

Then you’ve got the guys who want to design it into their own products.

Plus, it’s better for the RP Foundation because of more money from IC sales.

Adam Kerr

I doubt there’s a big market of people who are capable of replacing a BGA chip, who would bother on a sub $100 device.

CJay

If I had a Pi5 and had managed to cook the RP1 chip, then you can be sure I’d like the chance to have a go at replacing it.

The Pi is headed for the bin if I can’t replace it and BGA is far from impossible to replace with relatively simple tools and a bit of ‘knack’ which a lot of home tech enthusiasts have these days.

What have I got to lose?

Danny

Love it! Keep it up team! So excited to get my hands on the Raspi 5 and add it to my collection of over a dozen Raspi 4b boards lol.

Leonard Peris

Eben 5:50: misspelling of “genuinely”.

Raspberry Pi Staff Helen Lynn

Good spot. Corrected.

Gavin McIntosh

x86 host for RP1?

That could be fun for some: make sure the VGA pins are broken out, then run FreeDOS?

Neil Jones

Will the RP1 be available for sale as a standalone device?

tovli

Any guidance for systems that relied on WiringPi for access to the GPIO? I would love to add a Pi 5 to my robot, but I use WiringPi for SPI and I2C access.

Tony Finch

Interesting that the RP1 has a pair of Cortex-M3 whereas the RP2 has Cortex-M0+. Is the RP2 that desperate for smaller cores? Or are there some extra capabilities in -M3 that RP1 needs?

Nicko

Table 2 in the data sheet lists several blocks that are not documented in this data sheet. Notably, it includes a PIO block at offset 0x40178000, between TICKS and SDIO0. Is this a PIO block like in the RP2040 (please, please, please!) or is this something else far less exciting?

Raspberry Pi Staff Alasdair Allan

As Eben said in the post, at least at launch RP1 is going to be minimally documented. We’re looking at perhaps exposing some PIO capabilities eventually, but there isn’t going to be anything written down at launch, or for some time afterward. That said, the PIO in RP1 is not quite the PIO you’re used to in RP2040, and doesn’t work in quite the same way.

Raspberry Pi Staff PhilE

You should read Luke’s comment on the Pi 5 launch post: https://www.raspberrypi.com/news/introducing-raspberry-pi-5/#comment-1594112

I can tell you from experience that the register interface to the PIO block is slightly different (beyond just address map changes) so there is no binary compatibility with RP2040, but in terms of PIO functionality it is half an RP2040. However, the fact that one M3 core is reserved and SRAM is shared between the cores means making PIO available to users while maintaining the standard RP1 functionality is going to need a significant amount of software effort, so don’t hold your breath.

Stefania Canella

RP1 … 3V3-failsafe general-purpose I/O pins …

VERY NICE!!!

I had a couple of Raspberry Pi 3 boards “burned” by my students handling GPIO with different devices without much attention…

Leo C Steinberg

The Ethernet documentation doesn’t say so explicitly, but it references the variant of the Cadence IP documentation that supports AVB. Will the RP1 be able to support TSN?

Tovli

Does the hardware I2C fully support clock stretching?

Trent Piepho

If the RP1 chip were available, it seems like one could make a PCIe expansion card or an M.2 form-factor module containing it, as a sort of I/O expander for a desktop. It might also be possible via USB with some sort of USB-to-PCIe bridge. That would be a useful way to add things like SPI, I2C and UARTs, which don’t come with PCs.

But it would also be useful for those developing applications that will run on a RPi5. The RPi5 itself isn’t all that fast compared to a modern workstation. With an RP1 add-on, one could develop and run on a real workstation, with the currently popular bloated IDEs, but the software could also use a real RP1 chip during development, the same one it will use when running on actual RPi5 hardware.

William

Is the Pi 5 with the RP1 compatible with Linux software written using the APIs and device paths provided to the Pi 4 and earlier?

Matthew Miller

What’s the status of upstream kernel support for the RP1 (and everything connected through it, for that matter)?

Chrisberry

When can we expect a 16GB RAM Raspberry Pi 5 or a Pi 500?

I’d insta-buy the Pi5 if it had 16 gigs of RAM.

Jaack

16 GB boards may be announced, but product availability remains the frustrating reality. Pi 4s remain out of stock even today! When a Pi 5 will become available is anyone’s guess, along with corporate greed pricing and availability policies.

Raspberry Pi Staff Liz Upton

Pi 4s are not out of stock at all, and have not been for many months. http://www.rpilocator.com

Jaack

Too bad that all Pi 5 boards sell out at minute ONE of every announcement. Pi 4s are still sold out at many authorized sellers, even though the new generation of boards has just been announced. I wish Pi makers would announce boards only when stock is available! Too bad they don’t listen to individual people buying one or more boards.

The “Where’s the beef?” jingle fits these continuing supply shortages. Very disappointed ☹️.
