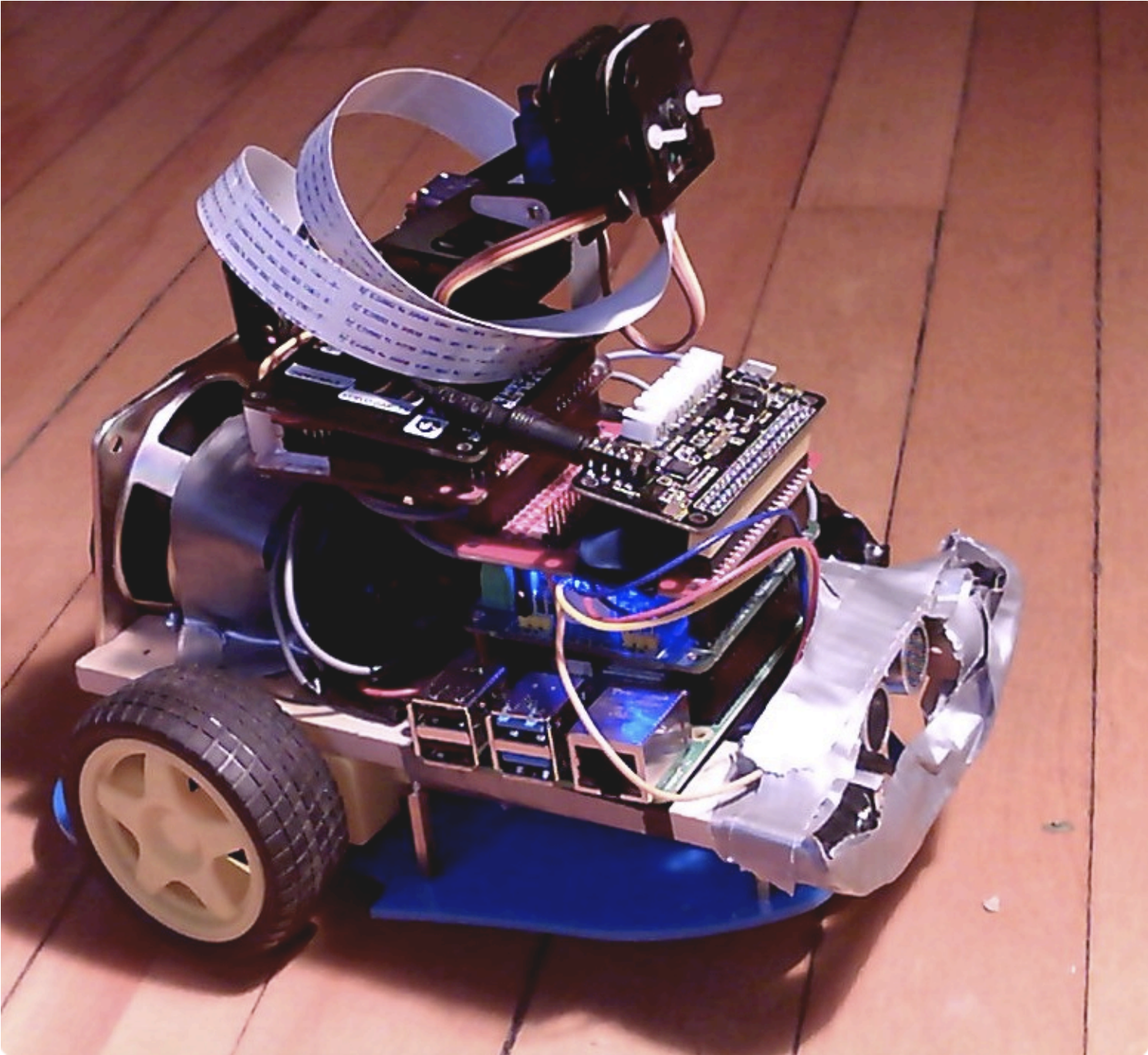

Nandu’s lockdown Raspberry Pi robot project

Nandu Vadakkath was inspired by a line-following robot, built (literally) entirely from salvaged materials, that could wait patiently and purchase beer for its maker in Tamil Nadu, India. So he set about making his own, with the goal of making it capable of slightly more sophisticated tasks.

Hardware

- Raspberry Pi 4 Model B 8GB

- ReSpeaker 2-Mics HAT

- PiJuice HAT

- Motor controller board

- Raspberry Pi camera and Pimoroni Pan-Tilt HAT

- Ultrasonic distance sensor

- Infrared proximity detector

- A battery pack for the motors

Software

Nandu had ambitious plans for his robot: navigation, speech and listening, recognition, and much more were on the list of things he wanted it to do. To make it do everything he wanted, he incorporated a lot of software, including:

- Python 3

- virtualenv, a tool for creating isolated virtual Python environments

- the OpenCV open source computer vision library

- the spaCy open source natural language processing library

- the TensorFlow open source machine learning platform

- Haar cascade algorithms for object detection

- a ResNet neural network with the COCO dataset for object detection

- DeepSpeech, an open source speech-to-text engine

- eSpeak NG, an open source speech synthesiser

- the MySQL database service

So how did Nandu go about trying to make the robot do some of the things on his wishlist?

Context and intents engine

The engine uses spaCy to analyse sentences, classify all the elements it identifies, and store all this information in a MySQL database. When the robot encounters a sentence with a series of possible corresponding actions, it weighs them to see what the most likely context is, based on sentences it has previously encountered.
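To give a feel for the weighing step, here is a minimal sketch. It is not Nandu's code: to stay self-contained it swaps spaCy for a plain whitespace tokeniser and MySQL for an in-memory SQLite database, and the `ContextStore` class, its table layout, and the context names are all hypothetical. Each candidate context is scored by how often the words of a new sentence were previously seen alongside it.

```python
import sqlite3
from collections import Counter


def tokenize(sentence):
    """Lowercase and split on whitespace (a stand-in for spaCy analysis)."""
    words = (w.strip(".,!?").lower() for w in sentence.split())
    return [w for w in words if w]


class ContextStore:
    """Hypothetical store of (token, context) counts; SQLite stands in for MySQL."""

    def __init__(self):
        self.db = sqlite3.connect(":memory:")
        self.db.execute(
            "CREATE TABLE seen (token TEXT, context TEXT, count INTEGER, "
            "PRIMARY KEY (token, context))"
        )

    def learn(self, sentence, context):
        """Record that each word of the sentence appeared in this context."""
        for token in tokenize(sentence):
            self.db.execute(
                "INSERT OR IGNORE INTO seen VALUES (?, ?, 0)", (token, context)
            )
            self.db.execute(
                "UPDATE seen SET count = count + 1 WHERE token = ? AND context = ?",
                (token, context),
            )

    def rank(self, sentence):
        """Return (context, score) pairs, most likely context first."""
        scores = Counter()
        for token in tokenize(sentence):
            rows = self.db.execute(
                "SELECT context, count FROM seen WHERE token = ?", (token,)
            )
            for context, count in rows:
                scores[context] += count
        return scores.most_common()


store = ContextStore()
store.learn("take a photo of us", "camera")
store.learn("take a group photo", "camera")
store.learn("take me to the kitchen", "navigation")
print(store.rank("take a photo"))
```

A real engine would presumably weigh richer features than raw word counts (parts of speech, entities, and so on), but the shape is the same: store what has been seen, then score the candidates against it.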

Getting to know you

The robot has been trained to follow Nandu around, but it can get to know other people too. When it meets a new person, it takes a series of photos and processes them in the background, so it learns to remember them.
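The "processes them in the background" part could look something like the sketch below, which hands photos to a worker thread so the robot can carry on with other things. The `EnrolmentWorker` class is hypothetical, and `process_photo` is a placeholder for whatever face-processing step the robot really uses; the article doesn't say how that works.

```python
import queue
import threading


class EnrolmentWorker:
    """Hypothetical background worker that turns photos into stored features."""

    def __init__(self, process_photo):
        self._process = process_photo      # placeholder for real face processing
        self._jobs = queue.Queue()
        self._lock = threading.Lock()
        self.known = {}                    # person's name -> list of features
        threading.Thread(target=self._run, daemon=True).start()

    def enrol(self, name, photos):
        """Queue a new person's photos and return immediately."""
        for photo in photos:
            self._jobs.put((name, photo))

    def wait(self):
        """Block until every queued photo has been handled."""
        self._jobs.join()

    def _run(self):
        while True:
            name, photo = self._jobs.get()
            try:
                features = self._process(photo)
                with self._lock:
                    self.known.setdefault(name, []).append(features)
            except Exception:
                pass                       # skip a photo that fails to process
            finally:
                self._jobs.task_done()


# Stand-in "processing": the length of the image bytes.
worker = EnrolmentWorker(process_photo=len)
worker.enrol("Asha", [b"abc", b"de"])
worker.wait()
print(worker.known)
```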

Speech

Nandu didn’t like the thought of a basic robotic voice, so he searched high and low until he came across the MBROLA UK English voice. Have a listen in the videos above!

Object and people detection

The robot has an excellent group photo function: it looks for a person, calculates the distance between the top of their head and the top of the frame, then tilts the camera until this distance is about 60 pixels. This is a lot more effort than some human photographers put into getting all of everyone’s heads into the frame.
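The tilt-until-60-pixels idea is a small feedback loop, sketched below under a few assumptions. The function names, the tolerance, the gain, and the sign convention (positive means tilt up) are all made up for illustration; `read_head_top` and `tilt_by` stand in for the robot's person detector and the Pan-Tilt HAT servo call.

```python
def tilt_step(head_top_y, target_gap=60, tolerance=5, gain=0.05, max_step=5.0):
    """Return a tilt change in degrees (assumed: positive tilts the camera up).

    head_top_y is the pixel row of the top of the head, counted from the top
    of the frame, so it is also the gap we want to bring to target_gap.
    """
    error = target_gap - head_top_y
    if abs(error) <= tolerance:
        return 0.0                          # close enough to 60 pixels
    step = gain * error                     # proportional correction
    return max(-max_step, min(max_step, step))


def frame_shot(read_head_top, tilt_by, max_iterations=50):
    """Nudge the camera until the gap is near the target; True on success."""
    for _ in range(max_iterations):
        step = tilt_step(read_head_top())
        if step == 0.0:
            return True
        tilt_by(step)
    return False


# Fake camera for demonstration: tilting up by 1 degree moves the head
# 10 pixels further down the frame.
state = {"y": 10.0}


def fake_tilt(degrees):
    state["y"] += 10 * degrees


print(frame_shot(lambda: state["y"], fake_tilt), state["y"])
```

Clamping each step keeps the camera from lurching when the head starts far from the target, and the tolerance band stops it hunting back and forth around exactly 60 pixels.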

Nandu has created a YouTube channel for his robot companion, so be sure to keep up with its progress!

6 comments

Michael

Nicely done! Amazing what can be done on a budget with some time and care. I want one. :)

Claude Pageau

Is there a github repo for some of the custom software?

Nandu Vadakkath

Hi Claude

I am working on creating that. The issue, as usual, is having the time. Also, I would like to have it available as a proper framework that people can use without getting too frustrated. Will need a bit of clean up. Hopefully before the new year! I will post back here when it is ready.

Ronak

Hi Nandu,

Amazing work! Is the code open source and available on GitHub? I look forward to seeing what you build next. Cheers :)

A. McD

Interested in your choice of DeepSpeech plus spaCy. Did you consider other on-board ASR (e.g. PocketSphinx)? Did you consider other speech-to-function interpreters (e.g. Snowboy)? (I see that Snowboy is being phased out this month.)

Would also like to see a list of your implemented actions/functions, with the stimulus/invocation channel (sensors, ASR, ASR+NLU, time, etc. )

You really packaged a lot into a single Raspberry Pi based bot. Not easy to tie it all together. Great job!

Now that you have it working, do you feel a language model and NLU front end to the robot is better than a grammar-based ASR with its domain restricted to the available/programmed robot functions?

Joe

Cool project! I’m working on something similar… Did you run into any problems with the PiJuice HAT supplying enough current to simultaneously power the Raspberry Pi 4, motor controller board (with other batteries attached), and Pan-Tilt HAT? Or did everything work fine? Thanks!
