BearID: Face recognition for brown bears

BearID is the kind of engaging project that everyone loves: using face-recognition technology, it helps work out how many individual bears live in an area and monitors their movement and health. Working with brown bears (Ursus arctos) that the researchers already know allows the project team to develop and refine animal face-recognition techniques that can then be applied to other species.

ARM principal engineer Ed Miller is BearID’s director and software developer. He runs the non-profit in his spare time along with technology partner Mary Nguyen and conservation director and field researcher Dr Melanie Clapham. A flexible working regime at ARM allows Ed free time for this personal passion project, which aims to improve wildlife monitoring by testing software in the field and developing guidelines for its use. In particular, BearID aims to help the development of face recognition for other threatened wildlife, aiding conservation efforts worldwide.

Ed points out that scientists are under increasing pressure to draw larger conclusions from their research, but have fewer resources available. A new technique for monitoring wild populations of brown bears and asking a wider variety of applied research questions is therefore likely to be very valuable, especially if it can be replicated with camera traps monitoring other species.

Inspiration for the project came when Ed and Mary were working on deep learning and watching mesmerising footage of brown bears at Brooks Falls in Katmai National Park in Alaska. They hit upon the idea of using AI to detect the bears’ faces and ears. These are the least changeable aspects of a bear, given their fluctuating weight, coat colour and markings throughout the year. Focusing on these two characteristics would also limit the amount of data processing required in making a positive ID.

They were also convinced, having read about Google FaceNet, that facial recognition for bears was the most viable approach. The Dlib machine learning toolkit provided a working example, based on identifying dogs’ faces.

“It’s a fun little application, an example of how the application could work. It was interesting to us because the first part of machine learning oftentimes is having to label all of your images to train your network. Normally we would have had to take a bunch of images of bears and manually draw boxes around their faces, locate where their eyes and nose are, draw points on for those and so on for thousands of images. This ‘dog hipsteriser’, because it was trained on dogs, which have similar facial features to bears, gave us a huge head start.”
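To give a flavour of the FaceNet-style idea mentioned above, here is a minimal Python sketch. It assumes each detected face has already been turned into a numeric embedding vector by a trained network, and it simply looks for the closest known bear. The function names, the toy three-number embeddings and the 0.6 distance threshold are all illustrative assumptions, not BearID’s actual code or settings.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def identify(embedding, gallery, threshold=0.6):
    """Return (bear_id, distance) for the closest known bear.

    `gallery` maps a bear ID to a list of reference embeddings.
    If nothing is within `threshold` (an arbitrary illustrative value),
    the ID is None, meaning "probably a bear we have not seen before".
    """
    best_id, best_dist = None, math.inf
    for bear_id, references in gallery.items():
        for ref in references:
            d = euclidean(embedding, ref)
            if d < best_dist:
                best_id, best_dist = bear_id, d
    if best_dist > threshold:
        return None, best_dist
    return best_id, best_dist


# Toy data: real embeddings would have many more dimensions.
gallery = {
    "bear_a": [[0.0, 0.0, 1.0], [0.1, 0.0, 0.9]],
    "bear_b": [[1.0, 0.0, 0.0]],
}
match, dist = identify([0.05, 0.0, 0.95], gallery)
unknown, _ = identify([0.0, 1.0, 0.0], gallery)
```

Here `match` comes out as the hypothetical `bear_a`, while `unknown` is `None` because no reference embedding is close enough. In practice the threshold would need to be tuned on labelled images.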

The project began back in 2016 when Ed and Mary started exploring possibilities for deep learning. Canada-based research partner Melanie Clapham joined the next year and started using BearID in the field. The first test site is at Katmai National Park in Alaska, where Explore.org “broadcasts live views of bears every summer while they’re fishing along this waterfall in Alaska”.

Their 150-strong data set includes a second set of bears in the Knight Inlet area of British Columbia, Canada, where most of Melanie’s research is based. As well as identifying bear numbers in the wild and monitoring population growth or depletion, habitat and land management issues come into play.

“Dr Clapham has a whole network of camera traps set up to monitor bear movement and look at how the bears are using different areas. Good salmon years or bad salmon years may affect how they’re using the land.” Some of the territory belongs to First Nations groups, with whom Dr Clapham has established a useful cooperative approach to understanding bear numbers, locations and information that informs legislation and land management.

Ed and Mary are working to expand the application to cover all eight species of bear. Ed says, “a lot of them are bears under human care, bears in zoos, bears in rescue sanctuaries. For some of these species, those are the easiest ways to get photos because they’re threatened so it’s easier to get them that way.” Progress is encouraging. “We did our first pass through the full identification algorithm for the Andean bears, which one of the researchers that we’re working with has, so we are starting to expand out into other bears.”

Brown bears rarely come into contact with humans in remote Alaska or British Columbia, so human-wildlife conflict is not very likely there, but bears do come into close proximity with humans in other regions. In South America, conflict with humans is a big concern. Villages monitor Andean bear movements and have an early warning system. “For polar bears coming through your village, you probably just want to stay home and not be outside,” suggests Ed.

Technology challenges

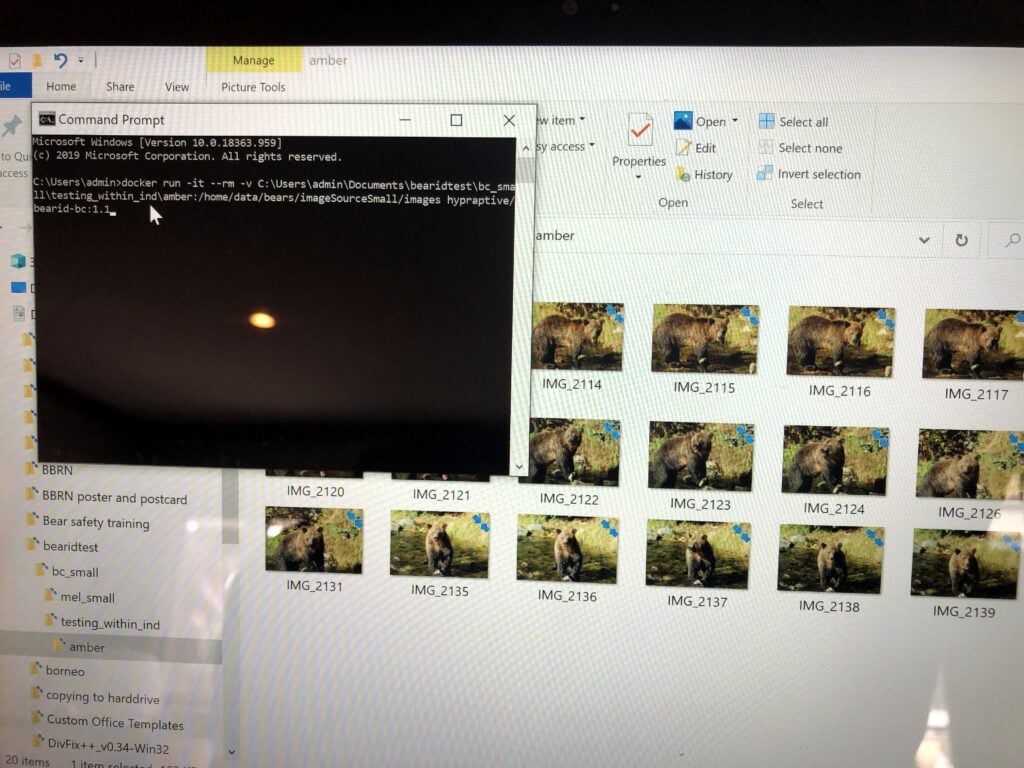

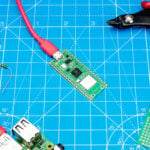

BearID runs in a cloud service, “mostly Microsoft Azure”, since the team has a grant from Microsoft AI For Earth and credits they can use. Most of the machine learning training is done on “big GPU-based machines”, but the field use is all based around ARM systems. Laptops and tablets were used at first, but Ed is working on a Raspberry Pi 4B version they “can use in the field to plug in a memory card, it grabs all the images, stores them on some local memory, does all the analysis and then you can provide the results back onto the memory card”.
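The memory-card workflow Ed describes could look something like the following Python sketch: copy the images from the card to local storage, run an analysis step on each copy, then write a results file back to the card. Everything here is an assumption for illustration, including the `process_card` function, the `bearid_results.csv` file name and the `analyse` callback, which stands in for the real detection and identification step.

```python
import csv
import shutil
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def process_card(card_dir, work_dir, analyse, results_name="bearid_results.csv"):
    """Copy images off the card, analyse each copy, write results to the card.

    `analyse` is a placeholder callable that takes the path of the local
    copy and returns a short result string. `work_dir` is assumed to be
    outside `card_dir`.
    """
    card_dir, work_dir = Path(card_dir), Path(work_dir)
    work_dir.mkdir(parents=True, exist_ok=True)

    rows = []
    for src in sorted(card_dir.rglob("*")):
        if not src.is_file() or src.suffix.lower() not in IMAGE_SUFFIXES:
            continue  # skip folders and non-image files
        rel = src.relative_to(card_dir)
        local = work_dir / rel
        local.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, local)  # keep a local copy, preserving timestamps
        rows.append((rel.as_posix(), analyse(local)))

    # Put the results back on the card, next to the images.
    results_path = card_dir / results_name
    with results_path.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["image", "result"])
        writer.writerows(rows)
    return results_path
```

A call such as `process_card("/media/card", "/home/pi/bearid", my_model)` would then leave a CSV on the card; both paths and `my_model` are made-up examples. Keeping the analysis behind a simple callback means the same loop would work whether the model runs on the Pi’s CPU or on an accelerator.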

“Power is always a bit of a concern, but Melanie wants to have something she can run and do analysis while she’s in the field so she doesn’t have to wait to get [the results] back to the university.” Ed’s therefore looking into options that may provide an accelerator for Raspberry Pi or “another ARM-based platform that has machine learning acceleration built into it.”

Looking to the future, “the ultimate goal is for the camera itself to detect and identify the bear and then be able to provide those alerts or the population movement or that kind of information by some low power transmission protocol to a base station and you can actually get real-time movement information,” says Ed.

For more details of the BearID Project see: bearresearch.org
