This massive nose sniffs things then prints a description of the smell

Listen, we get tagged in some questionable stuff on social media, but until I saw this post by Adnan Aga, we hadn’t yet been tagged in a photo of what looks like a receipt being pulled from the nostril of a giant nose sculpture.

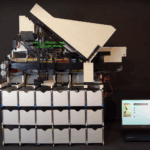

Weirder still, that’s not a receipt. Look more closely and you’ll see that it’s a detailed description of how something smells. That’s because this giant nose is a Raspberry Pi-powered smell-detecting machine.

OK… what?

Adnose is an interactive sculpture combining image recognition and machine learning. It was 3D printed in separate pieces before being assembled and then finished to give it a sculptural look. Adnan modelled it after his own nose!

Hardware inside the nose

- Raspberry Pi 4

- Raspberry Pi camera wearing a fisheye lens

- Distance sensor

- Thermal printer

- Speaker

How does it work?

When a visitor places something under the nose, a distance sensor connected to the Raspberry Pi detects the object and triggers the camera to take a picture. A Python script then feeds the photo into Google Images to work out what the object is.
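To make the trigger step concrete, here is a minimal sketch of how a stream of distance readings could be turned into "take a picture now" events. It is not Adnan's code: the `triggers` function and the 15 cm threshold are assumptions for illustration, and the readings are plain numbers rather than values from any particular sensor library.

```python
from typing import Iterable, Iterator

# Assumed threshold; the article does not say how close an object must be.
TRIGGER_CM = 15.0


def triggers(readings: Iterable[float], threshold_cm: float = TRIGGER_CM) -> Iterator[int]:
    """Yield the index of each reading at which an object newly appears.

    Fires once when the distance drops below the threshold, then stays
    quiet until the object has been taken away again, so one object
    produces one photo rather than a burst of them.
    """
    present = False
    for index, distance_cm in enumerate(readings):
        near = distance_cm < threshold_cm
        if near and not present:
            yield index
        present = near
```

Firing only when an object newly appears means something left sitting under the nose gets sniffed once, not on every sensor reading.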

Next, Google Images’ verdict on what the object is gets sent to the GPT-4 large language model, which composes a description, in a “poetic fashion”, of what the object probably smells like. A thermal printer connected to the Raspberry Pi 4 then, ahem, delivers it through the nostril of the sculpture.

The speaker also reads the description aloud with the help of a text-to-speech generator.
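The flow from photo to printout can be sketched in a few lines of Python. This is a rough outline under stated assumptions, not the project's actual script: `identify`, `describe`, `print_line` and `speak` are hypothetical callables standing in for the image lookup, the GPT-4 request, the thermal printer and the text-to-speech output, and the 32-character receipt width and the prompt wording are guesses.

```python
import textwrap
from typing import Callable, List

# Assumed characters per line; the real figure depends on the printer and font.
RECEIPT_WIDTH = 32


def build_prompt(label: str) -> str:
    """Turn the recognised object label into a request for the language model."""
    return f"Describe, in a poetic fashion, what {label} probably smells like."


def format_receipt(text: str, width: int = RECEIPT_WIDTH) -> List[str]:
    """Wrap the description into lines narrow enough for a receipt."""
    return textwrap.wrap(text, width=width)


def sniff(
    photo: bytes,
    identify: Callable[[bytes], str],      # hypothetical: photo -> object label
    describe: Callable[[str], str],        # hypothetical: prompt -> description
    print_line: Callable[[str], object],   # hypothetical: send one line to the printer
    speak: Callable[[str], object],        # hypothetical: read the text aloud
) -> str:
    """Run one sniff: identify the object, describe its smell, print and speak it."""
    label = identify(photo)
    description = describe(build_prompt(label))
    for line in format_receipt(description):
        print_line(line)
    speak(description)
    return description
```

Passing the four services in as plain functions keeps the sketch runnable without any hardware: swap in `print` for `print_line` and a lambda returning a fixed label for `identify`, and the whole chain can be tried on a laptop.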

These artsy types come up with the w̶e̶i̶r̶d̶e̶s̶t̶ best ideas

This unique sensory experience was created for Adnan’s graduate thesis at NYU’s Tisch School of the Arts ITP (Interactive Telecommunications Program). He exhibited it at Olfactory Art Keller in New York’s Chinatown, where visitors brought along their own objects for the sculpture to sniff.

2 comments

Michael Horne

That’s… I don’t know whether to be impressed or slightly revolted…

We’ll go with impressed. An interesting use of combining technology!

Jonas Kastner

haHaHa
