Introducing the Raspberry Pi AI HAT+ 2: Generative AI on Raspberry Pi 5

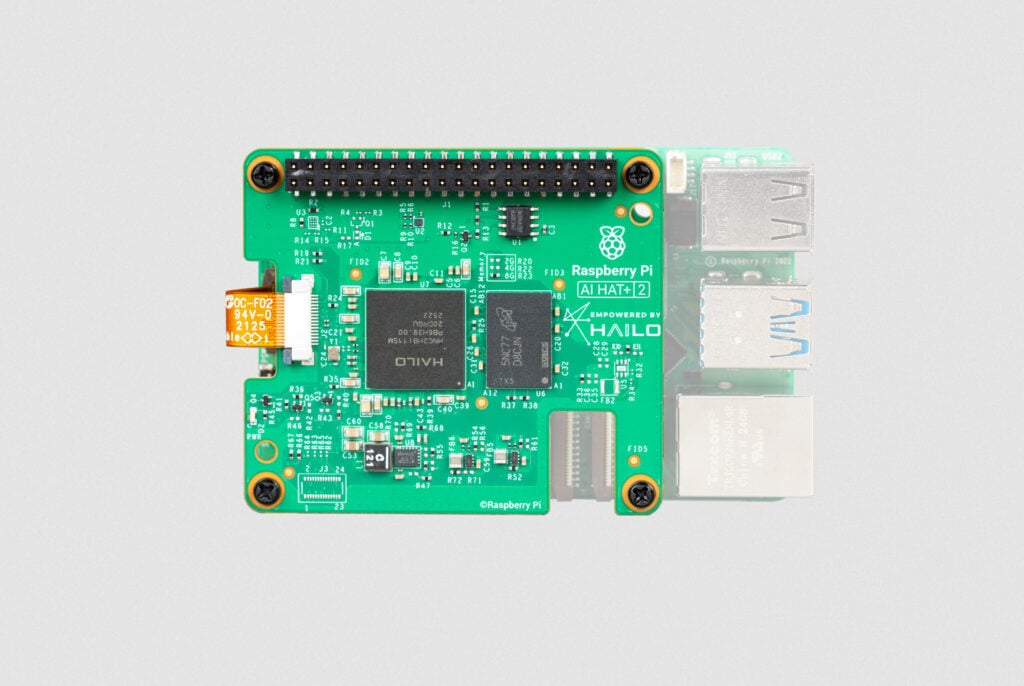

A little over a year ago, we introduced the Raspberry Pi AI HAT+, an add-on board for Raspberry Pi 5 featuring the Hailo-8 (26-TOPS variant) and Hailo-8L (13-TOPS variant) neural network accelerators. With all AI processing happening directly on the device, the AI HAT+ delivered true edge AI capabilities to our users, giving them data privacy and security while eliminating the need to subscribe to expensive cloud-based AI services.

While the AI HAT+ provides best-in-class acceleration for vision-based neural network models, including object detection, pose estimation, and scene segmentation, it lacks the capability to run the increasingly popular generative AI (GenAI) models. Today, we are excited to announce the Raspberry Pi AI HAT+ 2, our first AI product designed to fill the generative AI gap.

Unlock generative AI on your Raspberry Pi 5

Featuring the new Hailo-10H neural network accelerator, the Raspberry Pi AI HAT+ 2 delivers 40 TOPS (INT4) of inferencing performance, ensuring generative AI workloads run smoothly on Raspberry Pi 5. Performing all AI processing locally and without a network connection, the AI HAT+ 2 operates reliably and with low latency, maintaining the privacy, security, and cost-efficiency of cloud-free AI computing that we introduced with the original AI HAT+.

Unlike its predecessor, the AI HAT+ 2 features 8GB of dedicated on-board RAM, enabling the accelerator to efficiently handle much larger models than previously possible. This, along with an updated hardware architecture, allows the Hailo-10H chip to accelerate large language models (LLMs), vision-language models (VLMs), and other generative AI applications.

For vision-based models — such as Yolo-based object recognition, pose estimation, and scene segmentation — the AI HAT+ 2’s computer vision performance is broadly equivalent to that of its 26-TOPS predecessor, thanks to the on-board RAM. It also benefits from the same tight integration with our camera software stack (libcamera, rpicam-apps, and Picamera2) as the original AI HAT+. For users already working with the AI HAT+ software, transitioning to the AI HAT+ 2 is mostly seamless and transparent.

Some example applications

The following LLMs will be available to install at launch:

| Model | Parameters |
| --- | --- |
| DeepSeek-R1-Distill | 1.5 billion |
| Llama3.2 | 1 billion |
| Qwen2.5-Coder | 1.5 billion |
| Qwen2.5-Instruct | 1.5 billion |
| Qwen2 | 1.5 billion |

More (and larger) models are being readied for updates, and should be available to install soon after launch.

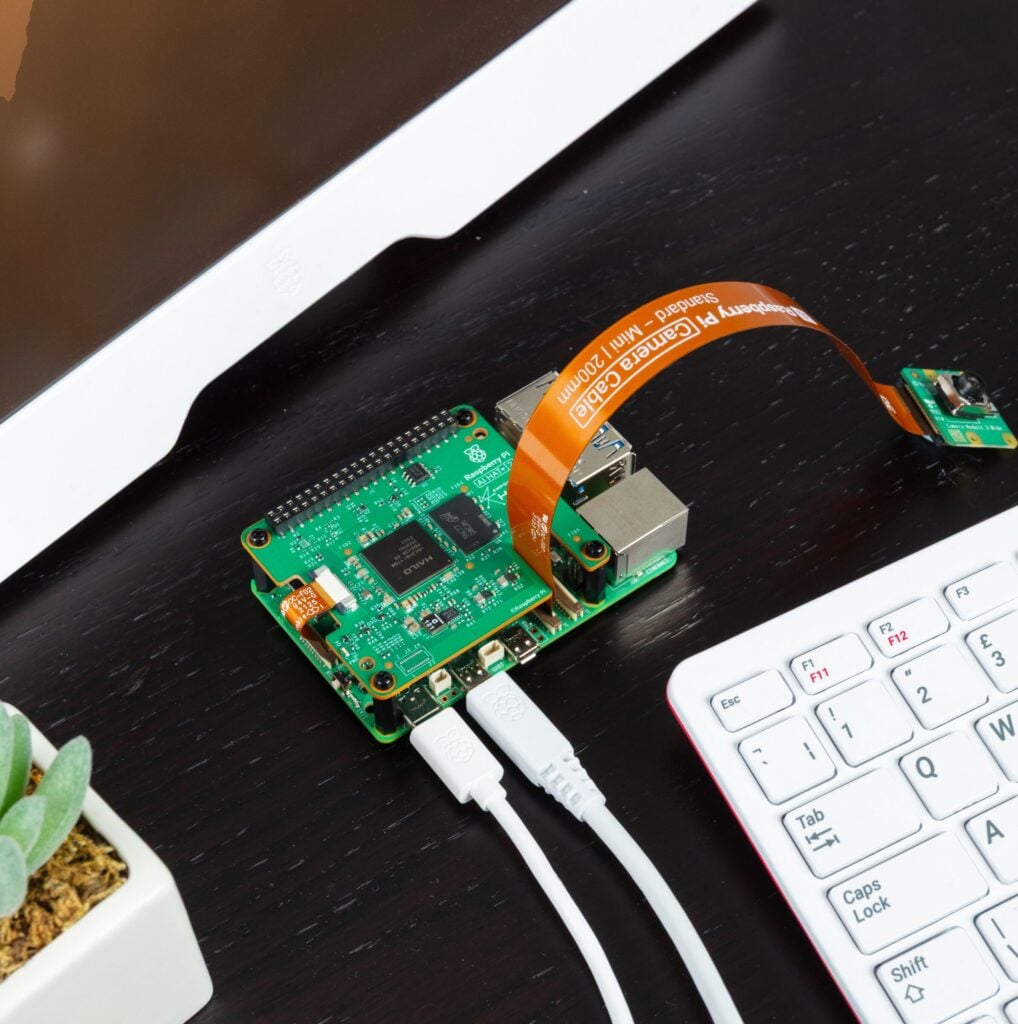

Let’s take a quick look at some of these models in action. The following examples use the hailo-ollama LLM backend (available in Hailo’s Developer Zone) and the Open WebUI frontend, providing a familiar chat interface via a browser. All of these examples are running entirely locally on a Raspberry Pi AI HAT+ 2 connected to a Raspberry Pi 5.
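If you would rather script these conversations than use a browser, here is a minimal Python sketch using only the standard library. It assumes that hailo-ollama exposes the same `/api/chat` endpoint and JSON request/response format as upstream Ollama (which is what allows Open WebUI to act as its frontend). The server address and model tag below are hypothetical placeholders, not documented defaults, so check Hailo's Developer Zone documentation for the real values.

```python
import json
import urllib.request

# Hypothetical placeholders: substitute the address your hailo-ollama server
# actually listens on and a model tag it actually reports.
SERVER_URL = "http://localhost:8000"
MODEL = "qwen2:1.5b"


def build_chat_payload(model, prompt, system=None):
    """Build a non-streaming request body in the Ollama /api/chat format."""
    messages = []
    if system is not None:
        messages.append({"role": "system", "content": system})
    messages.append({"role": "user", "content": prompt})
    return {"model": model, "messages": messages, "stream": False}


def chat(prompt, system=None, url=SERVER_URL, model=MODEL, timeout=120):
    """POST one chat turn and return the assistant's reply text.

    Assumes the server speaks the Ollama chat API (an assumption, see above).
    """
    body = json.dumps(build_chat_payload(model, prompt, system)).encode("utf-8")
    request = urllib.request.Request(
        url.rstrip("/") + "/api/chat",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        reply = json.load(response)
    # A non-streaming Ollama chat response carries the text in message.content.
    return reply["message"]["content"]
```

With a server running, a call such as `print(chat("Translate into English: Le chat dort sur le canapé."))` sends a single request and waits for the full reply. Setting `"stream": True` would instead return the answer as a sequence of newline-delimited JSON objects, which this sketch does not handle.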

The first example uses the Qwen2 model to answer a few simple questions:

The next example uses the Qwen2.5-Coder model to perform a coding task:

This example does some simple French-to-English translation using Qwen2:

The final example shows a VLM describing the scene from a camera stream:

Fine-tune your AI models

By far the most popular examples of generative AI models are LLMs like ChatGPT and Claude, text-to-image/video models like Stable Diffusion and DALL-E, and, more recently, VLMs that combine the capabilities of vision models and LLMs. Although the examples above showcase the capabilities of the available AI models, keep their limitations in mind. Cloud-based LLMs from OpenAI, Meta, and Anthropic are estimated to range from 500 billion to 2 trillion parameters, whereas the edge-based LLMs running on the Raspberry Pi AI HAT+ 2, which are sized to fit into the available on-board RAM, typically have 1–7 billion parameters. Smaller LLMs like these are not designed to match the breadth of knowledge of the larger models; rather, they are meant to operate within a more constrained dataset.
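A back-of-envelope calculation shows why on-board RAM sets the ceiling on model size. The sketch below estimates only the memory needed to store a model's weights at a given precision. Treating the edge models as 4-bit is an assumption (the 40 TOPS figure is quoted for INT4, but the precision any particular model is compiled at may differ), and the calculation deliberately ignores KV cache, activations, and runtime overhead, which is why it keeps an arbitrary 25% of the 8GB free as headroom.

```python
# Back-of-envelope weight-memory estimate. It ignores KV cache, activations
# and runtime overhead, so fits_in_budget() reserves some headroom instead.

BOARD_RAM_GB = 8.0  # dedicated on-board RAM on the AI HAT+ 2


def weight_gb(params_billion, bits_per_weight):
    """Gigabytes (10**9 bytes) needed to store the weights alone."""
    return params_billion * bits_per_weight / 8


def fits_in_budget(params_billion, bits_per_weight, budget_gb=BOARD_RAM_GB, headroom=0.25):
    """True if the weights fit while leaving `headroom` of the budget free.

    The 25% headroom is an illustrative assumption, not a measured figure.
    """
    return weight_gb(params_billion, bits_per_weight) <= budget_gb * (1 - headroom)


for params, bits in [(1.5, 4), (1.5, 16), (7, 4), (7, 8), (500, 4), (2000, 4)]:
    print(f"{params:>6g}B params @ {bits:>2}-bit: "
          f"{weight_gb(params, bits):8.2f} GB, fits: {fits_in_budget(params, bits)}")
```

At 4 bits per weight, a 7-billion-parameter model needs about 3.5 GB for its weights, while a 500-billion-parameter model would need roughly 250 GB, far beyond what any add-on board carries.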

This limitation can be mitigated by fine-tuning the AI models for your specific use case. On the original Raspberry Pi AI HAT+, visual models (such as Yolo) can be retrained using image datasets suited to the HAT’s intended application; the same is true of the Raspberry Pi AI HAT+ 2, and this can be done using the Hailo Dataflow Compiler.

Similarly, the AI HAT+ 2 supports Low-Rank Adaptation (LoRA)–based fine-tuning of the language models, enabling efficient, task-specific customisation of pre-trained LLMs while keeping most of the base model parameters frozen. Users can compile adapters for their particular tasks using the Hailo Dataflow Compiler and run the adapted models on the Raspberry Pi AI HAT+ 2.
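To illustrate what "keeping most of the base model parameters frozen" means, here is the standard LoRA formulation as a small plain-Python sketch: a frozen weight matrix W is augmented with the product of two small matrices, B (d_out x r) and A (r x d_in), scaled by alpha / r, and only A and B are trained. This shows the general technique only; it is not Hailo's adapter format or the Dataflow Compiler's API, and the toy values, the 1536 x 1536 layer size, and the rank of 8 are arbitrary illustrative choices.

```python
# Minimal LoRA sketch: h = W x + (alpha / r) * B (A x)
# W (d_out x d_in) is frozen; only A (r x d_in) and B (d_out x r) are trainable.


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha):
    """Frozen base projection plus the scaled low-rank update."""
    r = len(A)  # rank = number of rows in A
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


def trainable_params(d_out, d_in, r):
    """Parameter counts for full fine-tuning versus a rank-r LoRA adapter."""
    full = d_out * d_in
    lora = r * (d_in + d_out)
    return full, lora


# Toy 2x2 layer with a rank-1 adapter (illustrative values only).
W = [[1.0, 2.0], [3.0, 4.0]]
x = [1.0, 1.0]
A = [[1.0, 0.0]]
B_init = [[0.0], [0.0]]     # LoRA initialises B to zero, so training starts from the base model
B_trained = [[1.0], [2.0]]  # hypothetical values after some training

print(lora_forward(W, A, B_init, x, alpha=1.0))     # [3.0, 7.0], identical to W x
print(lora_forward(W, A, B_trained, x, alpha=1.0))  # [4.0, 9.0]

full, lora = trainable_params(1536, 1536, r=8)
print(f"full: {full:,}  LoRA: {lora:,}  ({100 * lora / full:.2f}% of the layer)")
```

For the illustrative 1536 x 1536 layer, a rank-8 adapter trains 24,576 values instead of 2,359,296, about 1% of the layer, which is why adapters are cheap to produce and to swap per task.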

Available to buy now

The Raspberry Pi AI HAT+ 2 is available now at $130. For help setting yours up, check out our AI HAT guide.

Hailo’s GitHub repo provides plenty of examples, demos, and frameworks for vision- and GenAI-based applications, such as VLMs, voice assistants, and speech recognition. You can also find documentation, tutorials, and downloads for the Dataflow Compiler and the hailo-ollama server in Hailo’s Developer Zone.

28 comments

Nicko

This is great news! The limitations of the Hailo-8 were not just about memory and performance; the architecture was not well suited to generative AI tasks. The Hailo-10 fixes that. This is going to be particularly useful for speech-to-text (and text-to-speech) tasks.

Of course now all we need is for it to not be twice the price of a Pi5 :-)

Gordon77

Great news..

Is it fully compatible with existing software written for the Hailo-8 version, including Picamera2?

Naushir Patuck — post author

Yes, existing apps should work, but you will need different model files compiled for the H10 NPU. Our picamera2/rpicam-apps based demos now pull the H10 models that we support.

Antonin Lefevre

Nice !

Only with the Pi 5? Or could a high-spec Pi 4 work?

xeny

I’d expect Pi 5 only as it will need the PCIe interface.

dom

It connects through PCIe, so Pi5 only.

Esbeeb

I’ve ordered one! This is a rare occasion where I’ve impulse-bought a new piece of Raspberry Pi kit.

Anders

It’s the other way round for me, I impulse buy everything new that appears from Raspberry Pi.

av

Is it possible to buy the Hailo-10H without the Raspberry Pi AI HAT?

Dave Rensberger

Can anyone summarize the power consumption that should be expected with this HAT for typical AI inference tasks?

Anders

Jeff Geerling has had a look at this already:

https://www.jeffgeerling.com/blog/2026/raspberry-pi-ai-hat-2/

Joonas

Will it be possible to support any multimodal models capable of real-time voice conversations? (can any of them be distilled to run in 8GB, including KV cache + audio tokenization/decoding + runtime overhead)

Mark Tomlin

Whisper’s `medium.en` should fit into 5GB, their `large` model is 10GB, and their `small.en` is 2GB. So if you are just doing English, it should handle even their largest English-only model, `medium.en`. I’m pretty excited, as this is exactly my use case!

Esbeeb

Great tip, thanks!

Peter

I run Parakeet V3 for multiple languages; V2 is smaller and English-only.

Christian Hatley

I wanted the Raspberry Pi 6 :(

YKN

They made the Raspberry Pi 500+ before a Raspberry Pi 5B+ or a 5A… I just don’t see why you can’t plug a mechanical keyboard into the Pi 5. Pi 6 would be nice, but I don’t know if it’s a good idea with the RAM shortage driving up prices.

Christian Hatley

I think B+ and A are discontinued. I guess they merged? Never saw a 4B+ or 4A.

Kyle Maloney

This is great! Loved the AI Hat+ but have been using an Axera accelerator lately for generative projects. Can’t wait to use this, ordered mine today!

Will the 10H be released in an M.2 form factor as well?

Gordon77

There is this…

https://hailo.ai/products/ai-accelerators/hailo-10h-m-2-ai-acceleration-module/

I don’t know if it works with a Pi, or where you can buy it…

Microscotch

Looks like it works, as demonstrated by Martin Cerven in this video:

https://www.youtube.com/watch?v=yhDjQx-Dmu0

Lars Wattsgard

Great to see this in the daylight, and looking forward to taking it for a spin! I wonder if we can get our tic-tac-toe-playing le-robot onto it? Will let you know! Great work!

Alf

It would be so cool to see an M.2 version, just like the first AI Kit. This version is so much more capable than the first one, but an M.2 version would fit more cases that have fans and such, making everything more compatible and giving better thermals overall.

Lars

Great update to the original AI hat!

Since this is a HAT+, I am wondering whether the HAT is stackable. I see no definitive yes/no answer to this question in the technical spec. My intention is to use the NVMe M.2 HAT+ in conjunction with the AI HAT+.

Thanks!

AndrewS

Both the Raspberry Pi M.2 HAT+ and the various Raspberry Pi AI HATs connect to the PCIe port on the Raspberry Pi 5, so no you can’t use them both at the same time.

James

Hi all, I’m looking at adding the AI HAT+ 2 to my Raspberry Pi 5 running Home Assistant.

Will this fit in the pironman 5 max case? Or should I look at other options to run AI?

Gordon77

This review https://www.cnx-software.com/2025/06/29/pironman-5-max-review-a-fancy-raspberry-pi-5-tower-pc-enclosure-with-dual-m-2-pci-sockets-for-ssd-and-or-ai-accelerator/ fits the AI HAT using a dual NVMe card.

Oleksandr Turevskiy

Would be happy to see Frigate support soon.