Raspberry Pi detects factory defects with machine learning

The folks at Modzy got in touch to tell us about their defect detection platform that uses Raspberry Pi Zero W and a Raspberry Pi Camera Module to pick up mistakes on factory production lines. Their message specifically stated their love for Raspberry Pi, so I was sold.

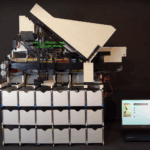

Modzy deploys machine learning models in the cloud and at the edge. They built the demo above to show their manufacturing customers how easy and affordable it is to detect defects using machine learning in a factory.

How it works

Hardware:

- Raspberry Pi Zero W

- Raspberry Pi Camera Module 3

- NVIDIA Jetson Nano running a computer vision model

- A “conveyer belt” consisting of a cake decorating turntable with a motor attached

A Raspberry Pi Camera Module acts as the eyes of the production line monitor, feeding real-time images from the production line. A computer vision model analyses the images to detect broken teeth, scratches, and dents in 3D-printed spur gears as they roll through on the homemade “conveyer belt”. When the model detects a defect, Modzy’s system updates and timestamps a log, recording what was wrong with the spur gear and exactly when it rolled past the detection point on the production line.
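The logging step described above could be sketched roughly as follows. This is a minimal illustration in Python, not Modzy's actual code: the `Detection` class, the `log_defects` function, the class names, and the 0.5 confidence threshold are all assumptions made for the example, and the model output is a hard-coded stand-in rather than real inference.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Detection:
    label: str         # hypothetical class name, e.g. "broken_tooth" or "scratch"
    confidence: float  # model score between 0 and 1


def log_defects(detections, log, threshold=0.5, now=None):
    """Append one timestamped entry to `log` per detection at or above `threshold`."""
    # Use the supplied time if given (handy for testing), else the current UTC time.
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    entries = [
        {"time": timestamp, "defect": d.label, "confidence": d.confidence}
        for d in detections
        if d.confidence >= threshold
    ]
    log.extend(entries)
    return entries


# Stand-in for one frame's model output; a real system would get this
# from the computer vision model rather than a hard-coded list.
frame_detections = [Detection("broken_tooth", 0.91), Detection("scratch", 0.32)]
defect_log = []
logged = log_defects(
    frame_detections,
    defect_log,
    now=datetime(2022, 1, 1, 12, 0, tzinfo=timezone.utc),
)
```

In this sketch only the high-confidence "broken_tooth" detection ends up in `defect_log`; the low-confidence "scratch" falls below the assumed threshold and is skipped.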

Low-cost efficiency

This defect detection platform cost less than $150 to build.

Modzy is particularly proud that this model achieved three out of three on speed, security, and cost:

- It’s fast thanks to low-latency GPU-powered inference (meaning it can spot defects as quickly as the production line rolls)

- It’s secure, since it can operate completely offline

- It’s cost effective, since no cloud computing is needed and the hardware is affordable

More machine learning from Modzy

The Modzy folks are big into Raspberry Pi for machine learning and have built two other apps:

- Their Air Quality Index Prediction detects current air quality with Raspberry Pi 3B+, and uses that data to generate a prediction for the next hour.

- Their Hugging Face NLP Server deploys and runs a Hugging Face model on Raspberry Pi with Docker.

6 comments

Eric

Can someone explain why two SBCs are used? Why is the camera not attached to the Jetson?

Michael

My best guess: the Nano has more horsepower for machine learning applications, so they could probably feed several Raspberry Pi cameras into one Nano. That would definitely reduce the cost. The Nano is about $150 per unit, whereas the Zero is $15, I believe (those numbers are from the last time I looked them up). So essentially a client-server setup.

Seth

Good question, Eric. We’re actually using two cameras on this rig. The first camera, which watches the gears on the turntable, is connected directly to the Jetson Nano for inference. The second camera, connected to the Pi Zero W, is pointed at the Nano itself to “prove” this is happening in real time. So we’re treating the Pi Zero W kind of like an IP camera for the sake of the demo.

Kevin

Is it really using AI or just template matching? Too many times I see the media state AI when it’s not. :-(

Seth

Great question! The model used here is a PyTorch/YOLOv5 model that was trained to identify 3 classes: broken teeth, punctures, and scratches. You’re welcome to check out the model here and play around with it if you happen to have any spur gears lying around: https://hub.docker.com/r/bmunday131/defect-detection-yolov5-arm/tags

Raspberry Pi Staff Ashley Whittaker — post author

HI SETH!
